The proliferation of AI writing tools has dramatically accelerated the content creation process, but according to a recent analysis, the efficiency gains in writing are superficial. The core challenge in content marketing remains the acquisition and verification of information (ideas, factual accuracy, and reference material), an area where current AI tools reportedly falter. The conclusion comes from an experiment in which the author generated 40 articles by working directly with Claude, an AI language model, rather than through intermediary writing platforms.

The author distinguishes between general AI writing tools, such as Jasper, Frase, and Writesonic, which are built on large language models (LLMs), and the direct use of an LLM like Claude with a curated set of personal files and a defined process. The author acknowledges that existing writing tools can be a viable option for people who lack strong writing or SEO skills or who are short on time, but argues that they act as a ceiling rather than a floor: they cap content quality for anyone aiming at higher standards.

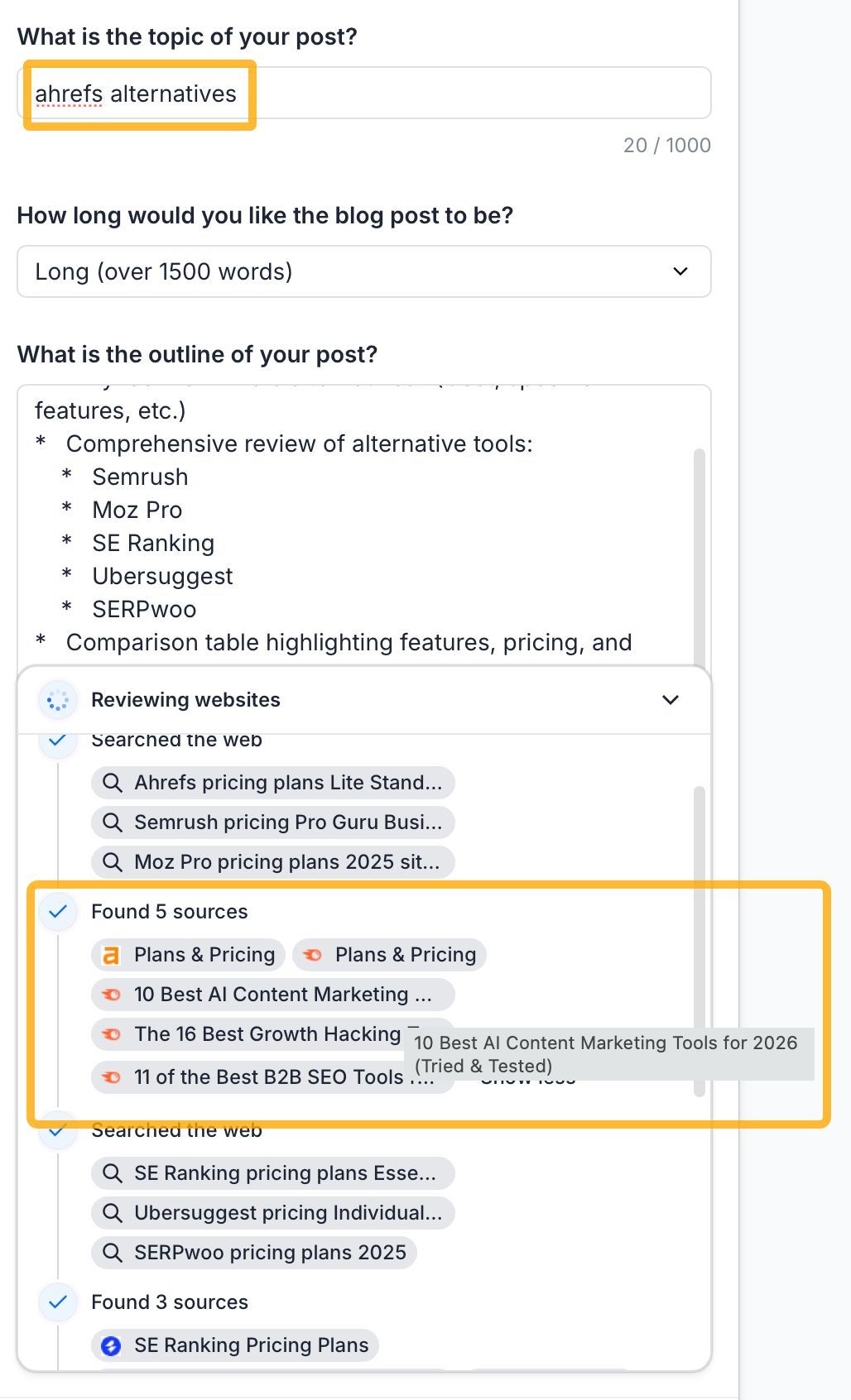

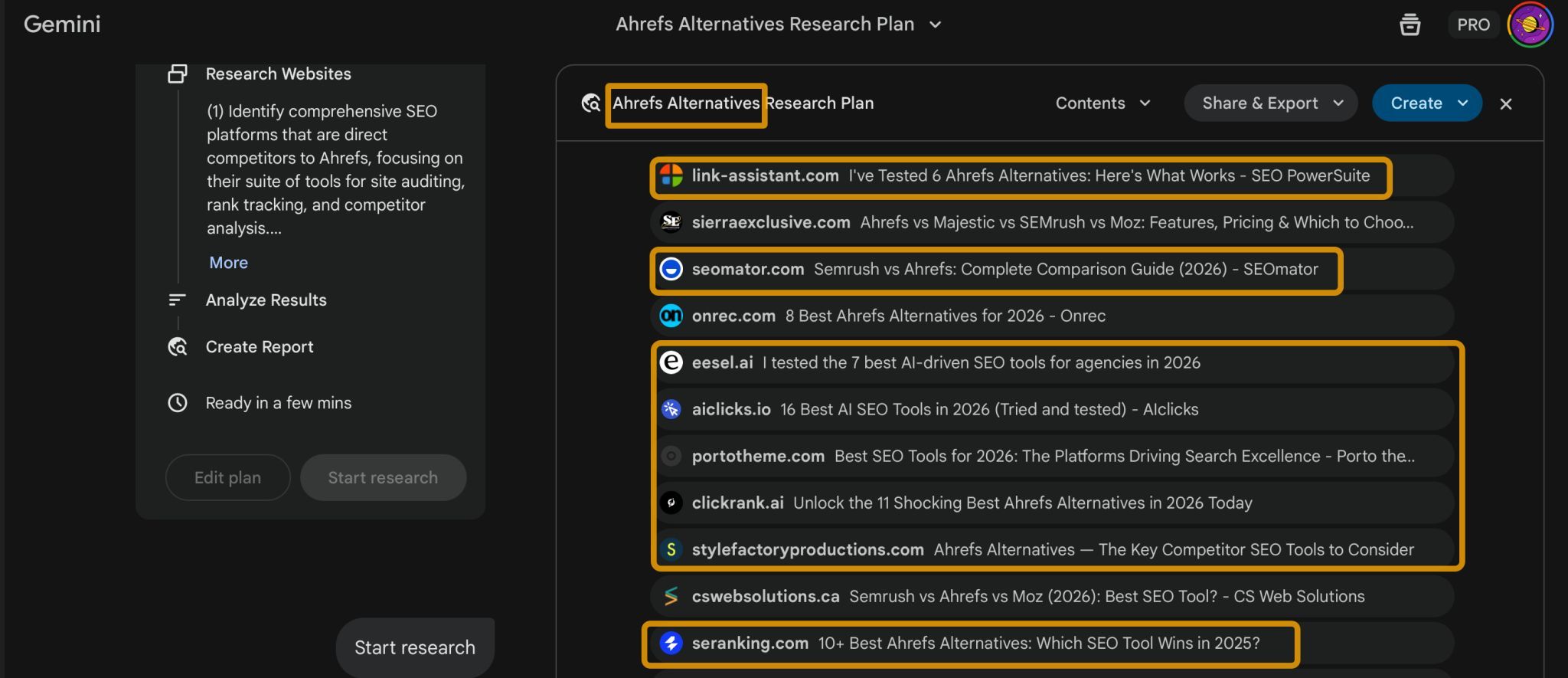

The first issue identified is how AI writing tools conduct research. Most of them "fact-check" by cross-referencing information against whatever currently ranks on search engines. This often recycles existing content, including competitor marketing pages, outdated blog posts, and articles that have already copied data from other sources. The process can inadvertently "launder errors through consensus": when many pages repeat the same mistake, the AI treats the aggregated misinformation as fact. The experiment produced instances of incorrect pricing, inaccurate features, and significant discrepancies in numerical data, often drawn from biased or unreliable sources whose poor quality the AI could not recognize. For example, one tool used Gemini Deep Research, which, like other AI assistants, was found to rely on existing search rankings for its data.

Comprehensive content, such as a comparison of eight products, makes this limitation obvious. For such a task the AI has to reference multiple fact-checked documents for each product, alongside style guides and editing checklists. The author found that no tested writing tool could effectively manage this volume of reference material.

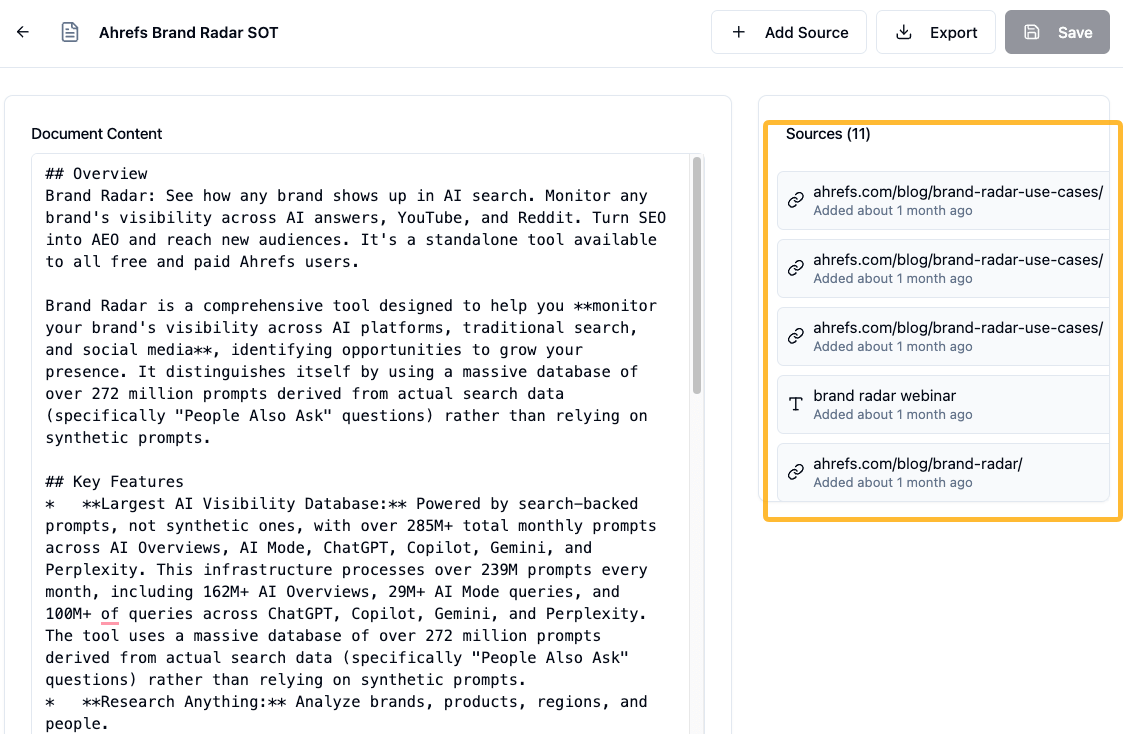

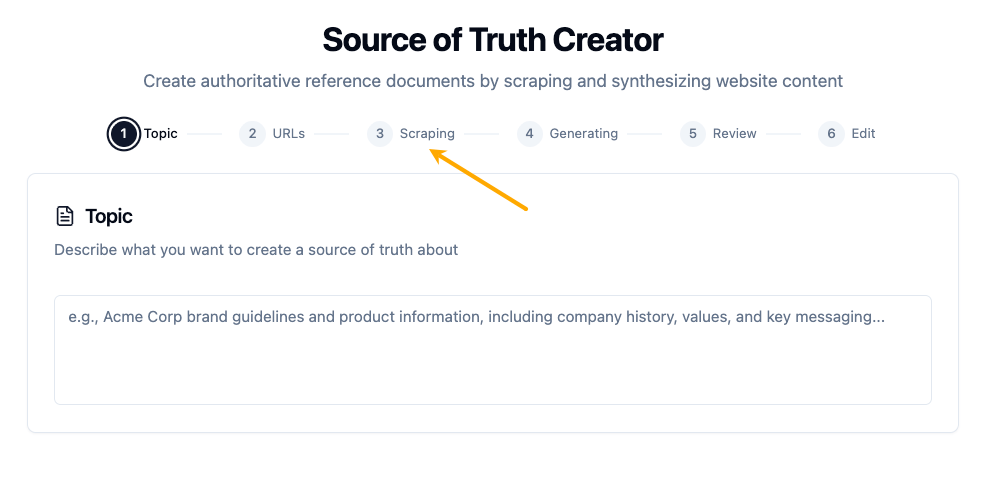

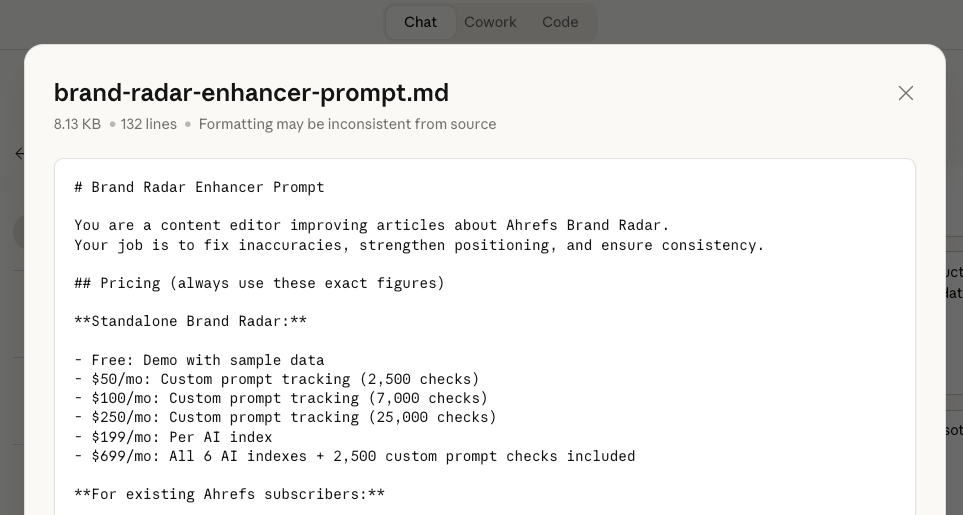

To address this "research problem," the proposed solution is to meticulously build and maintain personal reference files. This involves creating verified data documents for each product and competitor. A knowledge base for one’s own products, detailing pricing, features, and use cases, should be established in a readily accessible format. For competitor content, specific documents detailing their pricing pages, feature lists, and limitations must be prepared. The author advocates dedicating a significant portion of the content creation timeline, potentially three weeks out of four, to the development of these foundational reference files, emphasizing that no AI content generation should commence until these are complete.
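One way to picture such a reference file is as a list of individually verified facts, each carrying its source and the date a human last checked it. The sketch below is only an illustration of that idea: the `ReferenceFact` fields, the 90-day re-check window, and the sample entries are all assumptions, not details from the author's setup.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ReferenceFact:
    """One verified claim in a source-of-truth file (all fields are illustrative)."""
    product: str
    field: str          # e.g. "starter_price_usd"
    value: str
    source: str         # where a human checked it, e.g. the vendor's pricing page
    verified_on: date   # when a human last confirmed it


def stale_facts(facts, today, max_age_days=90):
    """Return facts whose last human verification is older than max_age_days."""
    cutoff = today - timedelta(days=max_age_days)
    return [f for f in facts if f.verified_on < cutoff]


# Hypothetical entries; the products and values are placeholders, not real data.
facts = [
    ReferenceFact("Product X", "starter_price_usd", "29", "vendor pricing page", date(2025, 1, 10)),
    ReferenceFact("Product Y", "seat_limit", "5", "vendor docs", date(2025, 5, 2)),
]

needs_recheck = stale_facts(facts, today=date(2025, 6, 1))
print([f.product for f in needs_recheck])
```

Recording a verification date alongside each value makes it possible to see which facts are due for a manual re-check before any article is generated from them.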

A second challenge identified is the "process problem," where writing tools attempt a "one-shot" article generation, contrasting with the iterative and nuanced nature of effective writing. The author likens the AI writing tool approach to an assembly line, whereas good writing is akin to cooking, involving continuous tasting, adjustments, and even spontaneous creative detours. The author’s experience indicated that achieving the desired brand voice, even with specific instructions or style files, consistently required five to six rounds of editing. This level of granular editing – from individual sentences to entire sections or article-wide patterns – proved difficult with the fixed editing options offered by most writing tools, necessitating a more conversational interaction.

The suggested solution is to deconstruct the writing workflow into a series of repeatable prompts or "skills." Each distinct task, such as refining a specific aspect of the content or ensuring adherence to brand guidelines, should have a dedicated prompt. Through trial and error, these prompts can be refined to achieve optimal results. The author notes that these refined prompts can later be integrated into automated workflows, such as Claude’s "skills" feature. For critical steps, running prompts twice or using a second AI for cross-verification is recommended to catch any missed nuances.
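As a rough illustration of the "one prompt per task" idea, each skill can be stored as a named template and run through whatever model interface is available. The skill names, the prompt wording, and the `fake_llm` stand-in below are hypothetical; this is not the format of Claude's skills feature, only a minimal sketch of the pattern.

```python
from typing import Callable

# Each "skill" is a named, reusable prompt template. The names and wording
# below are hypothetical examples, not prompts from the original experiment.
SKILLS = {
    "tighten_intro": "Rewrite the introduction in under 80 words. Keep every fact.\n\n{draft}",
    "brand_voice": "Edit this draft to match the style guide below.\n\n{style_guide}\n\n{draft}",
}


def run_skill(name: str, llm: Callable[[str], str], **inputs: str) -> str:
    """Fill the named template and pass it to any text-in, text-out model function."""
    prompt = SKILLS[name].format(**inputs)
    return llm(prompt)


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call so the sketch runs offline."""
    return f"[model output for a {len(prompt)}-character prompt]"


draft = "Our tool helps teams ship faster."
result = run_skill("tighten_intro", fake_llm, draft=draft)
print(result)
```

Keeping the templates in one place means a prompt that has been refined through trial and error is reused verbatim, and the same skill can be run a second time or sent to a second model by swapping the `llm` argument.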

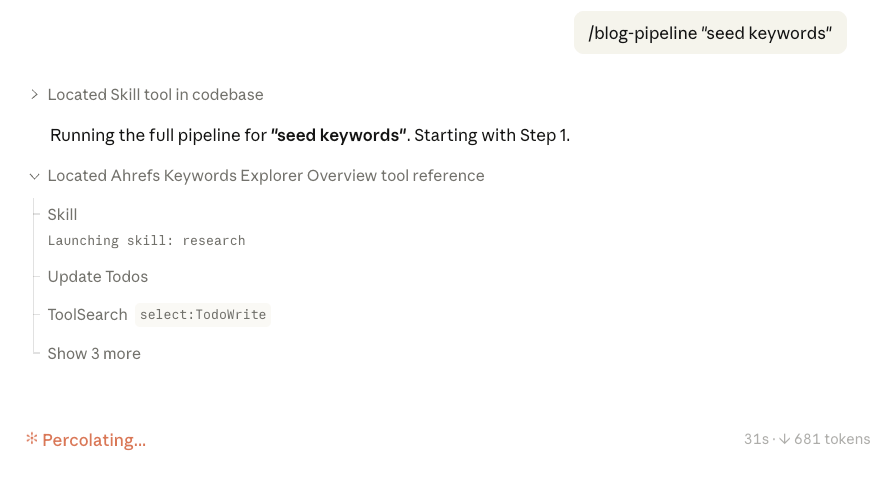

The "scale problem" arises from writing tools treating each article as an isolated entity, hindering efficient large-scale content production. While some tools offer workflow features for automation, they are often described as difficult to build, with limited human-in-the-loop functionality and output that drifts as requirements become more complex. The author points to AI assistants and specifically Claude Code as having already solved this by enabling more comprehensive automation. Instructions like "scan every article for Product X’s pricing and check it against the reference file" can initiate a complete process, with the AI making adjustments as needed.
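Stripped to its simplest form, the pricing check quoted above amounts to comparing every price mention against a verified value. The sketch below is not how Claude Code carries out such an instruction; it is a minimal, assumption-laden illustration using in-memory strings, a hypothetical `REFERENCE_PRICES` entry, and a naive regular expression for dollar amounts.

```python
import re

# Hypothetical reference entry: the verified price a human confirmed.
REFERENCE_PRICES = {"Product X": "49"}

# Hypothetical article texts keyed by filename.
articles = {
    "comparison.md": "Product X starts at $49 per month and includes reports.",
    "review.md": "At $39 per month, Product X is a popular pick. Product X costs $49 on annual plans.",
}


def find_price_mismatches(articles, product, expected):
    """Flag sentences that mention the product with a dollar amount other than the expected one."""
    mismatches = []
    for name, text in articles.items():
        # Naive sentence split; good enough for a sketch.
        for sentence in re.split(r"(?<=[.!?])\s+", text):
            if product not in sentence:
                continue
            for amount in re.findall(r"\$(\d+(?:\.\d+)?)", sentence):
                if amount != expected:
                    mismatches.append((name, amount, sentence))
    return mismatches


mismatches = find_price_mismatches(articles, "Product X", REFERENCE_PRICES["Product X"])
for name, amount, sentence in mismatches:
    print(f"{name}: found ${amount}, expected ${REFERENCE_PRICES['Product X']}: {sentence}")
```

A script like this only flags candidates. Deciding whether a flagged figure is an error or a legitimately different price, such as a discount, still calls for the human review the author stresses.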

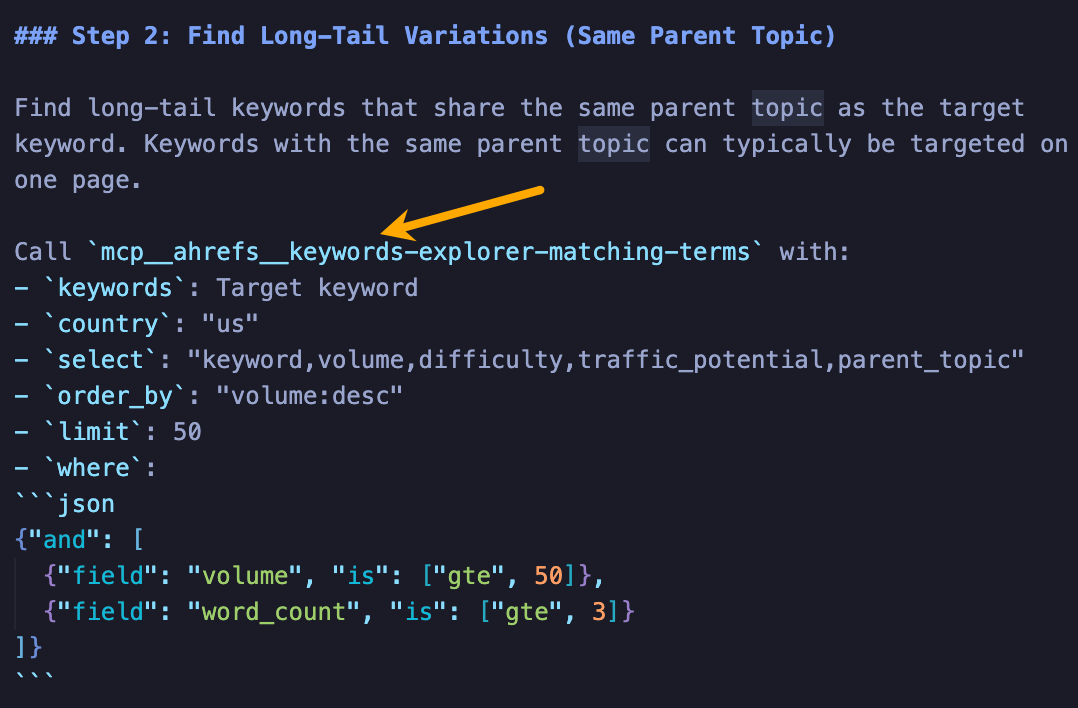

The recommended approach here is to become proficient in working with tools like Claude Code. A single instruction can trigger a multi-phase process that includes fetching SEO data, accessing reference files, web scraping, and phased article generation. The author envisions integrations with research tools like Ahrefs’ MCP, enabling direct data piping into these workflows for automated SEO research. Even without direct integrations, manually pulling data or using screenshots as input for the AI is a viable alternative.

The "economics problem" is the cost disparity between flexible AI chatbots and more restrictive writing tools. A $20 monthly chatbot subscription offers access to the latest models without generation limits, whereas writing tools that cost significantly more ($50-$200, or even $2,000, per month) often use older models and cap output. In effect, users pay a premium for a less capable service.

The author describes a workflow in which the top-cited articles for a keyword are retrieved using Ahrefs’ Brand Radar, Claude extracts their structure to form an outline, and personal ideas are then woven in. This process covers research, structure, and writing within a single conversation and allows control at every stage. The author concedes that some users may prefer an all-in-one writing tool but considers a personal return to dedicated AI writing tools unlikely. The rapid pace of LLM development also means that wrappers built on older models risk becoming obsolete as newer LLMs are released.
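To put the monthly prices quoted in this section on an annual footing, the arithmetic can be laid out as follows. The tier labels are only names chosen for this sketch; the dollar figures are the ones given in the text.

```python
# Monthly prices quoted in the text, in USD.
CHATBOT = 20
WRITING_TOOLS = {"low": 50, "high": 200, "top tier": 2000}

chatbot_annual = CHATBOT * 12
for tier, monthly in WRITING_TOOLS.items():
    annual = monthly * 12
    ratio = annual / chatbot_annual
    print(f"{tier}: ${annual:,}/year, {ratio:.1f}x the chatbot's ${chatbot_annual}/year")
```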

The proposed solution to this economic disparity is to invest more resources into the data fed to the AI, rather than the AI wrapper itself. This includes dedicating time and budget to creating robust source-of-truth files and ensuring human judgment remains integral to the process. This strategy also allows for greater adaptability as AI models evolve and content requirements shift.

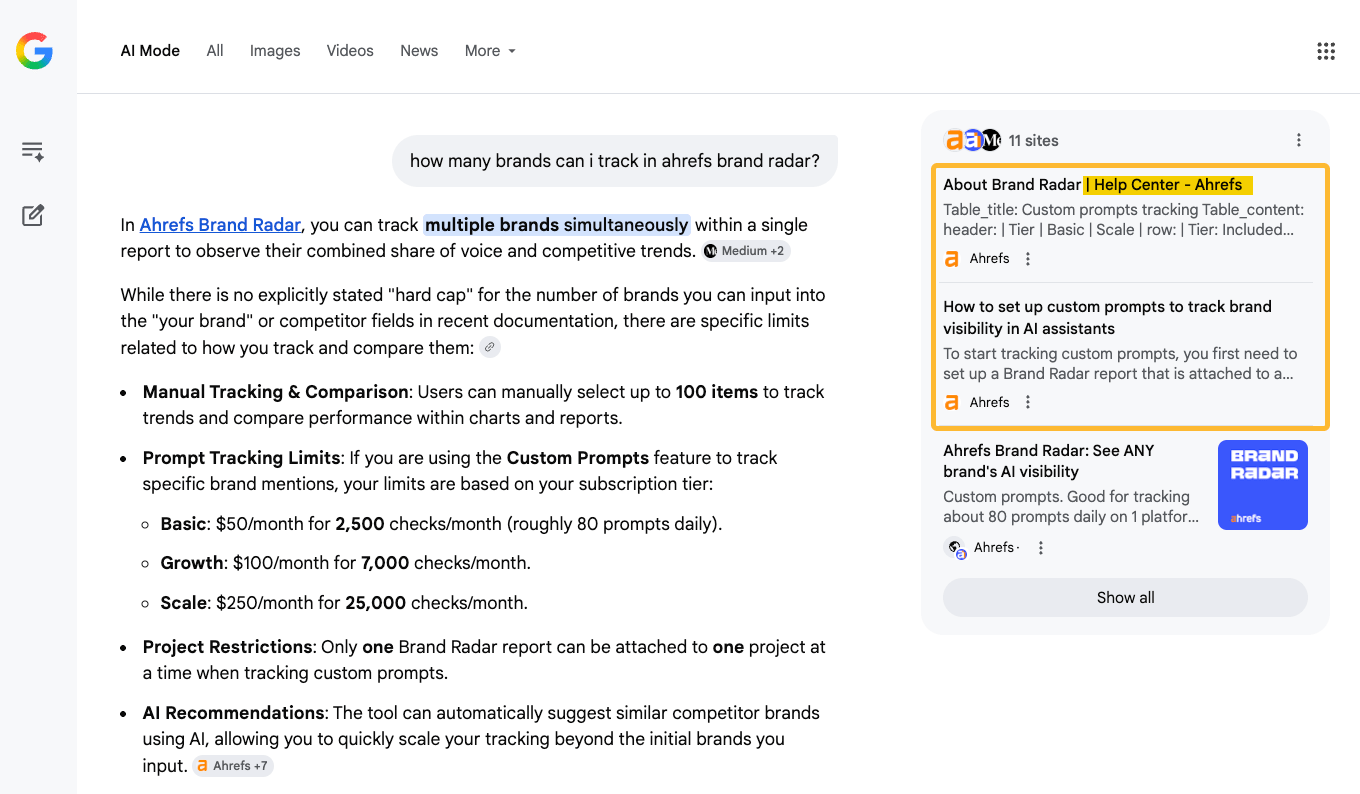

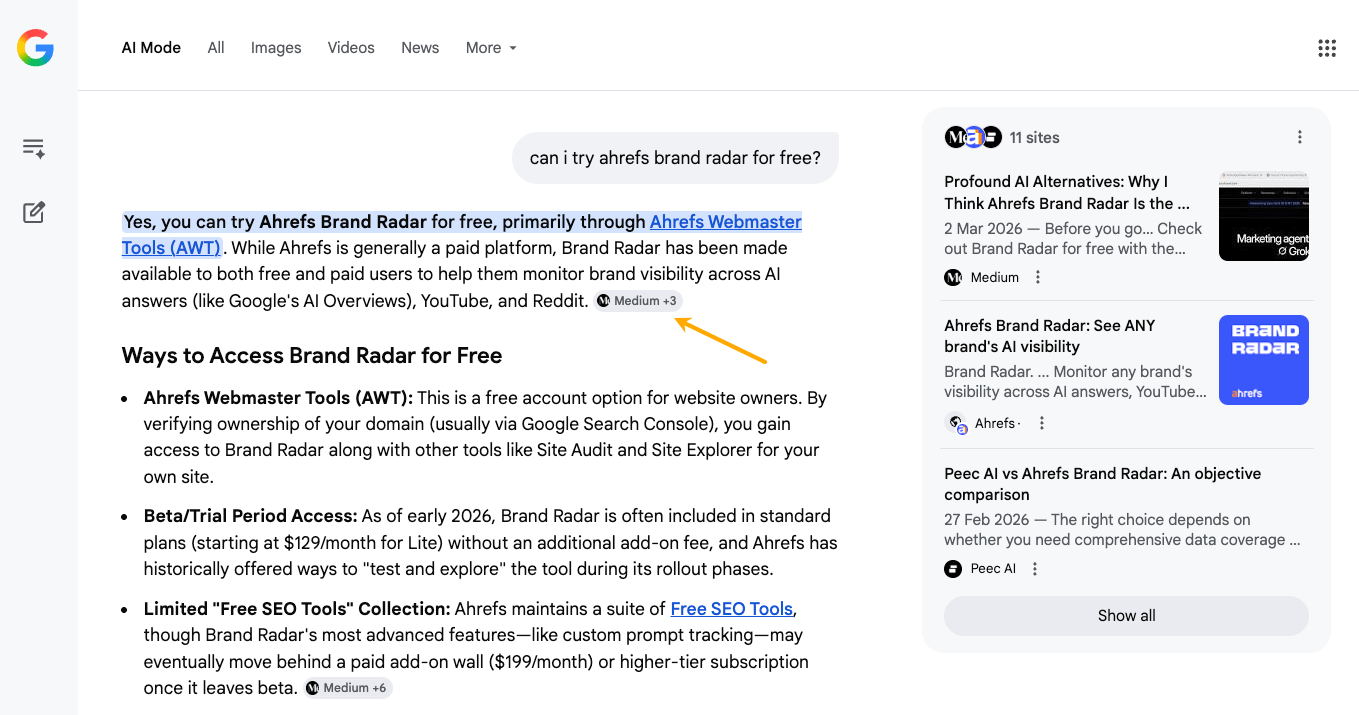

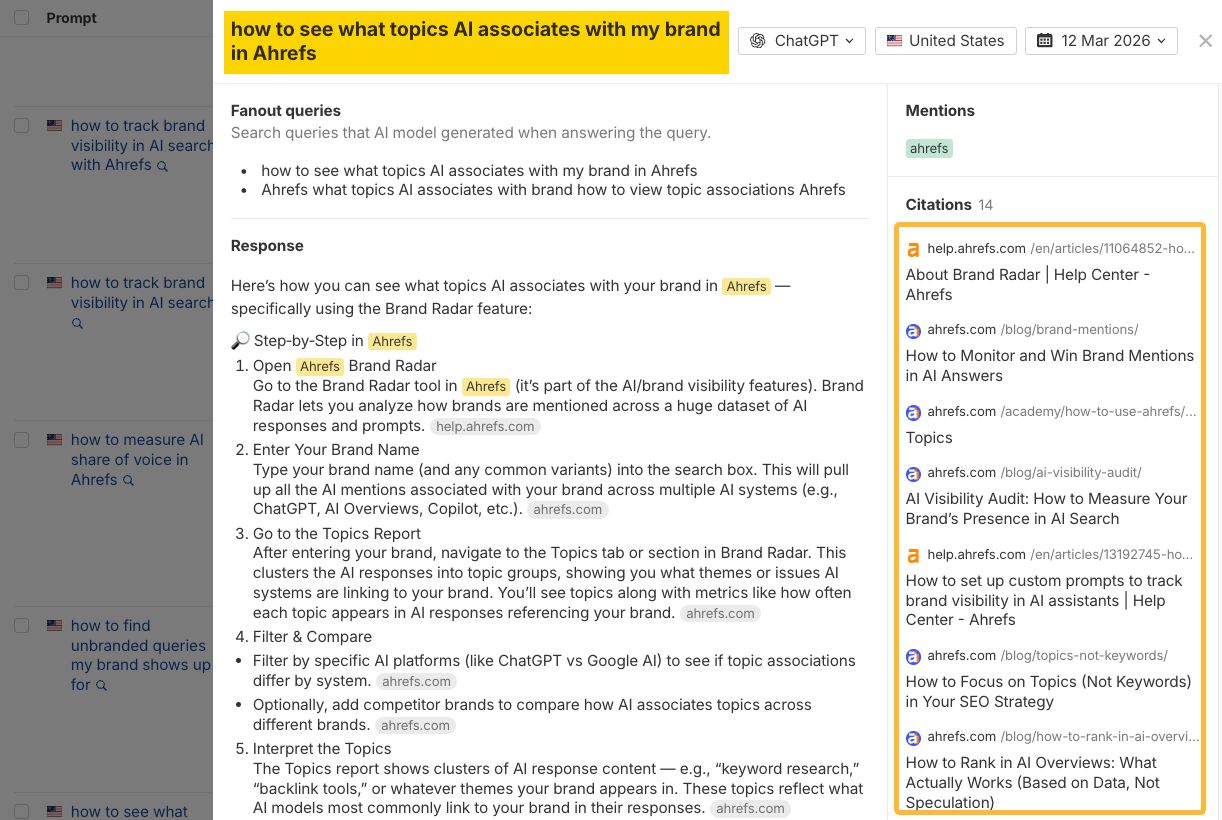

Finally, the "content strategy problem" addresses the tendency of writing tools to apply a single process to what are fundamentally two distinct types of content: searchable content and shareable content. Searchable content, such as product documentation, help articles, and comparison pages, has become critical as AI models increasingly rely on published information to ground their answers and avoid hallucination. The author posits that a company’s product documentation now serves as its brand’s voice within AI conversations. An example cited is an AI assistant directly citing Ahrefs’ documentation when asked about the number of brands trackable in Brand Radar. Gaps in this documentation can lead to AI answers lacking official citations, potentially relying on secondary sources or even fabricating information.

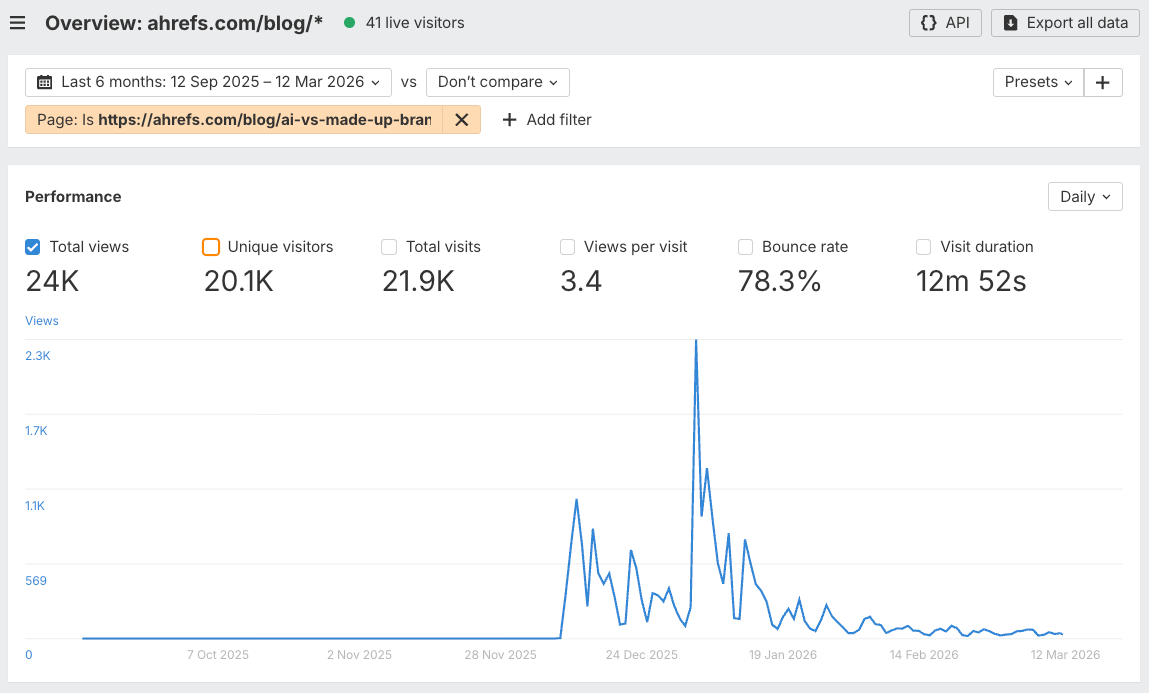

Shareable content, conversely, is defined as deeply human-first, experiential content that cannot be templated. The author’s AI misinformation experiment, which ranked poorly but generated significant traffic and social engagement, exemplifies this category.

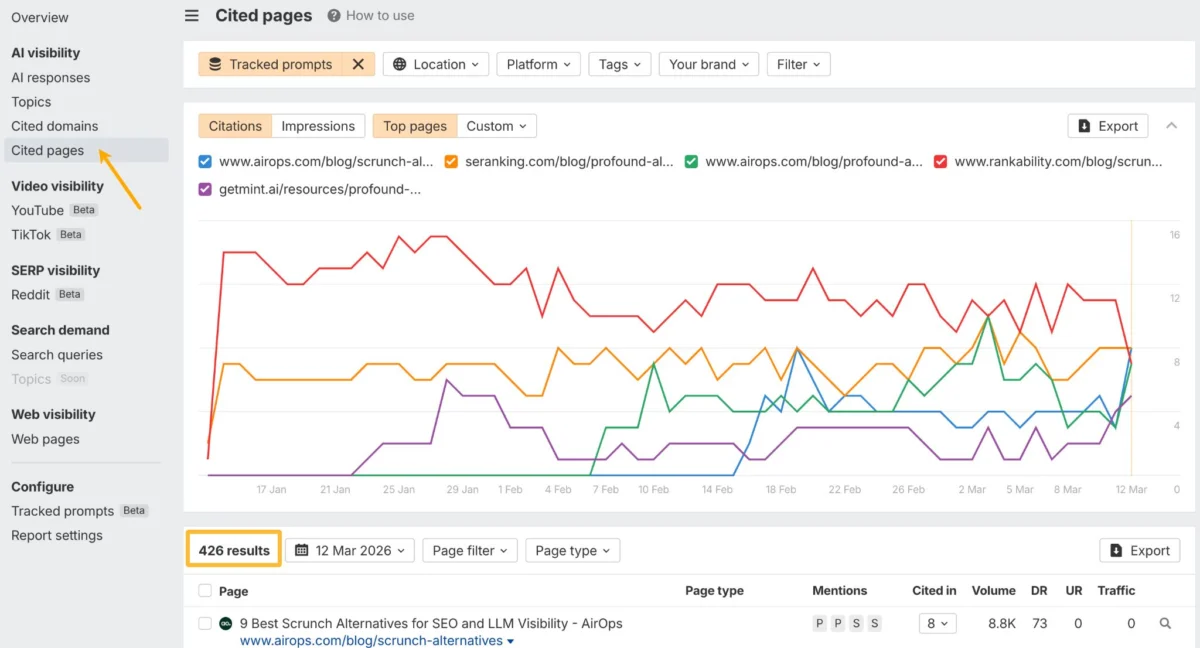

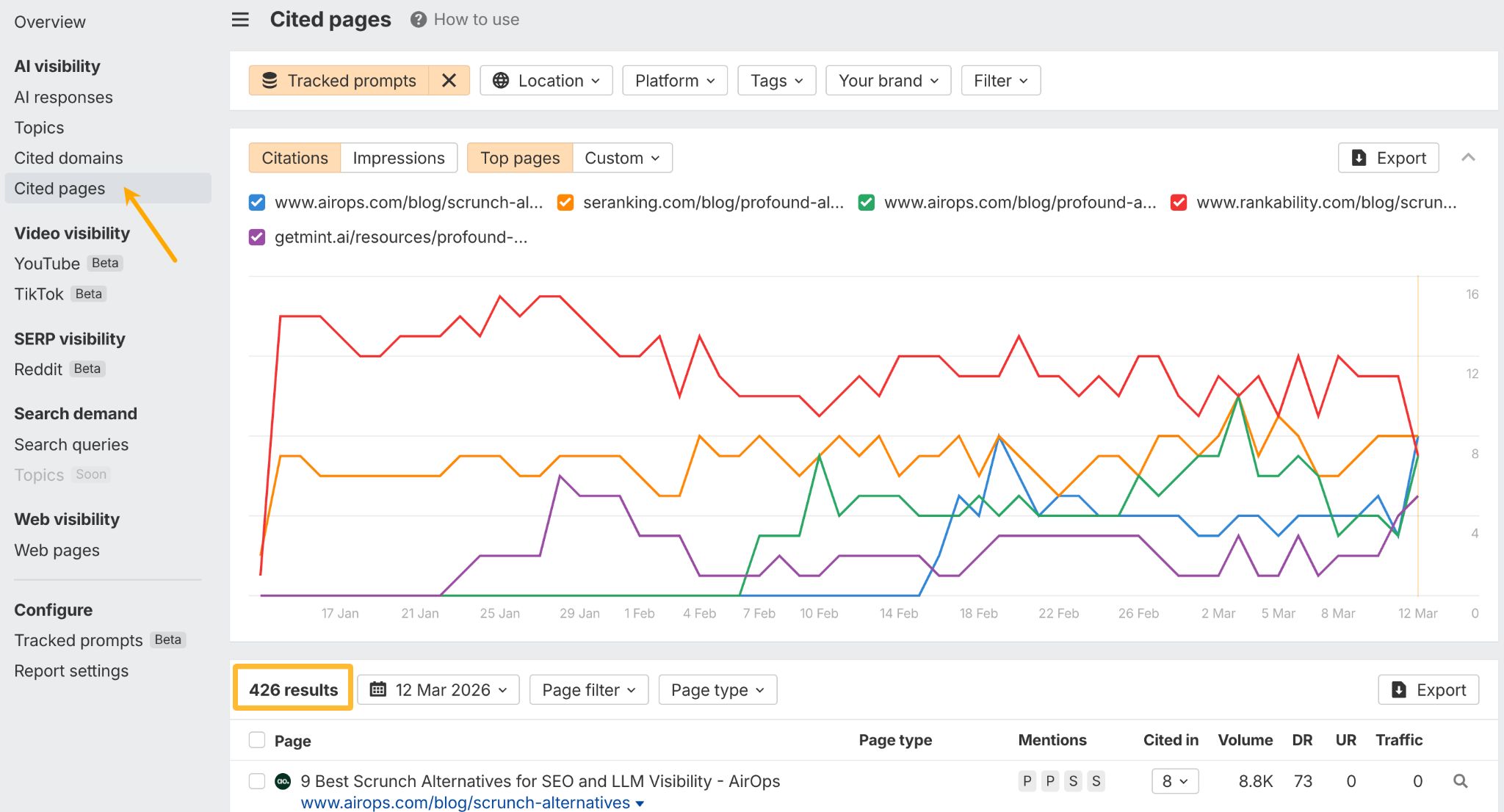

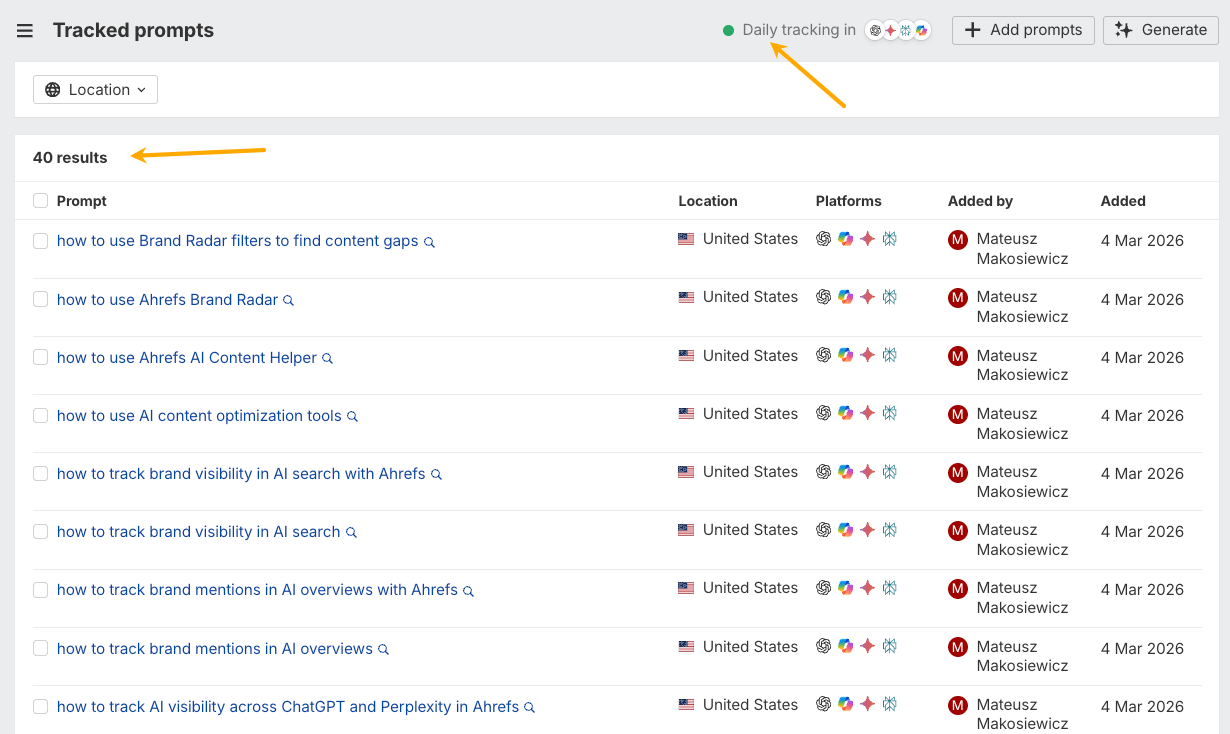

The solution for this strategic divergence is to embrace flexibility over convenience and to develop processes tailored to each content track. AI chatbots, due to their versatility, are deemed the most suitable tools. For searchable content, a thorough audit of product documentation and help content is essential. If an AI cannot answer basic product-related questions using the company’s own materials, it creates an opportunity for competitors or misinformation to fill that void. Tools like Ahrefs Brand Radar can help identify these gaps at scale by tracking AI responses to custom prompts.
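A first pass at such an audit can be done without any AI at all: list the basic questions a customer might ask and check whether any documentation page contains the terms needed to answer them. The sketch below uses hypothetical pages, questions, and keyword lists, and its plain substring matching is a deliberate simplification. It does not reflect how Brand Radar or any AI assistant evaluates documentation.

```python
# Hypothetical help-center pages and audit questions; replace with your own.
docs = {
    "pricing.md": "The Starter plan costs $29 per month. Annual billing is available.",
    "limits.md": "Each workspace can track up to 10 projects.",
}

# Each question lists terms that a page must contain to plausibly answer it.
questions = {
    "How much does the Starter plan cost?": ["starter", "costs"],
    "How many projects can I track?": ["track", "projects"],
    "Is there a free trial?": ["free trial"],
}


def audit(docs, questions):
    """Map each question to the pages containing all of its terms (case-insensitive)."""
    lowered = {name: text.lower() for name, text in docs.items()}
    return {
        q: [name for name, text in lowered.items() if all(t in text for t in terms)]
        for q, terms in questions.items()
    }


coverage = audit(docs, questions)
gaps = [q for q, pages in coverage.items() if not pages]
print(gaps)
```

Questions that land in `gaps` are the ones no page of the company's own material can answer, which is where secondary sources or fabricated answers are most likely to fill the void.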

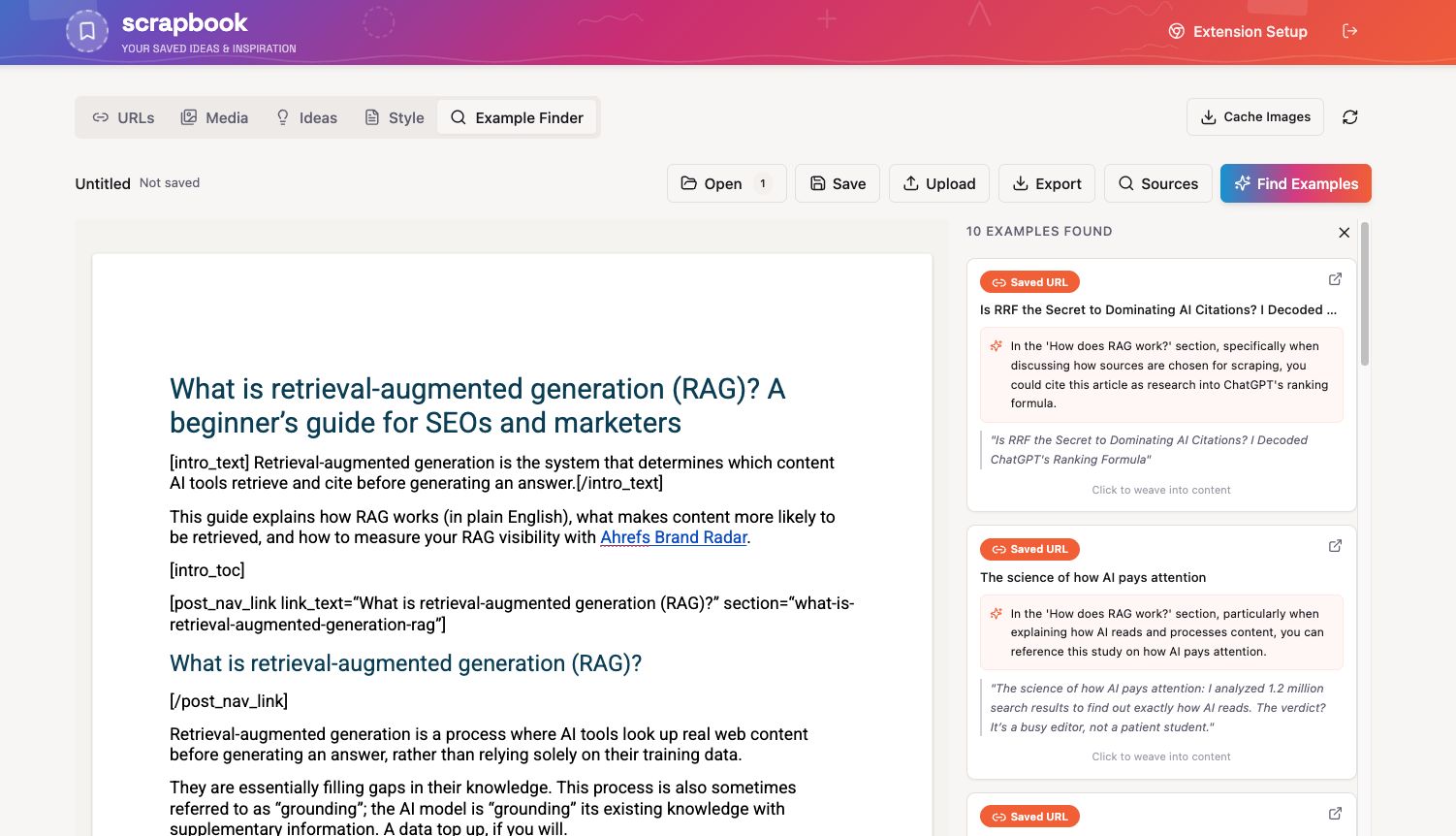

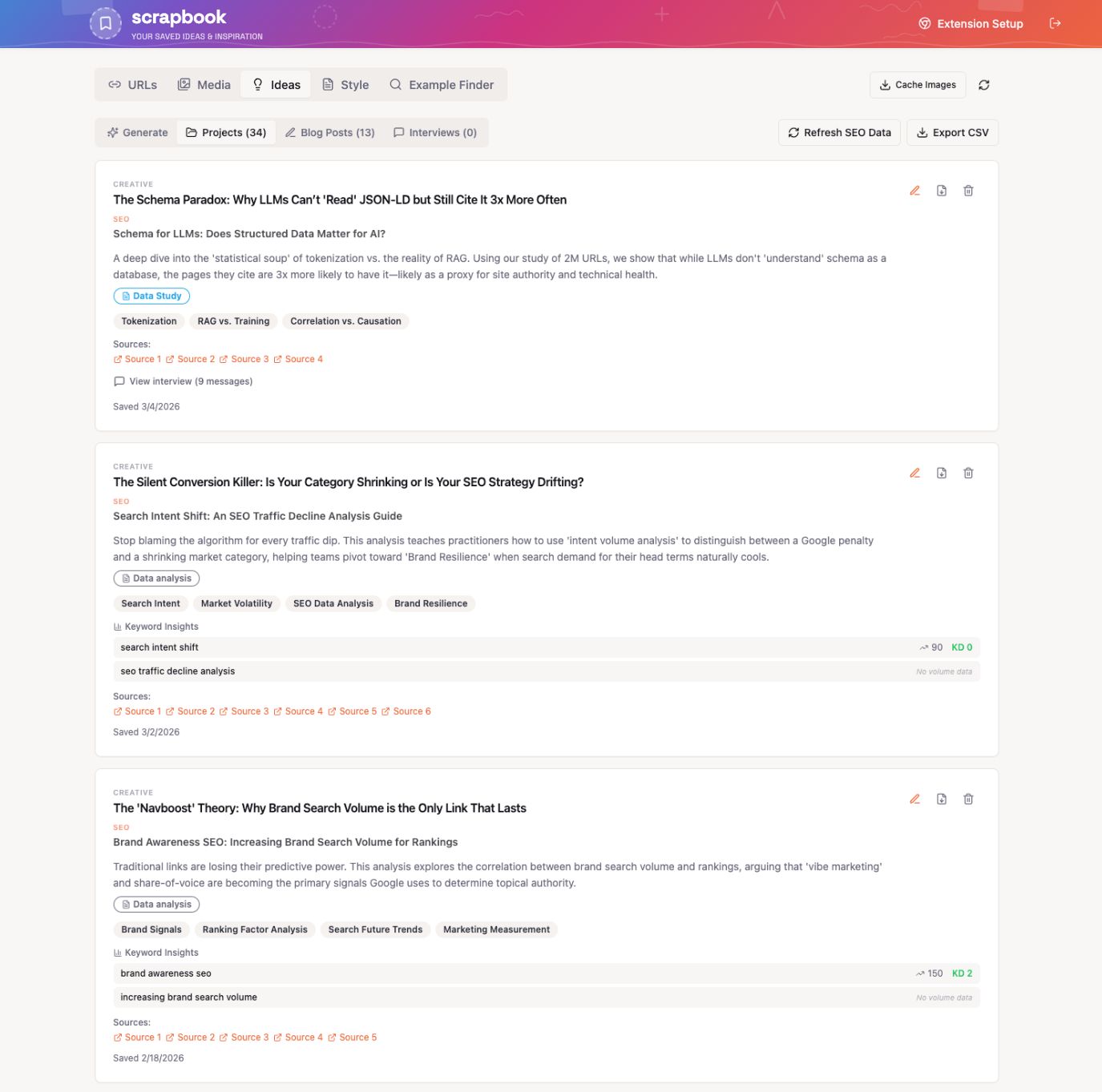

For shareable content, the focus should be on building an idea pipeline. This involves creating a "scrapbook" of ideas, facts, quotes, social posts, and other relevant material that can be readily accessed by AI. While tools like Notion or Evernote can be used, the author suggests developing custom tools that incorporate features like an "example finder" or content idea generation directly from the stored material. An AI agent set up to periodically scan platforms like LinkedIn and Reddit for trending topics can also help maintain awareness of emerging content trends. Tools like Firehose are presented as a way to stream the web in real time for specific topics, with API connectivity for integration with AI agents.
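An "example finder" over such a scrapbook could start as nothing more than keyword overlap between a query and the stored notes. The `Note` structure, the sample entries, and the scoring rule below are assumptions made for illustration; the author's custom tooling is not described in enough detail to reproduce.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Note:
    """One scrapbook entry; the fields are illustrative, not a prescribed schema."""
    text: str
    source: str
    tags: set = field(default_factory=set)


def find_examples(notes, query, limit=3):
    """Rank notes by how many query words appear in their text or tags."""
    words = set(re.findall(r"[a-z0-9']+", query.lower()))
    scored = []
    for note in notes:
        haystack = set(re.findall(r"[a-z0-9']+", note.text.lower()))
        haystack |= {tag.lower() for tag in note.tags}
        score = len(words & haystack)
        if score:
            scored.append((score, note))
    # Sort on the score only; Python's sort is stable, so ties keep insertion order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [note for _, note in scored[:limit]]


# Hypothetical scrapbook entries.
notes = [
    Note("Competitor pricing pages often go stale within a quarter", "own observation", {"pricing", "research"}),
    Note("Readers shared the post because it described a failed experiment", "LinkedIn comment", {"shareable"}),
    Note("Support tickets spike after every pricing change", "support team", {"pricing"}),
]

hits = find_examples(notes, "pricing research")
print([n.source for n in hits])
```

In practice the retrieved notes would be handed to the AI as context for a draft, so the model works from collected material rather than from whatever currently ranks in search.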

In conclusion, the overarching message is to prioritize the quality and structure of the information fed to AI systems. Building comprehensive "source-of-truth" files and maintaining human oversight throughout the content creation process are paramount. The future of AI-assisted content creation, the author predicts, will favor individuals and teams who excel as knowledge curators, leveraging AI as a tool to enhance their judgment and access to better information, rather than relying on it as a complete writing solution.