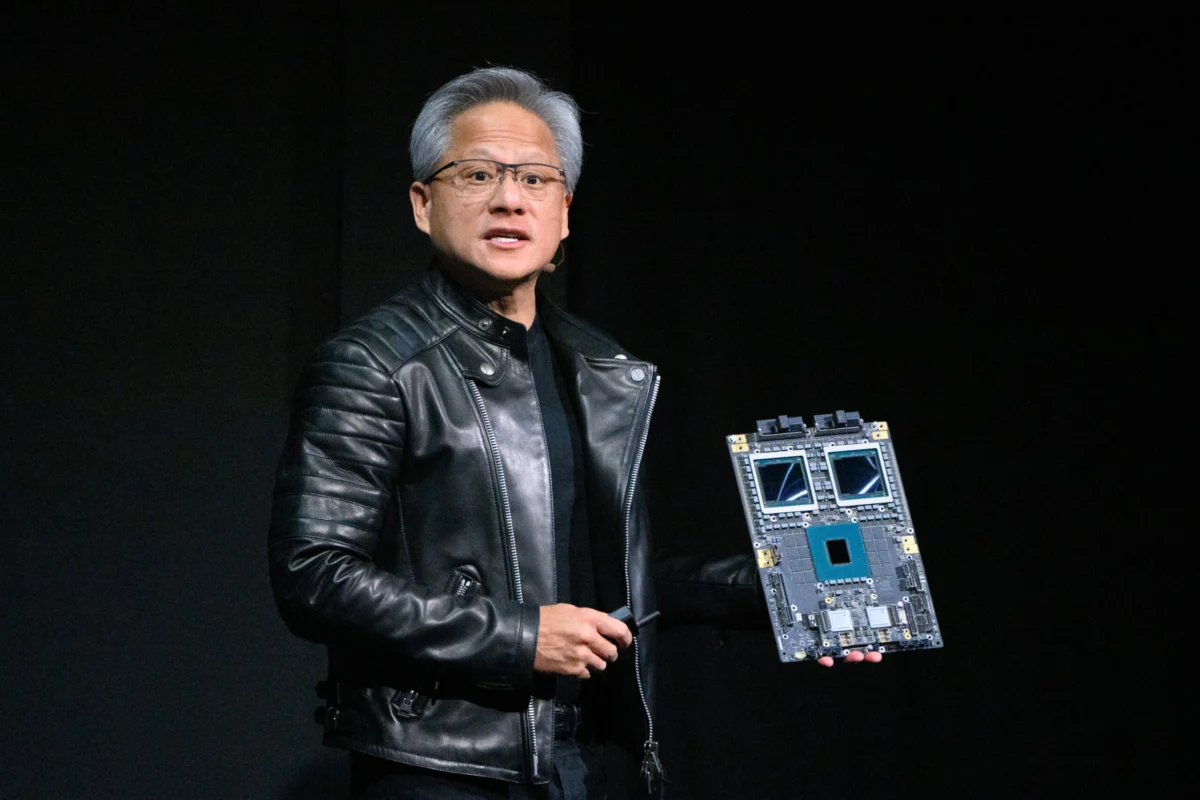

Nvidia CEO Jensen Huang has repeatedly anticipated market shifts years in advance, and that foresight has kept the company at the forefront of technological innovation. In 2010, Huang initiated efforts to develop AI-specific chips, more than a decade before the current artificial intelligence boom captured global attention, laying the groundwork for Nvidia’s dominance in AI computation. A similar but far less heralded move came in 2020, when Nvidia doubled down on data center networking through a significant acquisition. That bet has since grown into one of Nvidia’s most lucrative and fastest-growing divisions, contributing billions to its bottom line with surprisingly little fanfare.

In a short span, Nvidia’s networking business, which provides the infrastructure needed to connect data centers, has become the company’s second-largest revenue generator, trailing only its compute division. In the most recent quarter, it reported $11 billion in revenue, a year-over-year increase of 267%. For the full fiscal year 2026, networking contributed more than $31 billion, according to the company’s latest financial disclosures. These figures underscore the division’s central role in Nvidia’s overall growth and its deep ties to the AI economy.

The division’s growth is driven by escalating demand for AI processing. Its portfolio covers the components required for high-performance data transfer and processing within AI factories. Key technologies include NVLink, a high-speed interconnect that lets GPUs on a data center rack communicate with one another; Nvidia InfiniBand switches, an in-network computing platform built for performance and scalability; Spectrum-X, Nvidia’s Ethernet platform engineered for AI networking workloads; and co-packaged optics switches, among other infrastructure elements. Together, these products give Nvidia the full technology stack needed to build an "AI factory," a specialized data center designed and optimized for the intensive task of training complex artificial intelligence models.

Despite its financial contribution, the networking business often operates in the shadow of Nvidia’s more celebrated segments. Kevin Cook, a senior equity strategist at Zacks Investment Research, described the often-overlooked division to TechCrunch as "one of the most impressive new segments from the company." To convey its scale, Cook said, "[Nvidia’s networking business] reports $11 billion for the quarter; that number is greater than Cisco’s networking business, almost as big as the full-year estimates." By his comparison, Nvidia’s networking business brings in nearly as much in a single quarter as Cisco’s networking business is estimated to generate in a full year. Yet it does not draw the same public attention as the company’s significantly larger chip business or even its gaming division, which, though it generates only about a third as much revenue, remains Nvidia’s original "bread-and-butter" and a consistent source of public fascination.

Nvidia’s networking business traces its origins to Mellanox, an Israeli networking company founded in 1999. Nvidia acquired Mellanox in 2020 for $7 billion, outbidding rivals such as Intel in a fiercely contested deal. Kevin Deierling, now Senior Vice President of Networking at Nvidia, joined the company through the acquisition. Deierling concedes that the networking business’s relative anonymity compared with Nvidia’s other divisions could perhaps be attributed to a lack of effective marketing on his part, though he quickly dismisses that explanation as insufficient.

Deierling pointed instead to a common misconception about networking. "People think of networking as just, ‘I got a printer, and I need to connect to it,’" he explained. Huang saw it very differently. "Jensen said this the first day when he acquired us, he said the data center is the new unit of computing," Deierling recalled. "Networking is a lot more than just moving the smaller amounts of data between a compute node, it’s actually a foundation." In Huang’s view, in an era of AI and large-scale data processing, the network is not merely a conduit but the foundational architecture of modern computing.

Deierling initially struggled to understand the timing and rationale behind Huang’s decision to acquire Mellanox when he did, but he now sees the strategy clearly. Pairing a networking business with its leading GPU business lets Nvidia offer a complete solution in which its chips ship with networking technology designed specifically to maximize their performance. Customers receive an integrated system that performs well from day one rather than having to piece together disparate components. As Cook, the Zacks analyst, put it, "When Jensen bought Mellanox in 2020, he saw that was the missing piece to make GPUs a complete package." That complete package matters for the demanding requirements of AI training and inference at scale.

Deierling pointed to another factor in Nvidia networking’s success: its go-to-market approach. Nvidia chooses to sell its networking technology as a full-stack solution rather than as individual components, which ensures deep integration and optimized performance across the entire system. The company also does not sell its networking products directly to end users; instead, it relies on an extensive network of partners. This model lets Nvidia extend its reach and focus on innovation while partners handle implementation and customer support. "I can’t think of other companies that have [the] full-stack capabilities that we have," Deierling said. "We are really different. We build the full compute stack, fully integrated stack, and then we go to market through all of our partners." The integrated, partner-led strategy is meant to reduce friction for customers and help AI factories get deployed efficiently.

Nvidia continues to advance its networking technology. During Huang’s keynote address on March 16 at the company’s annual Nvidia GTC technology conference, the company unveiled a slew of updates to its networking system. Among the highlights was the Nvidia Rubin platform, which includes six new chips designed to power what the company calls an "AI supercomputer." Nvidia also announced the Nvidia Inference Context Memory Storage platform, designed to make AI inference workloads faster and more efficient, and more efficient Nvidia Spectrum-X Ethernet Photonics switches for AI networking, alongside other products. These announcements reinforce Nvidia’s commitment to maintaining its leadership in both compute and networking.

Deierling’s comments underscore how much networking’s role in modern computing has changed. "It’s no longer a peripheral to connect the printer, some other slow I/O device," he emphasized. Instead, networking has become "fundamental to the computer." Drawing a historical parallel, he explained, "In the old days, we had what was called the backplane inside the computer. Today, the network is the backplane of the AI factory, and it’s super important." Just as the backplane once tied together the components inside a single computer, the high-speed network now serves as the internal architecture of the distributed "AI factories" driving the current technological shift. Nvidia’s foresight in recognizing this shift and investing in comprehensive networking solutions has positioned it not just as a chipmaker, but as an architect of AI infrastructure.