Flash floods are among the deadliest weather hazards, killing more than 5,000 people a year and damaging communities worldwide. They are also among the hardest to predict. Because they are highly localized and short-lived, traditional weather monitoring infrastructure often fails to capture the data needed to forecast them. Meteorologists and sensor networks have amassed long records of slowly varying quantities such as temperature and river flow, but the rapid onset and confined geographic scope of flash floods leave significant gaps in the record. Until now, that lack of granular historical data has kept deep learning models, which depend on large datasets, from forecasting these sudden and destructive events accurately.

Google believes it has found a way around this problem: using artificial intelligence to read and learn from news reports written by people. The company’s researchers used Gemini, Google’s large language model (LLM), to sort through 5 million news articles from around the world and extract the ones reporting floods. Gemini isolated information on 2.6 million distinct flood events, which the team then converted into a geo-tagged time series, a structured dataset that assigns geographic coordinates and a timestamp to each reported flood. The dataset, named "Groundsource," is the first instance of Google using its language models for this kind of environmental data generation, according to Gila Loike, a Google Research product manager. Google released the research findings and the Groundsource dataset publicly on a recent Thursday morning.
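To make the idea of a geo-tagged time series concrete, here is a minimal Python sketch of how extracted flood reports could be binned by location and day. It is not Google’s pipeline: the sample records, the `CELL_DEG` grid size, and the function names `cell_of` and `build_time_series` are all assumptions made for illustration.

```python
import math
from collections import Counter
from datetime import date

# Hypothetical records standing in for what a language model might
# extract from news articles: a location and a date per flood report.
REPORTS = [
    {"lat": -25.97, "lon": 32.58, "day": date(2023, 2, 9)},
    {"lat": -25.96, "lon": 32.57, "day": date(2023, 2, 9)},
    {"lat": -25.97, "lon": 32.58, "day": date(2023, 2, 10)},
    {"lat": 28.61, "lon": 77.21, "day": date(2023, 7, 9)},
]

CELL_DEG = 0.05  # assumed grid spacing in degrees, chosen only for this example


def cell_of(lat, lon, cell_deg=CELL_DEG):
    """Map a coordinate to the integer index of the grid cell containing it."""
    return (math.floor(lat / cell_deg), math.floor(lon / cell_deg))


def build_time_series(reports, cell_deg=CELL_DEG):
    """Count reports per (grid cell, day) and return one sorted series per cell."""
    counts = Counter()
    for report in reports:
        cell = cell_of(report["lat"], report["lon"], cell_deg)
        counts[(cell, report["day"])] += 1

    series = {}
    for (cell, day), n in counts.items():
        series.setdefault(cell, []).append((day, n))
    for cell in series:
        series[cell].sort()  # chronological order within each cell
    return series


if __name__ == "__main__":
    for cell, points in build_time_series(REPORTS).items():
        print(cell, [(d.isoformat(), n) for d, n in points])
```

Two reports that fall in the same cell on the same day are merged into a single count, which is one simple way to keep several articles about the same flood from appearing as separate events.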

With Groundsource established as a robust, real-world baseline of historical flood events, Google’s researchers proceeded to develop and train a specialized forecasting model. This model is built upon a Long Short-Term Memory (LSTM) neural network, an architectural choice particularly well-suited for processing and making predictions based on sequential data, such as time-series information. The LSTM model is designed to ingest vast amounts of global weather forecasts – including precipitation, temperature, wind patterns, and atmospheric pressure – and then, by correlating this meteorological data with the historical patterns learned from Groundsource, it generates a probabilistic forecast of flash floods for any given area. This sophisticated system allows for the identification of regions at heightened risk, offering a crucial early warning capability.
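For readers unfamiliar with LSTMs, the sketch below shows in plain Python how such a network can turn a sequence of weather features into a single probability. It illustrates the general technique only, not Google’s model: the class `TinyLSTM`, its sizes, the random untrained weights, and the sample `FORECAST` values are all assumptions.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def dot(weights, values):
    return sum(w * v for w, v in zip(weights, values))


class TinyLSTM:
    """A single LSTM layer plus a sigmoid output, with random (untrained) weights."""

    def __init__(self, n_in, n_hidden, seed=0):
        rng = random.Random(seed)
        n_cat = n_in + n_hidden  # each gate sees the input and the previous hidden state
        self.n_hidden = n_hidden
        # One weight matrix and bias per gate:
        # i = input, f = forget, o = output, g = candidate cell values.
        self.W = {
            gate: [[rng.uniform(-0.5, 0.5) for _ in range(n_cat)] for _ in range(n_hidden)]
            for gate in "ifog"
        }
        self.b = {gate: [0.0] * n_hidden for gate in "ifog"}
        self.w_out = [rng.uniform(-0.5, 0.5) for _ in range(n_hidden)]
        self.b_out = 0.0

    def _gate(self, name, z, activation):
        return [activation(dot(w, z) + b) for w, b in zip(self.W[name], self.b[name])]

    def step(self, x, h, c):
        """Advance one time step and return the new hidden and cell states."""
        z = list(x) + list(h)
        i = self._gate("i", z, sigmoid)
        f = self._gate("f", z, sigmoid)
        o = self._gate("o", z, sigmoid)
        g = self._gate("g", z, math.tanh)
        c_new = [fj * cj + ij * gj for fj, cj, ij, gj in zip(f, c, i, g)]
        h_new = [oj * math.tanh(cj) for oj, cj in zip(o, c_new)]
        return h_new, c_new

    def flood_probability(self, sequence):
        """Run the whole sequence, then squash the final hidden state to (0, 1)."""
        h = [0.0] * self.n_hidden
        c = [0.0] * self.n_hidden
        for x in sequence:
            h, c = self.step(x, h, c)
        return sigmoid(dot(self.w_out, h) + self.b_out)


# Hypothetical, pre-normalized forecast steps:
# [precipitation, temperature, wind, pressure], one list per time step.
FORECAST = [
    [0.1, 0.6, 0.2, 0.5],
    [0.4, 0.6, 0.3, 0.4],
    [0.9, 0.5, 0.6, 0.3],
]

if __name__ == "__main__":
    model = TinyLSTM(n_in=4, n_hidden=3, seed=0)
    print(f"flood probability (untrained): {model.flood_probability(FORECAST):.3f}")
```

Because the weights here are random, the printed number carries no meaning. In a real system the weights would be fit against labeled history, such as the flood events recorded in Groundsource, so that the output tracks actual flood likelihood.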

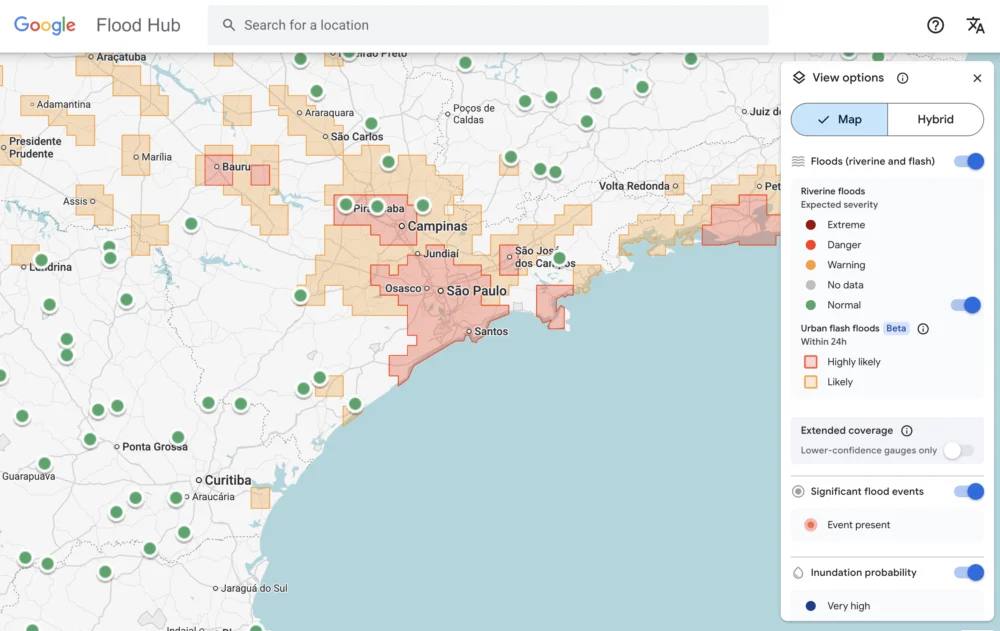

The model is already in use. It highlights potential risks for urban areas in 150 countries through Google’s Flood Hub platform, which publishes flood alerts along with maps and probability scores showing how likely a flood is. Google is also sharing its data with emergency response agencies and humanitarian organizations around the world. One of them is the Southern African Development Community (SADC), whose emergency response official, António José Beleza, took part in trialing the forecasting model. Beleza said the system improved his organization’s capacity to respond more swiftly and effectively to floods, allowing better allocation of resources and earlier evacuations and preparedness measures. For flash floods, even a few hours’ notice can make a profound difference in regions that are hit repeatedly.

The model still has limitations. Chief among them is its relatively low spatial resolution: it currently identifies risk across areas of approximately 20 square kilometers. That scale is useful for regional warnings, but flash floods can be hyper-local, so a severe event confined to a much smaller area may be missed or its impact area generalized. The model is also less precise than highly localized systems such as the US National Weather Service’s flood alert system. A key reason is that Google’s model cannot yet incorporate real-time local radar data, which tracks precipitation intensity, movement, and accumulation minute by minute. Radar data is critical for pinpointing where and when heavy rain is falling, which is what makes accurate short-term flood warnings possible.

However, these perceived limitations must be understood within the broader context of the project’s foundational design philosophy. A core objective of Google’s initiative was to create a forecasting solution specifically tailored to benefit regions that are most vulnerable but least equipped. Many local governments and communities around the world simply cannot afford the substantial investment required for expensive weather-sensing infrastructure, such as advanced Doppler radar networks, or do not possess extensive, long-term records of meteorological data crucial for traditional forecasting methods. This is where Groundsource’s unique strength truly shines. Juliet Rothenberg, a program manager on Google’s Resilience team, articulated this point compellingly to reporters at a recent briefing, stating, "Because we’re aggregating millions of reports, the Groundsource data set actually helps rebalance the map. It enables us to extrapolate to other regions where there isn’t as much information." This means the model can provide critical warnings in data-sparse areas by inferring patterns from a global collection of human observations, effectively democratizing access to crucial flood forecasting capabilities.

Looking ahead, the potential applications of this methodology extend far beyond flash floods. Rothenberg expressed the team’s optimism that leveraging large language models to transform qualitative, written sources into quantitative data sets could be applied to develop predictive models for other ephemeral yet critical phenomena. This includes natural hazards such as heat waves, which are becoming increasingly frequent and severe due to climate change, and mudslides, which often follow intense rainfall in vulnerable terrains. The ability to glean structured, actionable data from unstructured text offers a powerful new paradigm for environmental monitoring and disaster preparedness.

This innovative approach by Google is also part of a larger, burgeoning effort within the scientific and technological communities to enhance weather forecasting through advanced machine learning. Marshall Moutenot, the CEO of Upstream Tech, a company that utilizes similar deep learning models to forecast river flows for clients like hydropower companies, underscored the significance of Google’s contribution. Moutenot, who also co-founded dynamical.org – a collaborative group dedicated to curating machine learning-ready weather data for researchers and startups – highlighted the dual challenge faced in geophysics: "Data scarcity is one of the most difficult challenges in geophysics. Simultaneously, there’s too much Earth data, and then when you want to evaluate against truth, there’s not enough. This was a really creative approach to get that data." His comments emphasize the paradox of having vast amounts of raw environmental information but lacking the structured, verified datasets necessary for training robust AI models. Google’s Groundsource tackles this paradox head-on, transforming anecdotal evidence into actionable intelligence.

The author of this report, Tim Fernholz, is a seasoned journalist with extensive experience covering technology, finance, and public policy. His notable contributions include a decade as a senior reporter at Quartz, a global business news site, and his earlier career as a political reporter in Washington, D.C. Fernholz is also the author of the acclaimed book, Rocket Billionaires: Elon Musk, Jeff Bezos and the New Space Race, which delves into the rise of the private space industry. Readers seeking to contact or verify outreach from Tim Fernholz can do so by emailing [email protected] or by sending an encrypted message to tim_fernholz.21 on Signal. Further details about his professional background and published works are available through his complete bio.