As artificial intelligence spreads rapidly through corporate workflows, enterprise leaders face a critical challenge: they cannot accurately quantify the return on investment (ROI) of these multimillion-dollar deployments. While traditional business investments often follow a linear path from cost to value, Atlassian’s Teamwork Lab has released new research indicating that AI value is inherently compounding. To address the current measurement gap, the organization has unveiled the "Enterprise AI ROI Value Framework," a strategic model designed to help organizations move beyond simple time-saved estimates and capture the full spectrum of AI-driven impact.

The current state of AI measurement in the corporate world is often described as a scramble. When boards of directors and executive committees demand hard figures, many leaders find themselves relying on "productivity anecdotes" or narrow metrics that fail to reflect the systemic changes occurring within their teams. A Chief AI Officer overseeing more than 1,000 engineers recently summarized the sentiment of the industry, stating that their number-one problem remains metrics. The executive noted that while the potential is clear, the board requires a granular understanding of what happens "when the rubber meets the road."

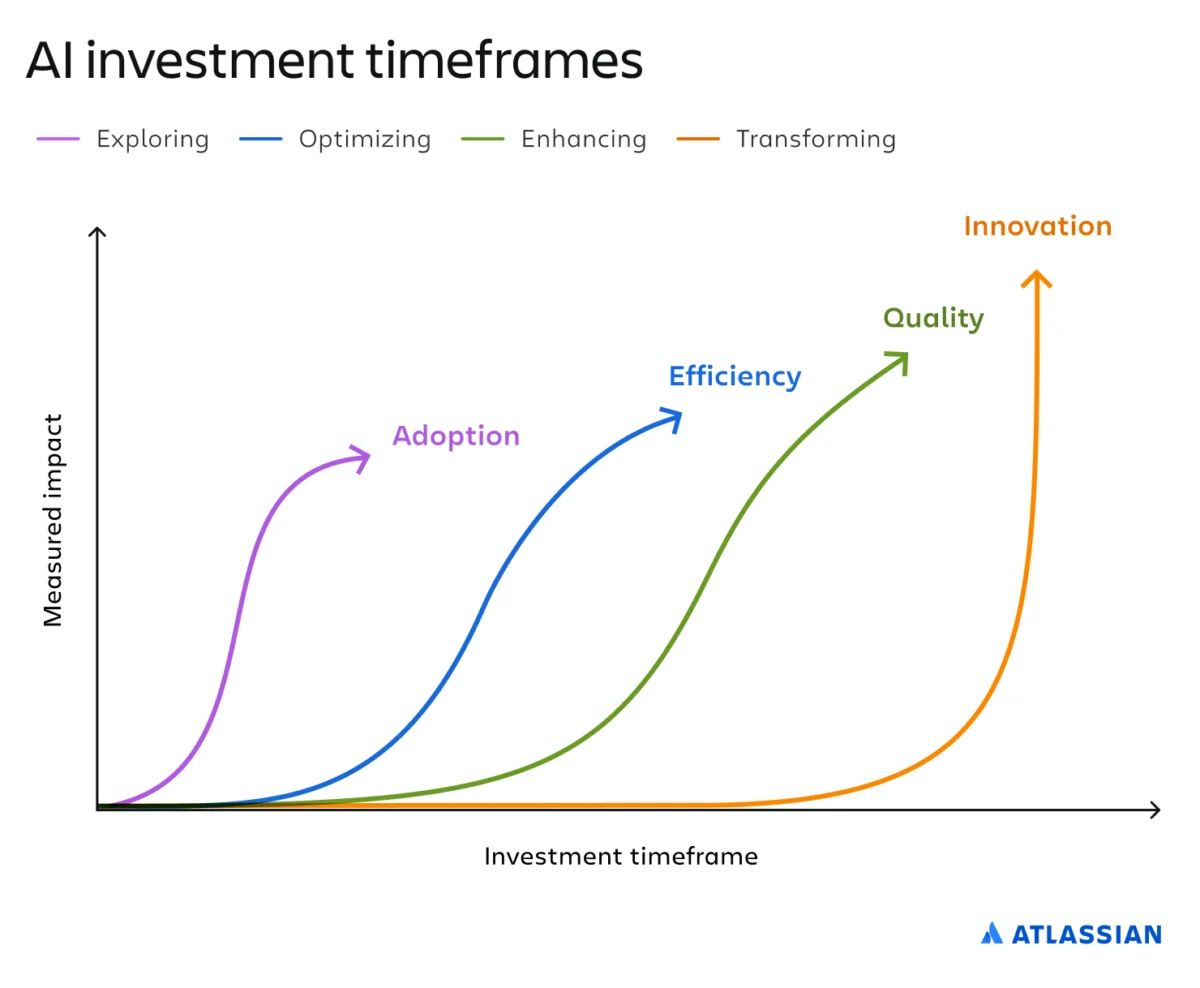

Atlassian’s research suggests that the primary error in current ROI calculations is the assumption of a clean, linear cause-and-effect relationship. In reality, AI value does not arrive as a single, explosive event. Instead, it matures through a predictable lifecycle that flows from the individual to the team, and finally to the entire organization. While a lone "superuser" may generate significant personal gains, the true enterprise-scale impact is only realized when teams align around AI-first methodologies. This compounding effect is the cornerstone of the new four-stage maturity framework: Exploring, Optimizing, Enhancing, and Transforming.

The first stage of the ladder, Exploring, is where the vast majority of modern organizations currently reside. In this phase, teams are experimenting with various tools, testing pilot programs, and identifying potential use cases. Atlassian characterizes this as a critical investment phase focused on seeding skills and building cultural comfort with the technology. However, this stage is frequently fraught with frustration. Progress is often uneven, and many pilots follow a "two steps forward, one step back" pattern.

In the Exploring phase, the framework advises leaders to resist the urge to demand immediate financial returns. Some executives dismiss this early stage as "AI tourism," but the Teamwork Lab argues that exploration is a prerequisite for all future gains. The primary goal here is to determine if the workforce is utilizing AI enough—and in the right ways—to generate actionable data. Key metrics for this stage include the percentage of active users (Weekly Active Users/Monthly Active Users), qualitative sentiment analysis regarding tool helpfulness, and the growth of shared internal resources such as prompt libraries. Organizations that under-invest in this foundational phase often find themselves unable to progress to more sophisticated stages of efficiency or innovation.
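The adoption metrics named for this stage can be computed from a simple usage log. The following is a minimal sketch, not part of Atlassian's framework: the event format, the `licensed_users` denominator, the 7-day and 30-day windows, and the function names are all assumptions made for illustration.

```python
from datetime import date, timedelta


def active_users(events, as_of, window_days):
    """Return the set of user ids with at least one event in the window ending on as_of."""
    start = as_of - timedelta(days=window_days - 1)
    return {user for user, day in events if start <= day <= as_of}


def adoption_metrics(events, licensed_users, as_of):
    """Compute weekly and monthly active-user percentages (hypothetical definitions)."""
    wau = active_users(events, as_of, 7)    # assumed 7-day window
    mau = active_users(events, as_of, 30)   # assumed 30-day window
    return {
        "wau_pct": 100 * len(wau) / licensed_users,
        "mau_pct": 100 * len(mau) / licensed_users,
        # Share of monthly users who also came back this week.
        "wau_mau_ratio": len(wau) / len(mau) if mau else 0.0,
    }


# Hypothetical usage log: (user id, day the AI tool was used).
events = [
    ("ana", date(2025, 3, 30)),
    ("ben", date(2025, 3, 28)),
    ("ana", date(2025, 3, 10)),
    ("cy", date(2025, 3, 5)),
    ("dee", date(2025, 2, 1)),
]

metrics = adoption_metrics(events, licensed_users=10, as_of=date(2025, 3, 31))
print(metrics["wau_pct"], metrics["mau_pct"])  # 20.0 30.0
```

A weekly-to-monthly ratio that stays low would suggest people try the tools once and drift away, which is the kind of signal this stage is meant to surface before any financial target is set.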

The second stage, Optimizing, marks the transition from experimentation to operational integration. At this point, teams have moved past "playing" with AI and have instead developed a collection of high-value, AI-first workflows. The focus shifts from adoption to measurable efficiency. AI is no longer a novelty; it is a tool tied directly to delivering faster outcomes. In this stage, organizations begin to see a measurable reduction in cycle times and a significant decrease in the cost per task.

During the Optimizing phase, the framework suggests tracking metrics such as the volume of work completed by AI-enabled teams compared to non-enabled teams, the reduction in time spent on manual "to-do" tasks, and overall operational throughput. However, Atlassian issues a stern warning for organizations at this level. Many leaders mistakenly believe that the AI journey ends at productivity. An organization that stops at the Optimizing stage risks creating "work slop": faster output accompanied by lower standards, which produces more errors, more noise, and low-quality content that moves faster than human review systems can handle.
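The comparison between AI-enabled and non-enabled teams comes down to a percent change against a baseline. The sketch below is illustrative only: the weekly task counts, the cycle times, and the `percent_change` helper are invented for the example and do not come from the research.

```python
from statistics import mean


def percent_change(baseline, current):
    """Percent change of current relative to baseline (negative means a reduction)."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return 100 * (current - baseline) / baseline


# Hypothetical tasks completed per week over three weeks.
enabled_tasks_per_week = [42, 45, 48]   # AI-enabled team
control_tasks_per_week = [36, 35, 37]   # comparable non-enabled team

throughput_lift = percent_change(
    mean(control_tasks_per_week), mean(enabled_tasks_per_week)
)

# Hypothetical average cycle time in days, before and after adopting AI-first workflows.
cycle_time_change = percent_change(baseline=5.0, current=4.0)

print(throughput_lift)    # 25.0
print(cycle_time_change)  # -20.0
```

Numbers like these only describe speed. Given the "work slop" warning, they are best read next to a quality measure rather than on their own.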

To combat the risks of the Optimizing stage, organizations must move to the third rung: Enhancing. In this stage, the objective shifts from speed to quality. AI is no longer just drafting or ideating; it is being used to assist in governance, review, and complex decision-making. The goal is to raise the bar on accuracy and consistency across the board. Signs that an organization has reached the Enhancing phase include the use of AI for automated "pre-flight" checks on work, the integration of AI in compliance and risk management, and the use of the technology to synthesize vast amounts of internal data for better strategic planning.

The metrics for the Enhancing phase are more sophisticated and are often tied to the Key Performance Indicators (KPIs) the business already prioritizes. These include a measurable reduction in error rates, improvements in Net Promoter Scores (NPS) or customer satisfaction, and closer adherence to internal quality standards. Ben Ostrowski, a researcher at Atlassian’s Teamwork Lab, notes that once adoption and efficiency are mastered, the new constraint becomes trust. To succeed in this phase, leaders must isolate exactly where AI-enabled changes are improving the quality of the final product.
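As one way to make "a measurable reduction in error rates" concrete, the sketch below compares the error rate of reviewed work before and after an AI-assisted check is introduced. The counts and function names are hypothetical, and a real comparison would need comparable samples of work on both sides.

```python
def error_rate(errors, items_reviewed):
    """Fraction of reviewed items that contained an error."""
    if items_reviewed <= 0:
        raise ValueError("items_reviewed must be positive")
    return errors / items_reviewed


def relative_reduction(before, after):
    """Relative drop from the 'before' rate to the 'after' rate."""
    if before == 0:
        raise ValueError("before rate must be non-zero")
    return (before - after) / before


# Hypothetical review samples of 600 items each.
rate_before = error_rate(errors=30, items_reviewed=600)  # without AI pre-flight checks
rate_after = error_rate(errors=18, items_reviewed=600)   # with AI pre-flight checks

reduction = relative_reduction(rate_before, rate_after)
print(f"{reduction:.0%}")  # 40%
```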

The final and most elusive stage of the framework is Transforming. This represents the end goal of the AI journey—a stage that few companies have reached in the current market. In the Transforming phase, AI is no longer an additive tool; it is woven into the very fabric of the business strategy. At this level, AI fuels entirely new features, products, and revenue streams that were previously impossible. The metrics for this stage align with traditional ROI frames because they reflect real business outcomes rather than just operational efficiency.

For organizations in the Transforming phase, ROI is measured through the lens of innovation. Key metrics include the percentage of revenue generated by AI-powered products, the acceleration of R&D cycles for new market entries, and the capture of new market share. At this stage, AI ROI looks like any other large-scale innovation bet, where the impact is visible on the company’s bottom line and competitive positioning.

The Atlassian framework serves as a reminder that measuring AI is not a guessing game but a structured climb. By identifying which rung of the ladder an organization occupies, leaders can set realistic expectations for their stakeholders. Forcing a "new revenue" metric on a team that is still in the Exploring phase is counterproductive, just as dismissing breakthrough innovation as a mere "productivity gain" undersells the technology’s potential.
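The idea of matching metrics to maturity can be expressed as a small lookup. This is a rough sketch under stated assumptions: the stage names and the metrics come from the framework as described above, while the metric identifiers, the `Stage` enum, and the `premature_metrics` helper are hypothetical names chosen for the example.

```python
from enum import Enum


class Stage(Enum):
    EXPLORING = 1
    OPTIMIZING = 2
    ENHANCING = 3
    TRANSFORMING = 4


# Metrics the article associates with each stage (identifiers are illustrative).
STAGE_METRICS = {
    Stage.EXPLORING: [
        "active_user_pct", "tool_sentiment", "shared_prompt_library_growth",
    ],
    Stage.OPTIMIZING: [
        "work_volume_vs_non_enabled", "manual_task_time_reduction",
        "operational_throughput",
    ],
    Stage.ENHANCING: [
        "error_rate_reduction", "nps_or_csat_improvement",
        "quality_standard_adherence",
    ],
    Stage.TRANSFORMING: [
        "ai_product_revenue_pct", "rd_cycle_acceleration", "new_market_share",
    ],
}


def premature_metrics(current_stage, requested):
    """Return the requested metrics that belong to a later stage than current_stage."""
    later = {
        metric
        for stage, metrics in STAGE_METRICS.items()
        if stage.value > current_stage.value
        for metric in metrics
    }
    return [metric for metric in requested if metric in later]


flagged = premature_metrics(
    Stage.EXPLORING, ["active_user_pct", "ai_product_revenue_pct"]
)
print(flagged)  # ['ai_product_revenue_pct']
```

Here a team still in the Exploring phase is asked for a revenue metric, and the helper flags it as belonging to a later rung.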

In conclusion, the Enterprise AI ROI Value Framework provides a roadmap for navigating the complexities of modern technological integration. It acknowledges that while the math of AI may be non-linear, the path to value is predictable. As organizations move from individual usage to collective alignment, the compounding nature of AI begins to manifest. For leaders ready to put this framework to work, the first step is an honest assessment of their current maturity level, followed by the implementation of stage-appropriate metrics that move the conversation away from anecdotes and toward a rigorous, data-driven understanding of AI’s true value. By treating AI ROI as a ladder of adoption, efficiency, quality, and innovation, enterprises can finally provide the clarity that boards and executives have been demanding since the start of the AI revolution.