An engineering team operating on the Google Cloud Platform (GCP) recently used the Atlassian Rovo Dev CLI to resolve a critical infrastructure bottleneck, cutting a multi-day diagnostic process down to a single hour. The incident, which centered on persistent 404 errors while internal services were being onboarded to self-hosted OpenSearch clusters, had already consumed nine engineer-days of manual effort across five specialists without reaching a resolution.

The technical challenge began when two service teams attempted to integrate their applications into a GCP-based environment. While the OpenSearch clusters, provisioned via internal automation, appeared to be functioning correctly, the services attempting to connect to them were met with "backend NotFound" and "default backend – 404" errors. The issue was particularly elusive because the calls worked consistently when executed from local machines or within a canary environment with an identical configuration. This inconsistency led the engineering team to investigate several potential culprits, including network configurations, Service Proxies, Istio service mesh settings, and the application code itself.

For three days, a cohort of five engineers scrutinized the system architecture. Initial suspicions focused heavily on the Service Proxy component, leading the team to involve specialists from that department. However, internal friction arose when the Service Proxy team maintained that their component was functioning correctly, suggesting instead that the GKE (Google Kubernetes Engine) Ingress was the likely source of the failure. Despite these hypotheses, the team lacked the specific diagnostic data to confirm the root cause, as logging was not enabled on the relevant Google Cloud Load Balancer (GCLB) paths.

The breakthrough occurred when a lead engineer, who self-identified as having extensive experience with Amazon Web Services (AWS) but "zero" prior knowledge of GCP, utilized the Rovo Dev CLI to approach the problem. The diagnostic workflow began by granting the AI-powered tool read-only access to the GCP environment via the gcloud CLI and read-write access to the staging Kubernetes cluster. To ensure the AI’s suggestions were relevant to the specific organizational context, the engineer "grounded" the tool by pointing it toward the relevant Jira tickets and Confluence pages describing the system’s unique architecture and OpenSearch cluster configurations.

By treating the CLI as a collaborative partner, the engineer prompted the tool to investigate whether the Ingress configuration could explain the "default backend" errors. The Rovo Dev CLI analyzed the symptoms and the Service Proxy team’s earlier suggestions, agreed that the Ingress was a likely cause, and then identified a critical visibility gap: there were no logs for the load balancer. Rather than searching the documentation to learn how to enable load balancer logging for GKE, the engineer instructed the CLI to enable it directly. Within minutes, the team was tailing live traffic logs.

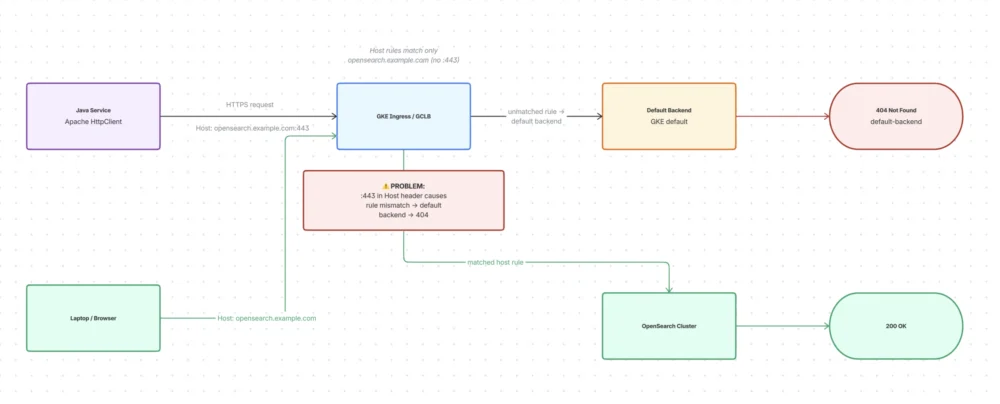

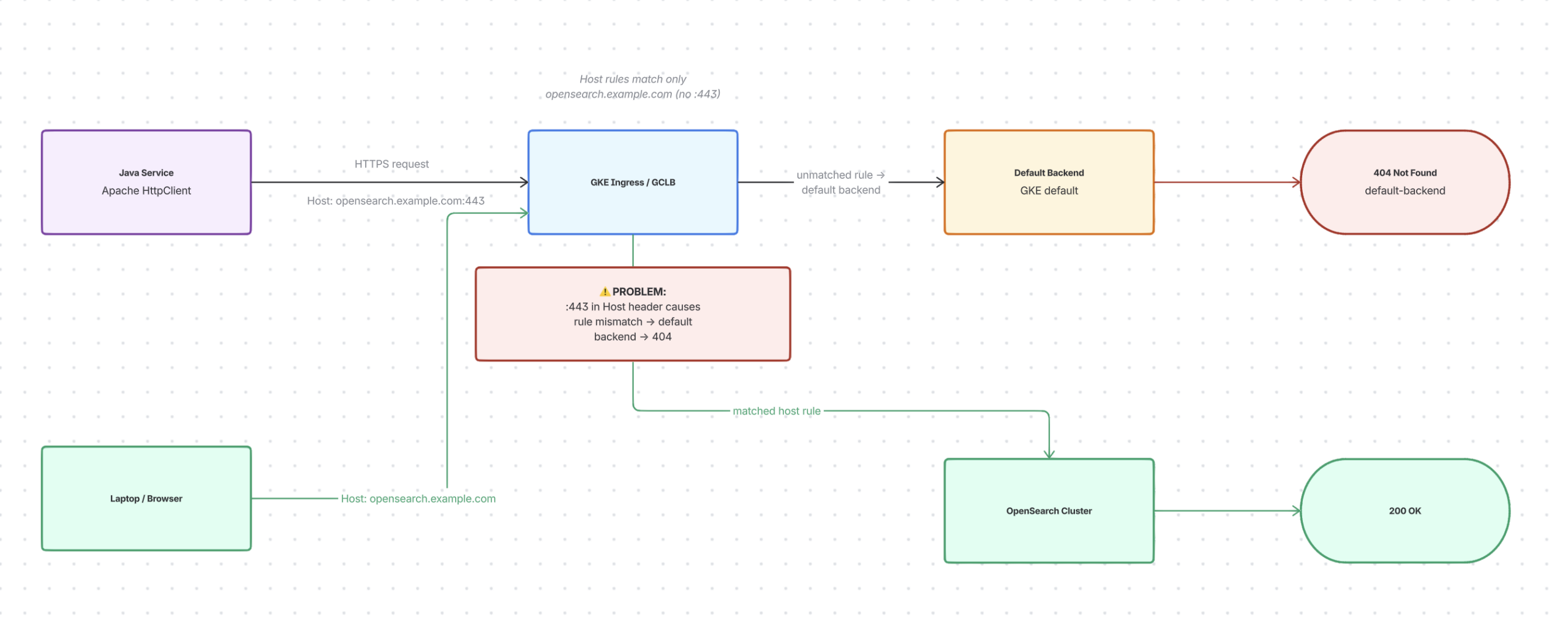

The log analysis revealed a subtle but decisive difference in how clients called the system. Requests from the engineer’s laptop and from web browsers, which succeeded, sent a Host header containing only the domain name. The Java-based services using Apache HttpClient, however, appended a port suffix (":443") to the Host header. The Rovo Dev CLI determined that the GKE Ingress host rules matched only the bare domain name. Because the rules did not account for the port suffix, the GCLB could not match these requests to the OpenSearch host rules and routed them to the default backend instead, which returned a 404 error.
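The routing failure can be illustrated with a simplified model. This is a minimal sketch, assuming exact string comparison between the Host header and each host rule; it mirrors the behavior described above and is not the load balancer's actual implementation. The domain `search.example.com`, the class `HostRuleMatch`, and the `route` helper are hypothetical.

```java
import java.util.Map;

/**
 * Simplified, hypothetical model of host-rule routing: the Host header is
 * compared to each rule's host as an exact string, and anything unmatched
 * goes to the default backend. Not GCLB's real matching code.
 */
public class HostRuleMatch {

    // Backend used when no host rule matches and no explicit default is set;
    // in the incident, this was the generic backend that answered 404.
    static final String GENERIC_DEFAULT = "default-http-backend (404)";

    // Returns the backend chosen for a given Host header value.
    static String route(Map<String, String> hostRules, String hostHeader, String defaultBackend) {
        // Exact match only: "search.example.com:443" is a different string
        // from "search.example.com", so it does not hit the rule.
        return hostRules.getOrDefault(hostHeader, defaultBackend);
    }

    public static void main(String[] args) {
        // Hypothetical domain and backend, for illustration only.
        Map<String, String> rules = Map.of("search.example.com", "opensearch:8443");

        // Browser/laptop style: bare domain in the Host header.
        System.out.println(route(rules, "search.example.com", GENERIC_DEFAULT));
        // Java client style: port suffix appended to the Host header.
        System.out.println(route(rules, "search.example.com:443", GENERIC_DEFAULT));
    }
}
```

Passing `"opensearch:8443"` as the `defaultBackend` argument instead of `GENERIC_DEFAULT` shows why pointing the default backend at the intended service makes the port-suffixed request land in the right place.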

To validate this finding before applying a production-wide fix, the engineer used the CLI to generate a parameterized Java program that let the team toggle headers and host formats, and it reproduced the 404 error in a controlled environment. Once the cause was verified, the CLI suggested a mitigation: explicitly defining a default backend in the Ingress YAML configuration and annotating the Ingress so that any request with an unmatched host would be routed to the OpenSearch service rather than to the generic 404 backend.
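The team's actual reproduction program is not shown in the write-up, so the following is only a minimal stand-in under stated assumptions. It sends one HTTPS request over a raw TLS socket so the Host header can be set freely (the JDK's `java.net.http.HttpClient` treats `Host` as a restricted header), and it takes the domain, path, and Host format as arguments. The class name `HostHeaderRepro` and its argument layout are hypothetical.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.io.OutputStream;
import java.nio.charset.StandardCharsets;
import javax.net.ssl.SSLParameters;
import javax.net.ssl.SSLSocket;
import javax.net.ssl.SSLSocketFactory;

/**
 * Hypothetical reproduction client: sends one HTTPS GET with a chosen
 * Host header format and prints the response status line.
 * Usage: java HostHeaderRepro <domain> <path> <bare|port>
 */
public class HostHeaderRepro {

    // Builds the Host header value; "port" mode mimics clients that append ":443".
    static String hostHeader(String domain, boolean withPort) {
        return withPort ? domain + ":443" : domain;
    }

    // Builds a minimal HTTP/1.1 GET request carrying the given Host header.
    static String buildRequest(String path, String host) {
        return "GET " + path + " HTTP/1.1\r\n"
                + "Host: " + host + "\r\n"
                + "Connection: close\r\n"
                + "\r\n";
    }

    // Opens a TLS connection to domain:443, sends the request, returns the status line.
    static String send(String domain, String request) throws IOException {
        SSLSocketFactory factory = (SSLSocketFactory) SSLSocketFactory.getDefault();
        try (SSLSocket socket = (SSLSocket) factory.createSocket(domain, 443)) {
            // Verify the server certificate matches the domain (set before the handshake).
            SSLParameters params = socket.getSSLParameters();
            params.setEndpointIdentificationAlgorithm("HTTPS");
            socket.setSSLParameters(params);

            OutputStream out = socket.getOutputStream();
            out.write(request.getBytes(StandardCharsets.US_ASCII));
            out.flush();

            BufferedReader in = new BufferedReader(
                    new InputStreamReader(socket.getInputStream(), StandardCharsets.US_ASCII));
            return in.readLine(); // first line of the response, e.g. the status line
        }
    }

    public static void main(String[] args) throws IOException {
        if (args.length != 3) {
            System.err.println("usage: java HostHeaderRepro <domain> <path> <bare|port>");
            return;
        }
        String host = hostHeader(args[0], "port".equals(args[2]));
        String status = send(args[0], buildRequest(args[1], host));
        System.out.println("Host: " + host + " -> " + status);
    }
}
```

Running it twice against the same domain and path, once with `bare` and once with `port`, leaves the Host header as the only variable, which is what makes a difference in status codes attributable to host-rule matching.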

The implementation of the fix involved a simple modification to the Kubernetes manifest:

```yaml
metadata:
  annotations:
    # Annotation as given in the original fix; annotations belong under
    # metadata, not under spec.
    ingress.gke.io/default-backend: "opensearch:8443"
spec:
  # Requests whose Host header matches no host rule (for example
  # "<domain>:443") are sent here instead of the generic 404 backend.
  defaultBackend:
    service:
      name: opensearch
      port:
        number: 8443
```

This configuration ensures that if a request fails to match an explicit host rule, whether because of the Apache HttpClient port suffix or some other header variation, it is routed to the intended service rather than to a generic error page. The fix was first applied in a single namespace, where the Rovo Dev CLI monitored the rollout in real time. The logs immediately showed that previously failing paths had turned "green" and that requests with port-suffixed Host headers were being routed correctly.

The efficiency gains reported by the team were substantial. The manual investigation had consumed approximately nine engineer-days of collective time, whereas the AI-assisted workflow reached a verified resolution in approximately one hour. The process also produced better documentation than the manual investigation had: the Rovo Dev CLI used its integrated Confluence tools to generate a summary of the incident and a detailed rollout plan. It also used a web search Model Context Protocol (MCP) server to verify that the chosen fix was a recognized industry best practice for handling unmatched rules in GKE Ingress.

This incident highlights a shift in how engineering teams manage complex cloud environments. The engineer noted that the AI acted as a "workflow partner" that compensated for a lack of platform-specific knowledge. By automating the "boring parts" of the job—such as looking up GCP-specific command syntax, enabling logging, and generating reproduction scripts—the tool allowed the human engineer to focus on higher-level strategy and observation.

The use of Rovo Dev CLI also addressed the common problem of "hero moves," where a single engineer solves a problem but the knowledge remains siloed. Because the entire diagnostic conversation and the resulting artifacts were captured and integrated into the team’s existing Atlassian suite (Jira and Confluence), the solution became a repeatable asset rather than a one-off fix. The team now has a verifiable test client and a documented history of why the Ingress rules were modified, which can be referenced during future onboarding exercises.

This case study is part of a broader exploration of how AI tools like Rovo Dev and Cursor are being integrated into developer workflows. That exploration progresses from "the plumbing" (wiring tools to internal data) to "real leverage," where AI provides a measurable return on investment by saving time and reducing system downtime. The engineer emphasized that AI is not a "magic" solution and still requires human oversight to define constraints and verify outputs, but that it functions as a force multiplier for problems engineers already understand yet struggle to diagnose because of system complexity.

Looking forward, the engineering team intends to explore proactive applications of the Rovo Dev CLI. This includes using the tool to perform automated policy enforcement and proactive checks to prevent similar Ingress mismatches from occurring during the initial provisioning phase. By moving from reactive troubleshooting to proactive prevention, the team aims to utilize AI to maintain higher standards of system reliability across their Google Cloud Platform infrastructure.
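One form such a provisioning-time check could take is sketched below. It is purely hypothetical: it assumes exact string matching of Host headers (as in the incident), assumes clients may send either the bare domain or the domain with ":443", and reduces an Ingress to its set of rule hosts plus a flag for whether an explicit default backend is defined. The class `IngressHostCheck` and its methods are illustrative names, not part of Rovo Dev or Kubernetes.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Set;

/**
 * Hypothetical provisioning-time check: for a domain an Ingress serves,
 * list the Host header variants that no host rule matches, and fail the
 * check unless an explicit default backend catches them.
 */
public class IngressHostCheck {

    // Host header forms that clients are assumed to send (bare and port-suffixed).
    static List<String> expectedHostVariants(String domain) {
        return List.of(domain, domain + ":443");
    }

    // Returns the variants that no rule host matches, assuming exact string matching.
    static List<String> unmatchedVariants(Set<String> ruleHosts, String domain) {
        List<String> unmatched = new ArrayList<>();
        for (String variant : expectedHostVariants(domain)) {
            if (!ruleHosts.contains(variant)) {
                unmatched.add(variant);
            }
        }
        return unmatched;
    }

    // Passes if every variant matches a rule, or if an explicit default backend
    // (assumed to point at the intended service) will catch the unmatched ones.
    static boolean passes(Set<String> ruleHosts, String domain, boolean hasExplicitDefaultBackend) {
        return hasExplicitDefaultBackend || unmatchedVariants(ruleHosts, domain).isEmpty();
    }

    public static void main(String[] args) {
        Set<String> rules = Set.of("search.example.com"); // hypothetical host rule
        System.out.println(unmatchedVariants(rules, "search.example.com"));
        System.out.println(passes(rules, "search.example.com", false));
        System.out.println(passes(rules, "search.example.com", true));
    }
}
```

With only the bare-domain rule, the check reports `search.example.com:443` as unmatched and fails unless a default backend is declared, which is the configuration gap behind the original 404s.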