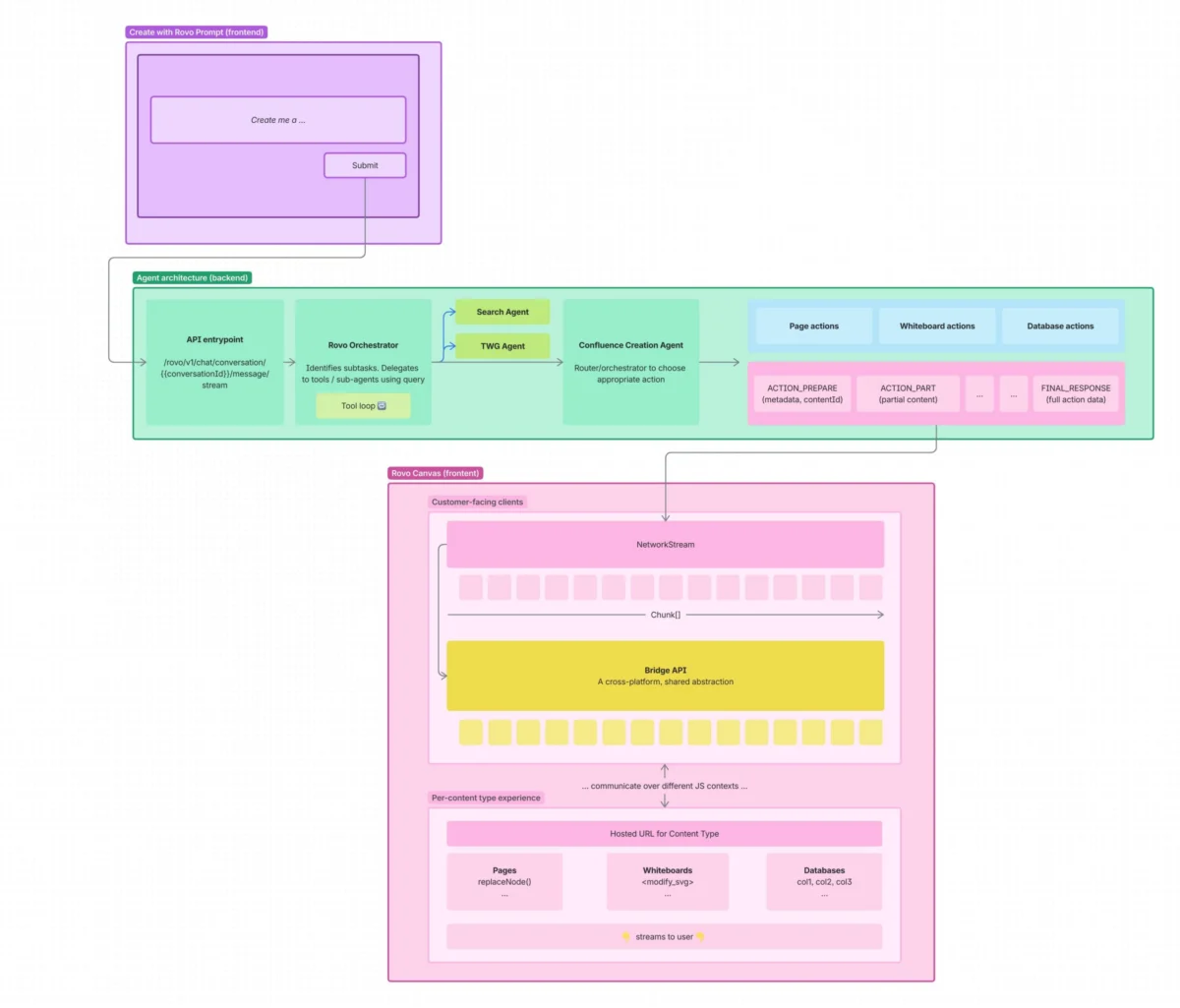

On March 19, 2026, Atlassian provided an in-depth technical overview of the engineering architecture powering Rovo, the company’s flagship AI agent, and its newly integrated collaborative creation canvas. The development represents a strategic shift in how artificial intelligence interacts with human workflows, moving away from a "handoff" model toward a synchronized, real-time collaborative environment. By unifying streaming technologies, structured data formats, and agent-powered orchestration, Atlassian has built a system capable of co-authoring complex documents, whiteboards, and databases across its entire product ecosystem.

Rovo was initially introduced as an AI agent designed to operate at the center of Atlassian’s "Teamwork Graph." Its primary function was to search across internal and third-party knowledge bases, pulling context from tools like Confluence, Jira, and various connected applications. While Rovo could surface information and interact with ecosystem objects, it previously lacked a dedicated space for iterative content generation. The "Creating content with Rovo" initiative was launched to fill this gap, providing a collaborative space where users and AI can refine rich content together in real time. The project’s guiding philosophy is that content creation should be a continuous collaboration rather than a linear exchange between a human and a machine.

The engineering team established several core principles to guide the development of the Rovo canvas. First, the tool was designed to be platform-agnostic, meaning it is not a standalone writing tool confined to a single product. While it has a primary entry point in Confluence, it is natively built on Rovo and accessible across every Rovo-supported surface. This allows for a seamless transition where a user might begin with a question in a chat interface, proceed to co-author a page, iterate on a visual whiteboard, and eventually refine a database—all within the same conversational context and powered by the same underlying AI logic.

At the heart of Rovo’s technical implementation is a top-level orchestrator agent. This orchestrator serves as the "brain" of the system, possessing the authority to invoke a suite of specialized skills based on the user’s intent. These skills include cross-product search capabilities, Teamwork Graph queries, and specialized retrieval agents for Jira. To facilitate the new canvas features, Atlassian introduced a purpose-built Confluence creation and editing skill. This specific agent is responsible for generating new content, modifying existing structures, and maintaining the integrity of the document format. Because it inherits the full context of the user’s conversation and connected knowledge sources, it can execute complex prompts, such as generating a project plan based on specific quarterly goals retrieved from the Teamwork Graph.

One of the primary technical hurdles addressed by the engineering team was the variety of underlying data representations required for different content types. Confluence pages, whiteboards, and databases each have distinct constraints and structural requirements. To maximize output quality, Atlassian conducted extensive evaluations across various Large Language Models (LLMs) and output formats to determine the most reliable approach for each medium.

For standard pages and "Live Docs," the system utilizes the Atlassian Document Format (ADF), a nested JSON structure. Generating valid ADF directly from an LLM is notoriously difficult due to the strictness of the schema. To solve this, the engineering team developed a proprietary ADF repair library. This library serves two functions: it cleans up syntax errors or structural hallucinations produced by the LLM and ensures that the final output adheres to the specific schema required for Confluence rendering. When editing existing pages, the LLM does not rewrite the entire document; instead, it utilizes a set of editor-style commands—such as "insert_after," "replace_node," or "delete_node"—to manipulate the ADF. This process operates as an agentic loop with reflection, similar to how a coding agent operates, allowing the AI to review its own edits before finalizing them.
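The command-based editing described above can be illustrated with a small sketch. This is not Atlassian's implementation: the `id` field used to address nodes, the command dictionary shape, and the helper names `find_parent` and `apply_command` are assumptions made for illustration. Only the three command names and the nested `type`/`content` JSON layout of ADF come from the description above.

```python
from copy import deepcopy


def find_parent(node, target_id):
    """Return (sibling_list, index) for the node whose hypothetical 'id' matches."""
    children = node.get("content", [])
    for index, child in enumerate(children):
        if child.get("id") == target_id:
            return children, index
        found = find_parent(child, target_id)
        if found is not None:
            return found
    return None


def apply_command(doc, command):
    """Apply one editor-style command to a copy of the document and return the copy."""
    doc = deepcopy(doc)  # leave the caller's document untouched
    located = find_parent(doc, command["target"])
    if located is None:
        raise KeyError(f"no node with id {command['target']!r}")
    siblings, index = located
    op = command["op"]
    if op == "insert_after":
        siblings.insert(index + 1, command["node"])
    elif op == "replace_node":
        siblings[index] = command["node"]
    elif op == "delete_node":
        del siblings[index]
    else:
        raise ValueError(f"unknown op {op!r}")
    return doc


def paragraph(node_id, text):
    """Build an ADF-style paragraph; the 'id' key is an illustrative addition."""
    return {"id": node_id, "type": "paragraph",
            "content": [{"type": "text", "text": text}]}


doc = {"version": 1, "type": "doc",
       "content": [paragraph("p1", "Goals"), paragraph("p2", "Draft")]}

commands = [
    {"op": "insert_after", "target": "p1", "node": paragraph("p3", "Q3 plan")},
    {"op": "replace_node", "target": "p2", "node": paragraph("p2", "Final")},
    {"op": "delete_node", "target": "p1"},
]

edited = doc
for command in commands:
    edited = apply_command(edited, command)
```

Because each command touches one node, a malformed edit can be rejected on its own without regenerating the page, which is presumably part of the appeal over whole-document rewrites.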

The implementation of AI-driven whiteboards presented a different set of challenges, specifically regarding spatial layout and visual semantics. The team evaluated several output formats, including JSON and Markdown, but ultimately determined that SVG (Scalable Vector Graphics) was the superior choice. The rationale was that LLMs, having been trained on vast amounts of web data, possess a strong understanding of SVG code. SVG also maps closely onto how humans perceive infinite-canvas boards: as shapes and text placed at specific coordinate positions.

To facilitate the whiteboard experience, Atlassian built a streaming SVG parser using the "saxjs" library. When combined with proprietary constraint-solving algorithms, this enabled what Atlassian describes as the market’s first released streaming UI experience for a digital whiteboard. This allows users to see their whiteboards being "assembled" in real time as the code streams from the LLM. For editing whiteboards, the system uses a "vision-to-code" approach where the current state of the board is converted into an SVG representation for the LLM to process. The team also implemented a "todo_list" tool, which requires the LLM to outline its plan—such as "move stickies to the left" or "change color to red"—before executing the changes. This planning step significantly improved the quality of complex, multi-step visual edits.
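The idea of parsing SVG while it is still streaming can be sketched as follows. The article names a JavaScript SAX library; this sketch swaps in Python's standard-library `XMLPullParser` as a stand-in. The `SHAPES` set, the chunk boundaries, and the function names are assumptions, and the constraint-solving layout step is not modelled at all.

```python
from xml.etree.ElementTree import XMLPullParser

# Element names treated as board items in this sketch (an illustrative choice).
SHAPES = {"rect", "circle", "ellipse", "line", "text"}


def _drain(parser):
    """Yield (tag, attributes) for every shape start tag the parser has reported so far."""
    for _event, element in parser.read_events():
        tag = element.tag.rsplit("}", 1)[-1]  # drop the "{namespace}" prefix if present
        if tag in SHAPES:
            yield tag, dict(element.attrib)


def stream_shapes(chunks):
    """Feed text chunks to the parser and yield shapes as their start tags are reported."""
    parser = XMLPullParser(events=("start",))
    for chunk in chunks:
        parser.feed(chunk)
        yield from _drain(parser)
    parser.close()  # raises ParseError if the document ended half-way
    yield from _drain(parser)


# Chunks deliberately split tags and attributes mid-token, as a token stream might.
chunks = [
    '<svg xmlns="http://www.w3.org/2000/svg" width="400" hei',
    'ght="200"><rect x="10" y="10" width="120" height="80" fill="yellow"/>',
    '<circle cx="200" cy="50" r="30"/><te',
    'xt x="20" y="50">Idea</text></svg>',
]

shapes = list(stream_shapes(chunks))
for tag, attributes in shapes:
    print(tag, attributes)
```

A renderer built on this pattern could draw each shape when it is yielded instead of waiting for the closing `</svg>` tag. How promptly events surface depends on the underlying XML parser's buffering, so the sketch makes no timing guarantees.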

The third content type, databases, required a structured yet flexible format. The engineering team settled on a system where the LLM produces three separate CSV (Comma-Separated Values) sections—schema, views, and data—wrapped in XML tags. This separation ensures that the presentation layer and the data layer remain distinct and parseable even as the information is being streamed. When a user requests an edit to a database, the model receives the current representation and outputs two CSVs: one for schema changes and one for data row updates. Each row in these CSVs represents a declarative change, allowing the system to apply updates safely and incrementally.
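To make the three-section format concrete, here is a minimal sketch of parsing such a payload. The tag names `<schema>`, `<views>`, and `<data>`, the column headers, and the sample rows are all assumptions; the article states only that three CSV sections are wrapped in XML tags. The sketch parses a complete payload and does not attempt incremental streaming.

```python
import csv
import io
import re

SECTION_NAMES = ("schema", "views", "data")  # hypothetical tag names


def parse_sections(payload):
    """Split the payload into its tagged sections and parse each one as CSV rows."""
    sections = {}
    for name in SECTION_NAMES:
        match = re.search(rf"<{name}>(.*?)</{name}>", payload, re.DOTALL)
        if match:
            body = match.group(1).strip()
            sections[name] = list(csv.DictReader(io.StringIO(body)))
    return sections


payload = """\
<schema>
column,type
Task,text
Owner,user
Due,date
</schema>
<views>
name,layout
All tasks,table
</views>
<data>
Task,Owner,Due
Write spec,Ana,2026-04-01
"Review, then ship",Ben,2026-04-15
</data>
"""

sections = parse_sections(payload)
for name, rows in sections.items():
    print(name, rows)
```

Keeping the schema and views apart from the data means a client can, in principle, set up columns and layout before any rows arrive, and standard CSV quoting handles cell values that contain commas.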

Delivering this generated content to the client required a robust streaming architecture. Atlassian opted against building simplified previews; instead, the canvas renders content using the exact same components used in the standard Confluence experience, embedded within an iframe. This ensures that the AI-generated canvas is fully featured and functional from the moment it appears. To support this, a new streaming API contract was defined, specifying how the LLM should handle different content types.

Furthermore, the team developed the "Rovo Bridge API" to facilitate bidirectional communication between the chat interface and the content objects. This library allows distinct applications—such as the Rovo chat window and a Confluence page—to communicate using local function calls while abstracting the underlying transport mechanisms. This bridge is essential for features like "click-to-edit," where an action in the chat triggers a specific change in the canvas, or where the canvas provides feedback to the chat regarding the success of a generation task. The underlying transport uses "postMessage" for web-based communication and specialized bridges for the Atlassian desktop app.
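The pattern of exposing local function calls over an abstracted transport can be sketched roughly as below. Nothing here reflects the actual Rovo Bridge API: the class names, the message shape, and the `replace_text` method are hypothetical, and a synchronous in-memory transport stands in for `postMessage`, which is asynchronous in a real browser.

```python
import itertools
import json


class InMemoryTransport:
    """Stand-in for postMessage: hands serialized messages straight to a peer."""

    def __init__(self):
        self.peer = None
        self.handler = None

    def send(self, raw):
        self.peer.handler(raw)


def connect():
    """Create two transports wired to each other."""
    a, b = InMemoryTransport(), InMemoryTransport()
    a.peer, b.peer = b, a
    return a, b


class Bridge:
    """Lets one side call functions exposed by the other without seeing the transport."""

    def __init__(self, transport):
        self.transport = transport
        self.transport.handler = self._on_message
        self.methods = {}
        self.results = {}
        self.ids = itertools.count(1)

    def expose(self, name, fn):
        self.methods[name] = fn

    def call(self, method, **params):
        call_id = next(self.ids)
        request = {"kind": "request", "id": call_id, "method": method, "params": params}
        self.transport.send(json.dumps(request))
        # The in-memory transport is synchronous, so the response is already stored.
        return self.results.pop(call_id)

    def _on_message(self, raw):
        message = json.loads(raw)
        if message["kind"] == "request":
            result = self.methods[message["method"]](**message["params"])
            response = {"kind": "response", "id": message["id"], "result": result}
            self.transport.send(json.dumps(response))
        else:
            self.results[message["id"]] = message["result"]


chat_transport, canvas_transport = connect()
chat, canvas = Bridge(chat_transport), Bridge(canvas_transport)

# The canvas side exposes a hypothetical edit function; the chat side calls it by name.
canvas.expose("replace_text",
              lambda node_id, text: {"ok": True, "node_id": node_id, "length": len(text)})
result = chat.call("replace_text", node_id="p2", text="Final plan")
print(result)
```

Swapping `InMemoryTransport` for a different class with the same `send` and `handler` members would leave the calling code unchanged, which is the kind of abstraction the paragraph above describes.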

In its concluding remarks, Atlassian emphasized that building for AI requires a fundamental shift in engineering mindsets. Because models and prompts evolve rapidly, the company has prioritized a robust evaluation suite, extensive metrics for online experimentation, and comprehensive reliability monitoring. These tools allow the engineering team to iterate on Rovo’s capabilities with confidence. The collaborative AI canvas is viewed as a foundational element that provides a unified creation surface, a powerful editing framework for LLMs, and a standardized streaming protocol. Atlassian indicated that this architecture will serve as the basis for expanding Rovo’s capabilities to new content types and surfaces in the future, signaling that the current release is only the beginning of its roadmap for AI-integrated productivity.