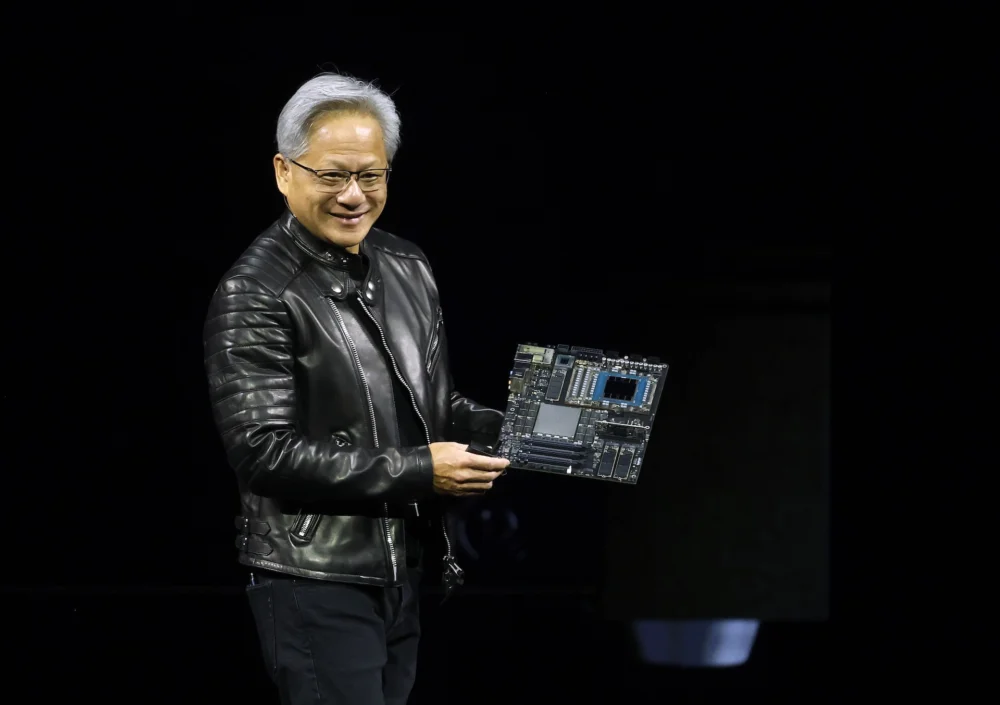

Nvidia is set to kick off its annual GTC developer conference in San Jose, California, next week, with CEO Jensen Huang’s keynote address scheduled for Monday at 11:00 AM PT / 2:00 PM ET. GTC, which stands for GPU Technology Conference, is Nvidia’s premier platform for announcing new products, highlighting strategic partnerships, and laying out its vision for the future of computing. Huang’s two-hour keynote is expected to be the focal point, covering Nvidia’s role in shaping the future of computing and artificial intelligence. Attendees can watch the address in person at the SAP Center or livestream it from the official event website.

The broader three-day conference is designed to spotlight the next frontiers of AI across a range of industries, including healthcare, robotics, and autonomous vehicles. GTC promises insights into the practical applications and future trajectory of artificial intelligence, and it attracts a global audience of developers, researchers, business leaders, and enthusiasts eager to see the innovations expected to drive the next wave of technological progress.

On the software front, Nvidia is reportedly preparing to unveil an open-source platform for enterprise AI agents, said to be called "NemoClaw." As originally reported by Wired, the platform aims to give businesses a structured framework for building and deploying AI agents, software that can autonomously carry out complex, multi-step tasks ranging from data analysis and customer service automation to intricate operational processes. NemoClaw would position Nvidia to compete directly with enterprise-focused AI agent offerings already available from companies like OpenAI, signaling Nvidia’s intent to broaden its ecosystem beyond foundational AI infrastructure into application-level tooling. An open-source approach could accelerate adoption by letting a wider community of developers and enterprises customize these agents and integrate them into their own workflows.

Significant hardware announcements are also anticipated. The Wall Street Journal has reported that Nvidia is planning to release a new chip engineered to accelerate AI inference. The distinction between inference and training is crucial in the AI lifecycle. Training involves feeding vast datasets to a model to teach it patterns and relationships, a process that demands immense computational power and is largely dominated by Nvidia’s high-end GPUs. Inference is the subsequent phase, in which the trained model applies what it has learned to generate responses, make predictions, or execute decisions based on new inputs. While less computationally intensive than training, efficient inference is critical for real-time applications and scalable AI deployment.

The development of a dedicated inference chip underscores Nvidia’s strategic ambition to cement its dominance across the entire AI pipeline. Currently, Nvidia commands an estimated 80% share of the AI training market, a testament to the unparalleled performance of its GPUs for computationally heavy workloads. However, the inference market presents a different competitive landscape, with intensifying rivalry from custom-designed chips developed by tech giants such as Google (with its Tensor Processing Units, or TPUs) and Amazon (with its Inferentia chips), among others. These custom silicon solutions are optimized for specific inference workloads, often offering compelling cost-efficiency and performance. A new Nvidia chip focused on accelerating inference would represent a powerful counter-move, aiming to make AI applications faster, more accessible, and more cost-effective for businesses globally. Faster and cheaper inference is widely recognized as one of the last significant bottlenecks preventing the broad scaling of AI applications across various industries, making this rumored hardware announcement particularly impactful.

GTC is also expected to clarify Nvidia’s relationship with Groq, an inference-focused company. Kevin Cook, a senior equity strategist at Zacks Investment Research, told TechCrunch that attendees are eager to learn more about the future direction of this collaboration. Late last year, Nvidia reportedly paid $20 billion to license Groq’s technology. The tie-up has generated considerable curiosity within the industry, especially given that Groq’s founder, Jonathan Ross, its president, Sunny Madra, and other key members of the Groq team have agreed to join Nvidia. This suggests a deeper integration and a concerted effort to advance and scale the licensed technology within Nvidia’s ecosystem. Groq is known for its Language Processing Unit (LPU) architecture, which delivers high-speed inference, particularly for large language models. Nvidia’s decision to license Groq’s technology and bring on key talent could signal a move to bolster its inference capabilities, potentially by incorporating Groq’s architectural strengths into its future product lines or software stacks. The development could have significant implications for the competitive dynamics of the AI hardware market, giving Nvidia another tool against rival custom chip developers.

Beyond these specific product and partnership discussions, GTC will, as always, feature a wide array of other partnership announcements and live demonstrations highlighting Nvidia’s AI capabilities and its collaborations across industries. These span research in medical imaging and drug discovery, robotic automation for manufacturing and logistics, and the continued evolution of autonomous driving systems. The demonstrations typically provide concrete examples of how Nvidia’s hardware and software platforms are enabling new breakthroughs. The conference serves not only as a platform for product launches but also as a forum for knowledge exchange, networking, and collaborative problem-solving, reinforcing Nvidia’s position at the center of the artificial intelligence industry.