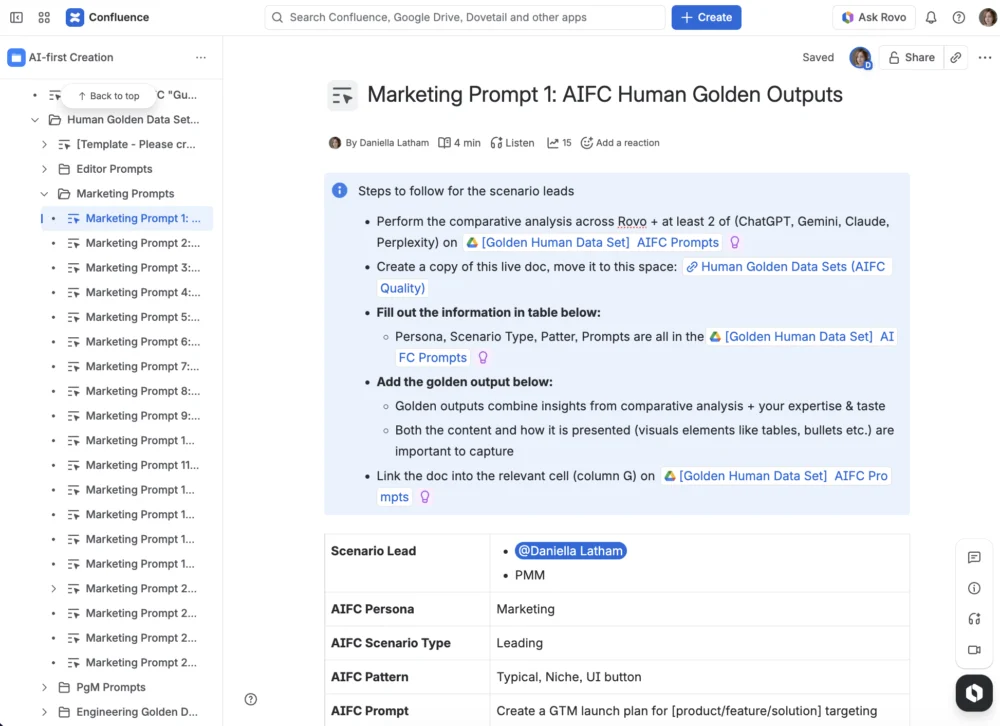

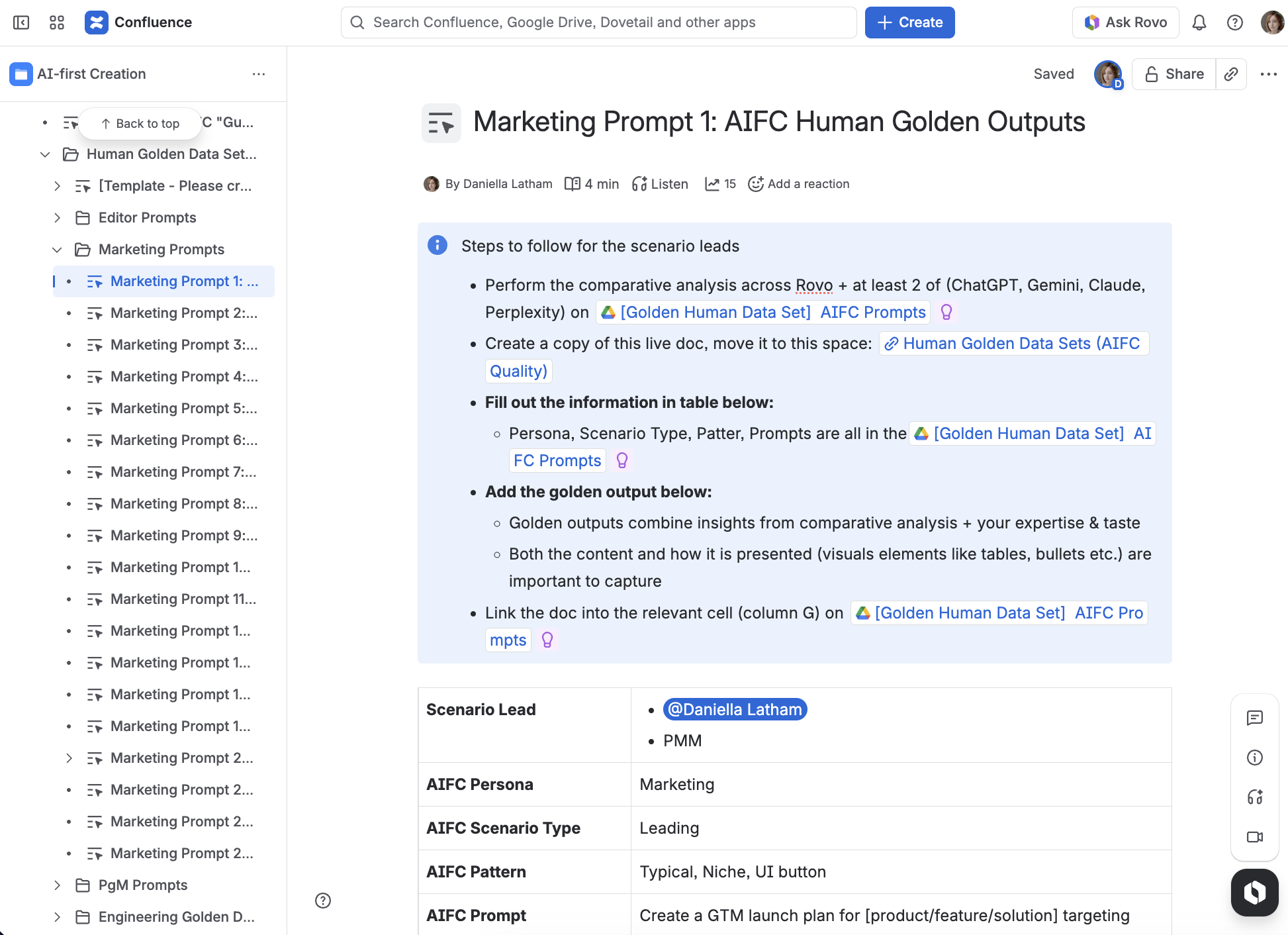

In the lead-up to its Team ’25 Europe event, Atlassian unveiled a framework for turning artificial intelligence from a speculative tool into a reliable enterprise asset. At the center of this transition is Atlassian Rovo, an AI-powered creation experience built directly into Confluence. Using a methodology centered on "golden prompts," the company’s cross-functional teams have moved beyond the hype phase of AI and established a system in which generative technology produces accountable, high-quality output for complex professional workflows.

The initiative was driven by a fundamental challenge facing modern enterprises: ensuring that AI-generated content remains robust and accurate within the "messy" and unpredictable environments where teams actually operate. For the Team ’25 Europe showcase, Atlassian’s Product Marketing, Engineering, and Product Management divisions collaborated to ensure that Rovo’s output—ranging from Product Requirements Documents (PRDs) and project plans to marketing briefs—met the rigorous standards required for live demonstrations and everyday professional use.

Atlassian defines a "golden prompt" as a specifically engineered request designed to produce high-quality, high-stakes output for well-defined use cases. Unlike casual AI interactions, which may involve simple queries or creative experimentation, golden prompts are treated as strategic corporate assets. These prompts are characterized by their repeatability, their reliance on deep organizational context, and their ability to withstand professional scrutiny.

The strategy dictates that golden prompts should be employed whenever AI begins to influence shared plans, critical business decisions, or customer-facing deliverables. The company notes that while one-off, low-risk tasks may not require such precision, any work that carries accountability requires a "golden" standard to ensure the AI acts as a reliable partner rather than a source of potential error.

To implement this framework, Atlassian identified and refined 10 to 15 golden prompts for five key personas: Marketing, Sales, Product Management, Engineering, and general Knowledge Workers. Each prompt was designed to capture the specific goals of the persona, the necessary data inputs (context), and the precise requirements of the final output.
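The three parts each prompt captures (persona goals, context inputs, and output requirements) can be pictured as a small record. The following is a minimal sketch only: the `GoldenPrompt` class, its field names, and the sample values are hypothetical illustrations, not Atlassian's actual schema or prompt text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GoldenPrompt:
    """Hypothetical record for one golden prompt (not Atlassian's schema)."""
    persona: str                          # e.g. "Product Management"
    goal: str                             # what the persona wants to achieve
    context: tuple[str, ...]              # data inputs the model should draw on
    output_requirements: tuple[str, ...]  # what the final output must contain

    def render(self) -> str:
        """Flatten the parts into a single prompt string."""
        lines = [f"Persona: {self.persona}", f"Goal: {self.goal}", "Context:"]
        lines += [f"- {item}" for item in self.context]
        lines.append("Output requirements:")
        lines += [f"- {item}" for item in self.output_requirements]
        return "\n".join(lines)


# Illustrative values only; the real prompts are not given in the article.
prd_prompt = GoldenPrompt(
    persona="Product Management",
    goal="Draft a PRD for a new feature",
    context=("customer interview notes", "current roadmap"),
    output_requirements=("problem statement", "success metrics"),
)
print(prd_prompt.render())
```

Keeping the record immutable (`frozen=True`) reflects the idea that a golden prompt is a repeatable asset: a change produces a new version rather than silently altering one that has already been tested.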

For Marketing professionals, golden prompts focus on creating comprehensive campaign briefs and social media copy that aligns with brand voice. For Sales, the focus shifts to generating follow-up emails and pitch decks based on customer interactions. Product Managers use these prompts to draft PRDs and roadmaps, while Engineering teams use them to generate technical specifications and incident post-mortems. For the general Knowledge Worker, the prompts are optimized for summarizing complex meeting notes and synthesizing information from disparate sources.

This persona-based approach served as an "alignment device" for Atlassian’s internal teams. It forced a shared understanding of what constitutes "quality" for each role and identified the exact points where AI should hand off work to human experts.

The development of these prompts was not an isolated creative exercise but a data-driven engineering project. A "braintrust" of representatives from Marketing, Product, and Engineering tested each prompt repeatedly. Notably, the prompts were run against a range of widely used AI assistants built on large language models (LLMs), including ChatGPT, Claude, Gemini, and Perplexity, alongside Atlassian’s own Rovo.

The decision to test across multiple tools was strategic. It allowed the team to benchmark Rovo’s performance against the broader market and ensured that the prompts were robust enough to yield high-quality results regardless of the underlying model. This "multi-LLM" approach helped the team identify the unique strengths of different architectures and informed the refinement of Rovo’s internal logic.
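A rough sketch of what running one prompt across several tools could look like is shown below. It assumes each tool is wrapped as a plain function from prompt text to response text. The `run_across_models` helper and the two stub models are hypothetical stand-ins, and no real vendor API is called.

```python
from typing import Callable, Dict

# A "model" here is any callable that maps a prompt string to a response string.
ModelFn = Callable[[str], str]


def run_across_models(prompt: str, models: Dict[str, ModelFn]) -> Dict[str, str]:
    """Send the same prompt to every model and collect responses by name."""
    return {name: call(prompt) for name, call in models.items()}


# Stand-in functions (assumptions); real use would wrap each vendor's client.
def stub_model_a(prompt: str) -> str:
    return f"[model-a] {prompt.upper()}"


def stub_model_b(prompt: str) -> str:
    return f"[model-b] {prompt[::-1]}"


responses = run_across_models(
    "draft a campaign brief",
    {"model-a": stub_model_a, "model-b": stub_model_b},
)
for name, text in responses.items():
    print(name, "->", text)
```

Holding the prompt fixed and varying only the model is what makes the side-by-side comparison meaningful: any difference in output comes from the tool rather than from the request.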

The evaluation process followed a strict five-step cycle:

A "high-quality" output was required to meet four specific criteria: it had to be accurate and grounded in provided facts, maintain a professional and relevant tone, include the necessary context from the Atlassian ecosystem, and follow a structure that made the content immediately usable.
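The four criteria can be read as a checklist that a reviewer fills in for each output. The sketch below is one possible encoding, assuming a simple pass/fail judgment per criterion. The `QualityReview` class and its field names are hypothetical, and the article does not describe how Atlassian actually scored outputs.

```python
from dataclasses import dataclass, fields


@dataclass
class QualityReview:
    """Hypothetical pass/fail review of one output against the four criteria."""
    accurate_and_grounded: bool       # accurate and grounded in provided facts
    professional_tone: bool           # professional, relevant tone
    includes_ecosystem_context: bool  # necessary Atlassian ecosystem context
    usable_structure: bool            # structure makes content usable as-is

    def failed_criteria(self) -> list[str]:
        """Names of the criteria the reviewer marked as not met."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def is_high_quality(self) -> bool:
        """High quality only when every criterion is met."""
        return not self.failed_criteria()


# Example: an output that misses the context criterion.
review = QualityReview(
    accurate_and_grounded=True,
    professional_tone=True,
    includes_ecosystem_context=False,
    usable_structure=True,
)
print(review.is_high_quality())   # False
print(review.failed_criteria())   # ['includes_ecosystem_context']
```

Requiring all four criteria, rather than averaging a score, matches the article's framing that an output "was required to meet" each one.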

To bring these prompts to life, Atlassian utilized Confluence whiteboards to map end-to-end user journeys. This allowed the team to visualize the "messy back-and-forth" that characterizes real human-AI collaboration. By mapping these flows before building software screens or keynote animations, the team could identify critical "magic moments" and potential friction points.

The team focused on two primary narrative arcs: a Marketing Campaign launch and a Product Launch. These scenarios were designed to show the AI performing "heavy lifting"—such as synthesizing research—while highlighting the moments where a human must step in to provide strategic direction or final approval.

This mapping process was essential for designing the "human-in-the-loop" experience. It ensured that Rovo did not operate as a "black box" but as a transparent assistant that allows users to refine, question, and adjust outputs in real-time. By documenting these loops on whiteboards, the team was able to define the precise boundaries of AI autonomy and human oversight.

The success of the golden prompt initiative is attributed to close cross-functional partnership within Atlassian. The project involved specialists from several disciplines, including Product Managers Aniket Vaidya and John Murnen, and an Engineering team led by Paul Borza, Velu Alagianambi, Peter Martigny, and Dhanraj Jadhav. Sales perspectives were provided by Scott Silver, while Marketing was represented by Shelley Wang and Nidhi Chaudhry. Program management was overseen by Manjiri Soman and Guru Prakash Nagarajan.

This collaboration ensured that the prompts were technically sound, commercially relevant, and user-friendly. The company reports that this early investment in cross-functional "braintrusts" has already paid dividends, resulting in more stable product features, faster development cycles for AI interactions, and a higher level of trust from internal stakeholders and early adopters.

The work performed for Team ’25 Europe represents a broader shift in Atlassian’s approach to AI. By focusing on "golden prompts," the company is moving away from generic AI applications toward specialized, context-aware tools that understand the specific nuances of different professional roles.

The methodology emphasizes that the value of AI in the enterprise is not found in the complexity of the technology alone, but in the reliability of its application. By treating prompts as rigorous technical requirements rather than casual suggestions, Atlassian has established a blueprint for how organizations can integrate generative AI into their core operations without sacrificing accountability or quality.

Atlassian has confirmed that the "Create and Edit with Rovo" experience is now live and available to users. This feature allows teams to turn prompts and context into ready-to-use work—including pages, plans, and briefs—directly within Confluence. As the company continues to iterate on this technology, the "golden prompt" framework will remain a cornerstone of its strategy to deliver AI that is both magical in its execution and dependable in its results.