AI writing tools promise to accelerate content creation, but a recent experiment suggests that the real bottleneck in content marketing is not the writing itself but the quality and verification of the underlying information. The author generated 40 articles using Claude and found that, while AI speeds up drafting, it does not supply the verified facts and reference material that effective content depends on.

The author does not single out any one AI writing platform, such as Jasper, Frase, or Writesonic, and worked directly with the Large Language Model (LLM), coupled with a proprietary system for managing their own data and processes. Off-the-shelf tools may be adequate for users with limited writing or SEO skills, or for those short on time, but the author argues that they impose a quality ceiling rather than providing a foundation for high-caliber content.

1. The Research Problem: AI Rehashes Existing Rankings

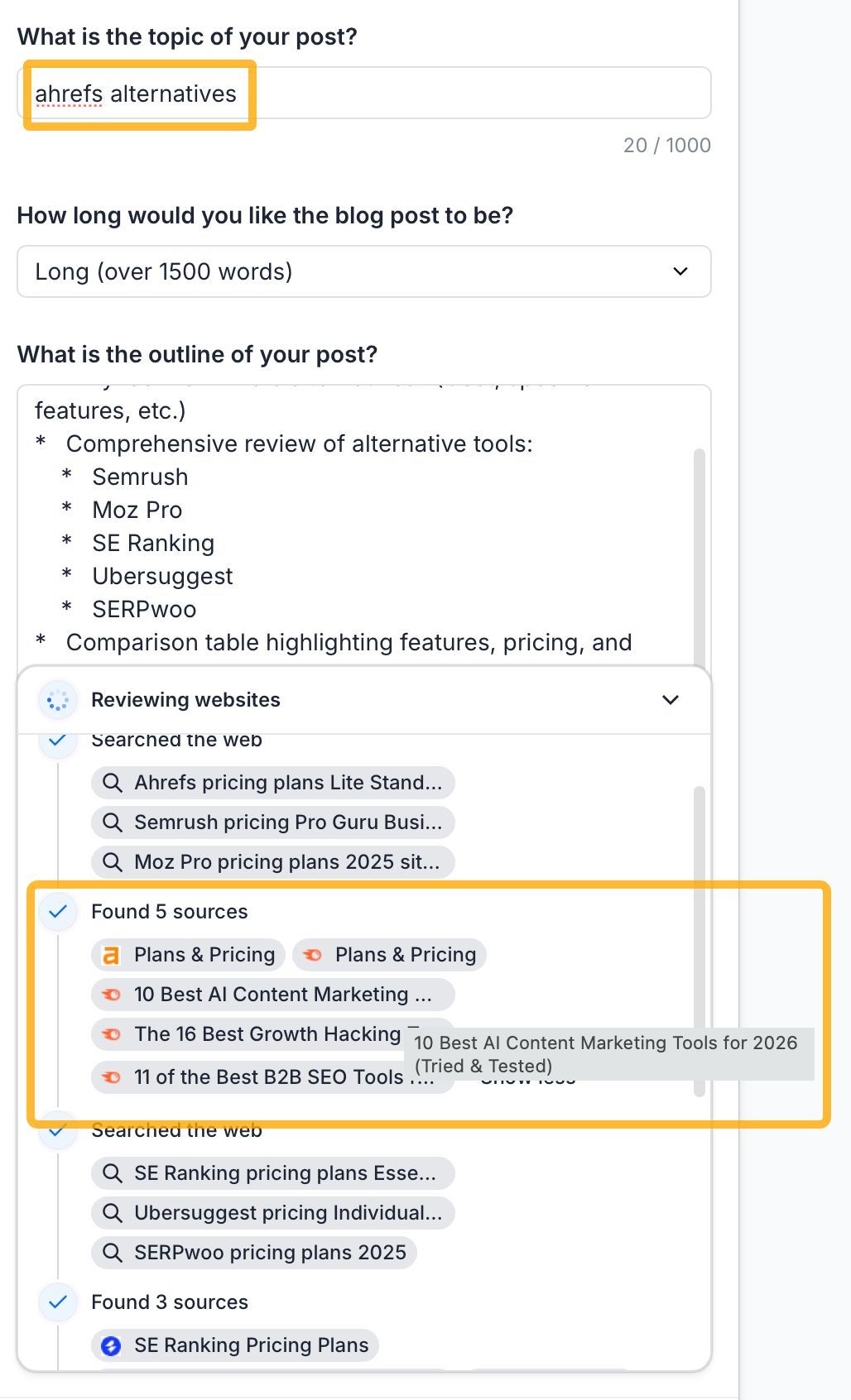

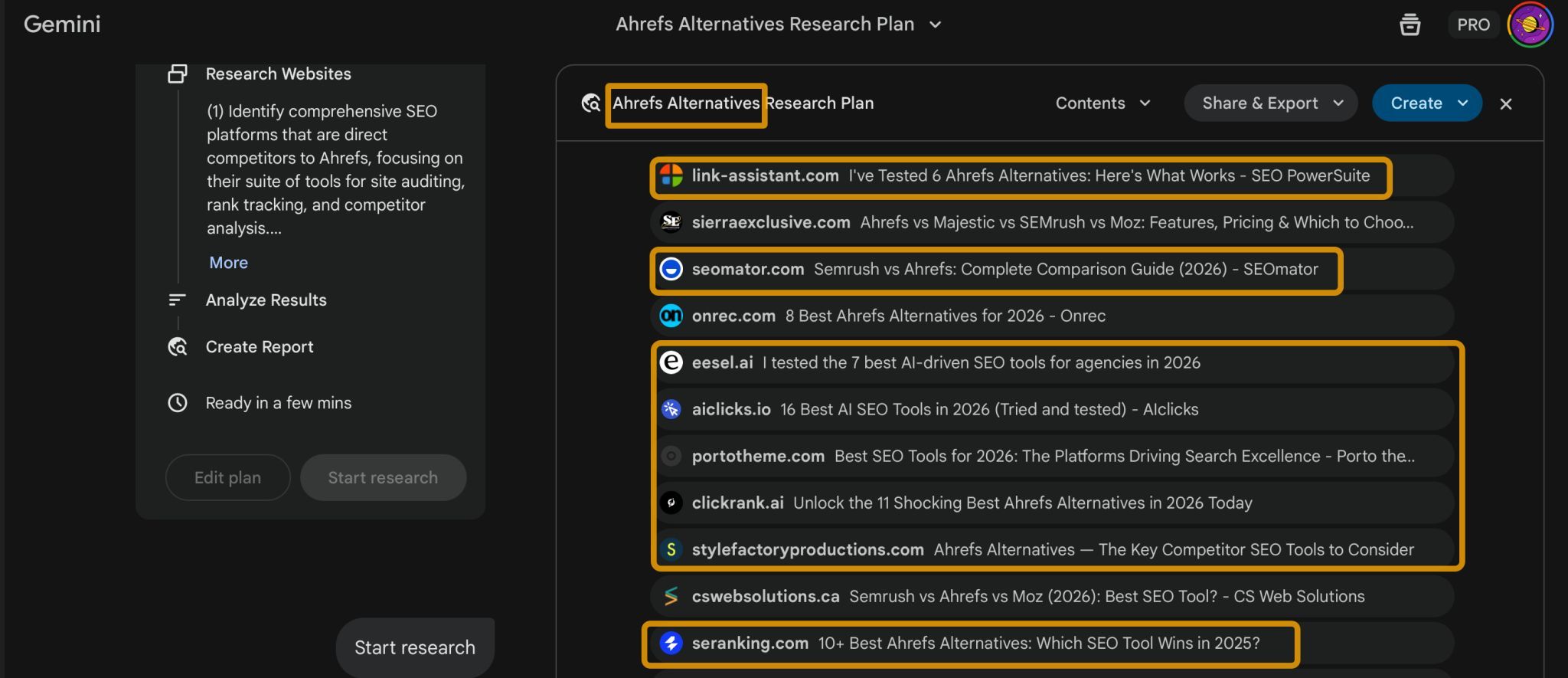

A significant issue identified is the research methodology of most AI writing tools. They tend to "fact-check" content by cross-referencing against existing top-ranking search results. This often means relying on competitor marketing pages, outdated blog posts, or articles that have themselves copied information from other sources. This process, described as "laundering errors through consensus," can lead to the AI presenting inaccurate information as fact if multiple flawed sources converge.

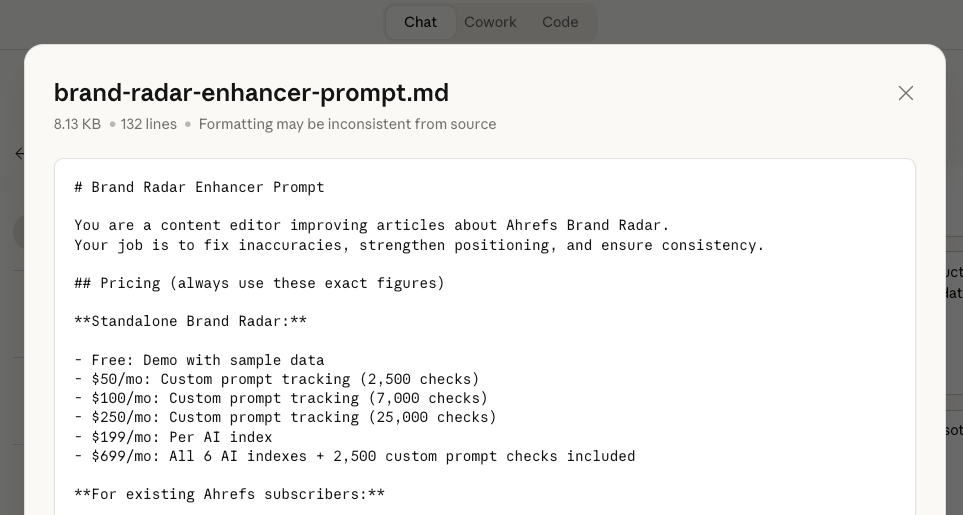

The consequences of this approach can be severe, with the author experiencing incorrect pricing, misrepresented features, and significantly inaccurate numerical data. The AI’s inability to discern biased or unreliable sources exacerbates the problem, paving the way for "worldwide meta-spam." Even advanced assistants like Gemini, when used as a basis for article generation, exhibit this tendency to rely on existing, and potentially flawed, search results for branded topics.

For complex content, such as a comparison of eight products, the AI was unable to manage the necessary 15-20 reference files, including product-specific fact-checked documents, style guides, and editing checklists.

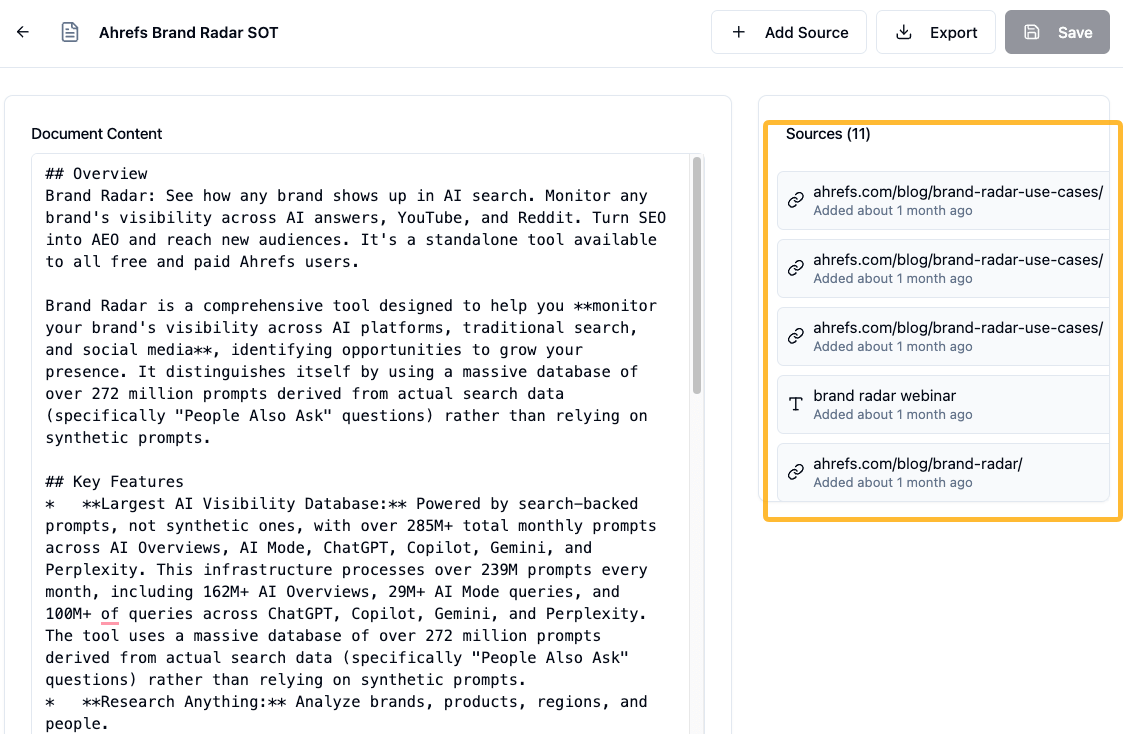

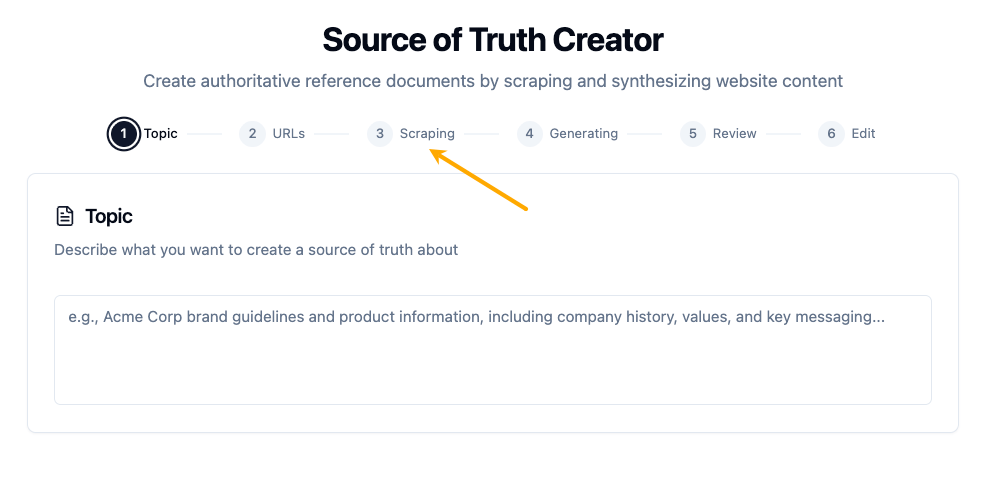

Solution: The author advocates for building custom "source of truth" (SOT) files for every product and competitor covered. This involves compiling verified data, such as pricing, features, and key metrics, into easily digestible formats. For competitor analysis, this means preparing documents detailing their pricing pages, feature lists, and limitations. The recommendation is to dedicate a significant portion of the project timeline – up to three weeks for a four-week project – to building these reference files before initiating any AI content generation.
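As a rough illustration of what an SOT file might look like in practice, the sketch below stores verified prices for one product and flags any dollar amount in a draft that does not match. The `SourceOfTruth` class, the `find_unverified_prices` helper, the product name, and every figure are hypothetical placeholders, not the author's actual format or data.

```python
import re
from dataclasses import dataclass, field


@dataclass
class SourceOfTruth:
    """Verified facts for one product (all values below are made-up placeholders)."""
    product: str
    verified_on: str  # date the facts were last checked by a human
    prices_usd: dict[str, float] = field(default_factory=dict)  # plan name -> monthly price
    features: list[str] = field(default_factory=list)


def find_unverified_prices(draft: str, sot: SourceOfTruth) -> list[float]:
    """Return dollar amounts mentioned in the draft that are absent from the SOT file."""
    matches = re.findall(r"\$(\d[\d,]*(?:\.\d+)?)", draft)
    mentioned = [float(m.replace(",", "")) for m in matches]
    known = set(sot.prices_usd.values())
    return [amount for amount in mentioned if amount not in known]


# Hypothetical SOT entry; in practice the values come from the vendor's pricing page.
example_sot = SourceOfTruth(
    product="ExampleTool",
    verified_on="2025-01-01",
    prices_usd={"Starter": 29.0, "Pro": 99.0},
    features=["keyword tracking", "site audit"],
)

draft = "ExampleTool starts at $29 per month, and the Pro plan costs $89."
print(find_unverified_prices(draft, example_sot))  # [89.0]
```

A check like this only catches prices that disagree with the file. It says nothing about features or other claims, which would still need a human or a separate prompt to verify.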

2. The Process Problem: One-Shot Generation vs. Iterative Writing

AI writing tools often operate like assembly lines, designed for a single generation pass. However, the author likens effective writing to cooking, a process that involves continuous tasting, adjustment, and the incorporation of unplanned elements. Even with attempts to control brand voice through dropdowns or style files, AI-generated content consistently requires substantial editing. Achieving the desired voice often necessitated five to six rounds of revisions per article, involving detailed feedback on tone and clarity.

The user interface of many writing tools also proves limiting, offering fixed editing options that cannot accommodate the nuanced, multi-level revisions required – from single sentence rewrites to complete section restructuring.

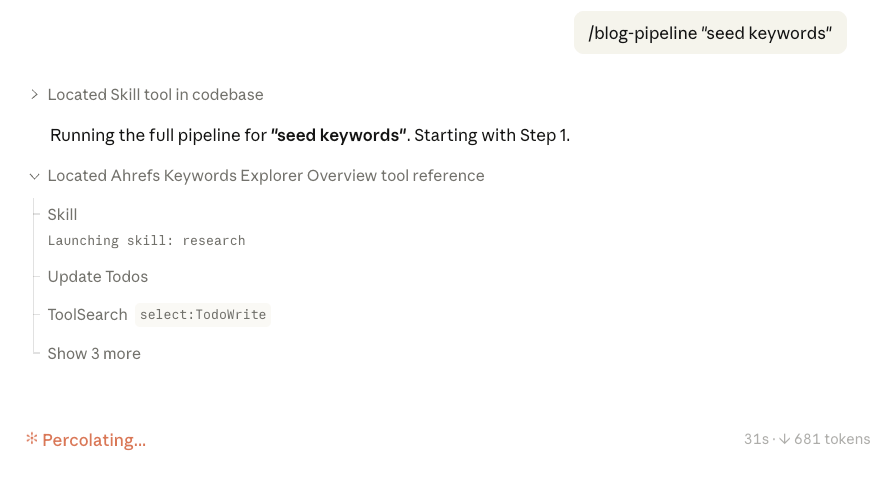

Solution: The author suggests breaking down the writing workflow into repeatable tasks and developing specific prompts for each. This iterative approach, refined through trial and error, allows for greater control and quality. These specialized prompts can later be integrated into more automated workflows, such as "Claude skills." For critical steps, running prompts twice or using a second AI for cross-checking is recommended.
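One way to picture "a prompt per task, run twice for critical steps" is the sketch below. The prompt wording, the `run_task` and `run_twice` helpers, and the `fake_model` stand-in are all assumptions made for illustration. A real setup would pass in a function that calls whichever model is in use, and comparing outputs by exact text is a deliberately crude agreement check.

```python
from typing import Callable

# Hypothetical task prompts; the wording is illustrative, not the author's.
TASK_PROMPTS = {
    "outline": "Draft an outline for an article on: {topic}",
    "fact_check": "List every claim in this text that the reference files do not support: {text}",
    "voice_edit": "Rewrite this passage to match the style guide: {text}",
}


def run_task(task: str, generate: Callable[[str], str], **fields: str) -> str:
    """Fill in the prompt template for one task and send it to the model."""
    prompt = TASK_PROMPTS[task].format(**fields)
    return generate(prompt)


def run_twice(task: str, generate: Callable[[str], str], **fields: str) -> tuple[str, str, bool]:
    """Run a critical task twice and report whether the two outputs agree exactly."""
    first = run_task(task, generate, **fields)
    second = run_task(task, generate, **fields)
    return first, second, first.strip() == second.strip()


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call so the sketch runs offline."""
    return prompt.upper()


first, second, agree = run_twice("outline", fake_model, topic="link building")
print(agree)  # True for this deterministic stand-in; real models will often differ
```

When the two runs disagree, the differing passages are the ones worth a human look. The same shape works for a second-model cross-check by passing a different `generate` function for the second call.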

3. The Scale Problem: AI Tools Treat Articles as Isolated Entities

While AI writing tools often tout scalability, their practical implementation for large-scale content production proved frustrating. Building complex workflows was difficult, human-in-the-loop functionality was limited, and output quality tended to degrade as requirements became more nuanced.

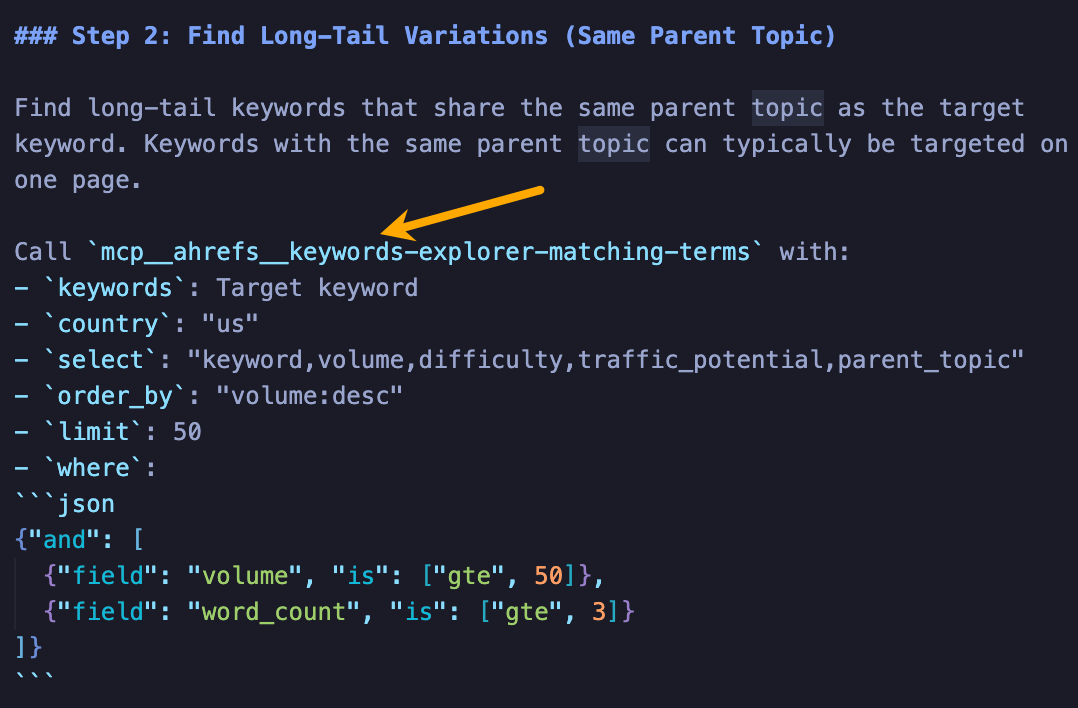

Solution: The author champions the use of AI assistants with coding capabilities, such as Claude Code and OpenAI Codex. From a single instruction, these tools can run a comprehensive process that includes fetching SEO data, referencing SOT files, and scraping web pages. By defining phases for content generation, users can let the AI run autonomously while they focus on other tasks. Integration with research tools, like Ahrefs’ MCP, can further automate data input into these workflows. Even manually gathered inputs, such as screenshots, can be effective if they are passed to the AI with specific instructions.
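The idea of defined phases can be sketched as a small pipeline in which each phase reads and extends a shared context. The phase names, the placeholder data, and the `run_phases` helper below are hypothetical. In the workflow the article describes, a coding assistant would carry out these steps from written instructions rather than from hand-written functions like these.

```python
from typing import Callable

Phase = tuple[str, Callable[[dict], dict]]


def fetch_seo_data(ctx: dict) -> dict:
    # Placeholder: a real phase would pull keyword data from a research tool.
    return {**ctx, "keywords": ["placeholder keyword"]}


def load_sot_files(ctx: dict) -> dict:
    # Placeholder: a real phase would read the verified reference files from disk.
    return {**ctx, "facts": {"ExampleTool": {"Starter": 29.0}}}


def write_draft(ctx: dict) -> dict:
    # Refuse to draft without verified facts, mirroring the "SOT first" advice.
    if not ctx.get("facts"):
        raise ValueError("no source-of-truth facts loaded; run load_sot_files first")
    return {**ctx, "draft": f"Draft covering {', '.join(ctx['keywords'])}"}


PHASES: list[Phase] = [
    ("fetch_seo_data", fetch_seo_data),
    ("load_sot_files", load_sot_files),
    ("write_draft", write_draft),
]


def run_phases(phases: list[Phase], ctx: dict) -> dict:
    """Run each phase in order, recording which ones have completed."""
    for name, step in phases:
        ctx = step(ctx)
        ctx = {**ctx, "completed": ctx.get("completed", []) + [name]}
    return ctx


result = run_phases(PHASES, {"topic": "example comparison"})
print(result["completed"])  # ['fetch_seo_data', 'load_sot_files', 'write_draft']
```

Keeping a record of completed phases makes it easier to resume or inspect a run after it has been left unattended.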

4. The Economics Problem: Wrapper Costs Exceeding Engine Value

Direct chatbot subscriptions and dedicated AI writing tools differ sharply in cost. A chatbot subscription, often around $20 per month, provides access to the latest models with unlimited generation. In contrast, writing tools can range from $50 to $2,000 per month and frequently use older models with usage caps. In effect, users pay more for less capability.
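To put the gap in annual terms, the short calculation below uses only the monthly figures quoted above (about $20 for a chatbot, $50 to $2,000 for a writing tool). Actual prices vary by vendor and plan, so treat the output as back-of-the-envelope arithmetic.

```python
CHATBOT_MONTHLY = 20             # approximate chatbot subscription, USD
TOOL_MONTHLY_RANGE = (50, 2000)  # low and high end of writing-tool pricing, USD


def annual(monthly: int) -> int:
    """Convert a monthly price to a yearly one."""
    return monthly * 12


for tool_monthly in TOOL_MONTHLY_RANGE:
    extra = annual(tool_monthly) - annual(CHATBOT_MONTHLY)
    ratio = tool_monthly / CHATBOT_MONTHLY
    print(f"${tool_monthly}/month tool: ${extra:,} more per year ({ratio:g}x the chatbot price)")

# $50/month tool: $360 more per year (2.5x the chatbot price)
# $2000/month tool: $23,760 more per year (100x the chatbot price)
```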

The author illustrates this by describing a process of using Ahrefs’ Brand Radar to identify top-cited articles, then leveraging Claude to extract structures for outlines and integrate personal insights. This conversational, stage-controlled approach is more cost-effective and flexible than relying on bundled writing tools.

Solution: The author advises investing resources in the quality of the information fed to the AI, rather than in the AI tool itself. This includes time and money spent on building robust SOT files and refining prompts. This strategy also enhances adaptability to evolving AI models and changing content requirements.

5. The Content Strategy Problem: A One-Size-Fits-All Approach

AI writing tools typically assume a uniform approach to content creation, often simplifying the process to keyword input and article generation. However, the author identifies two distinct tracks for content in modern marketing: searchable content and shareable content. Writing tools are ill-equipped to handle either effectively.

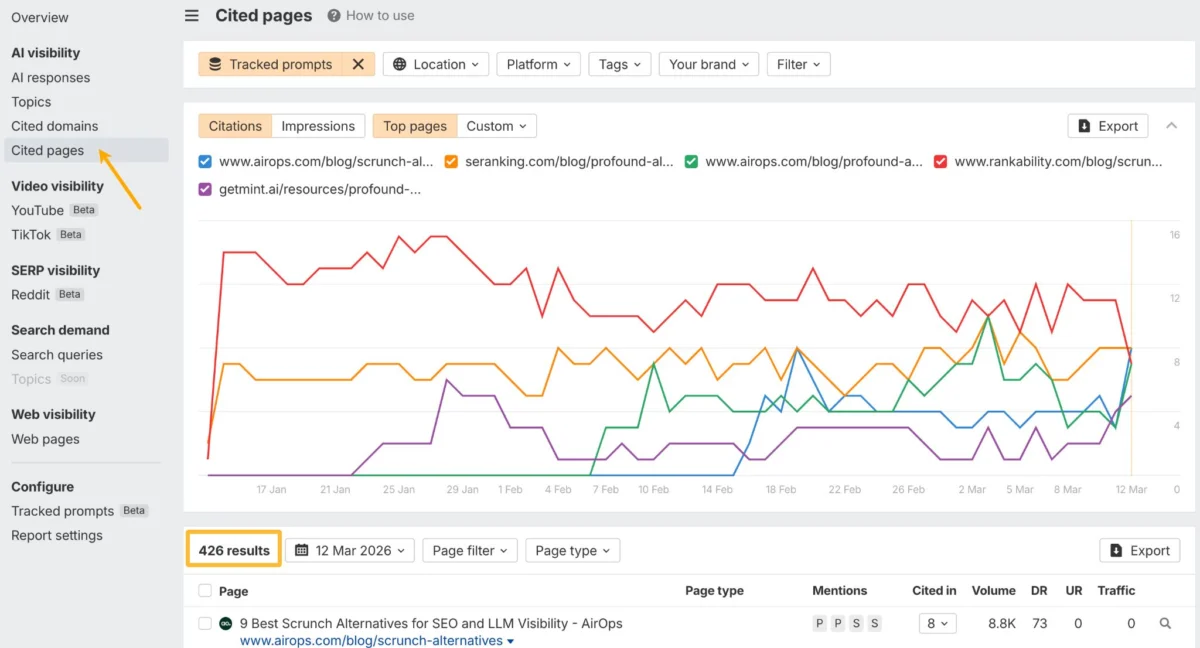

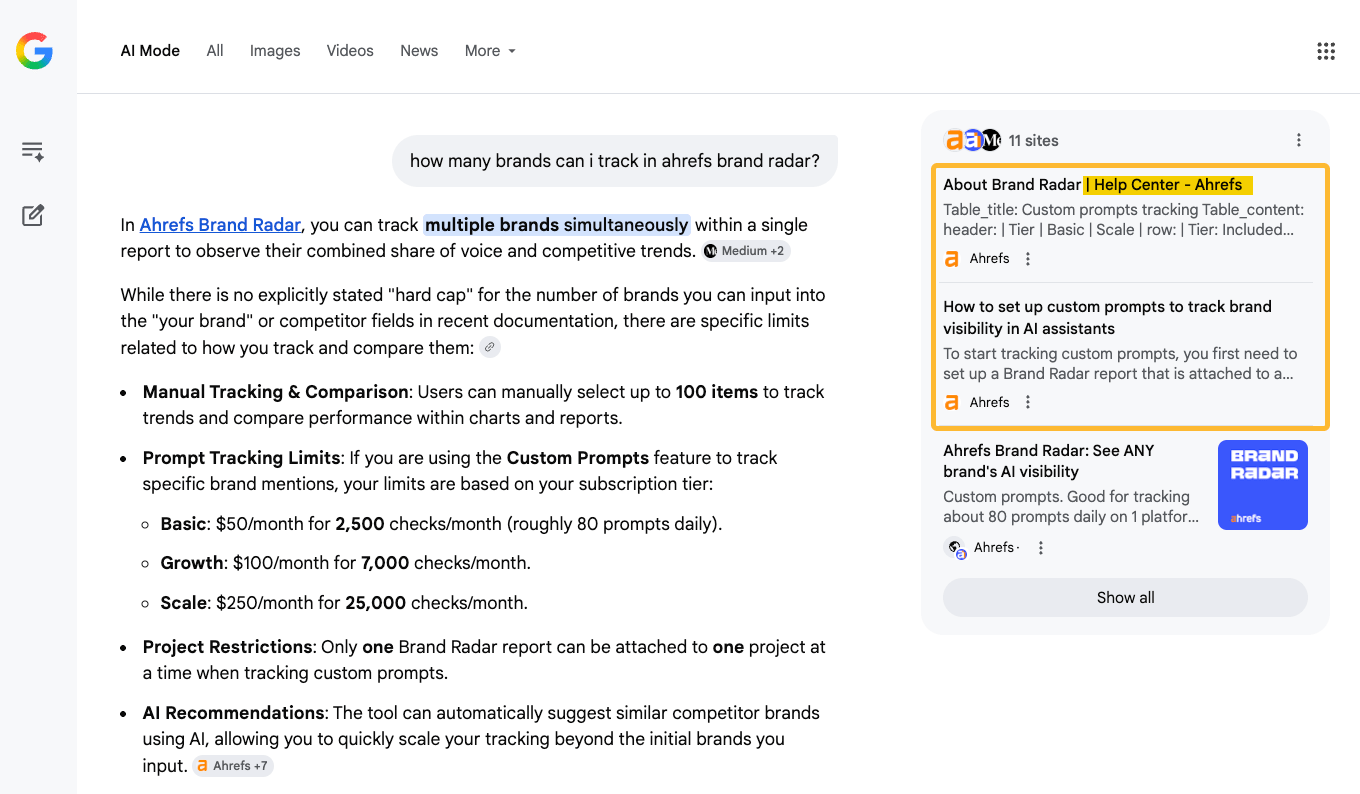

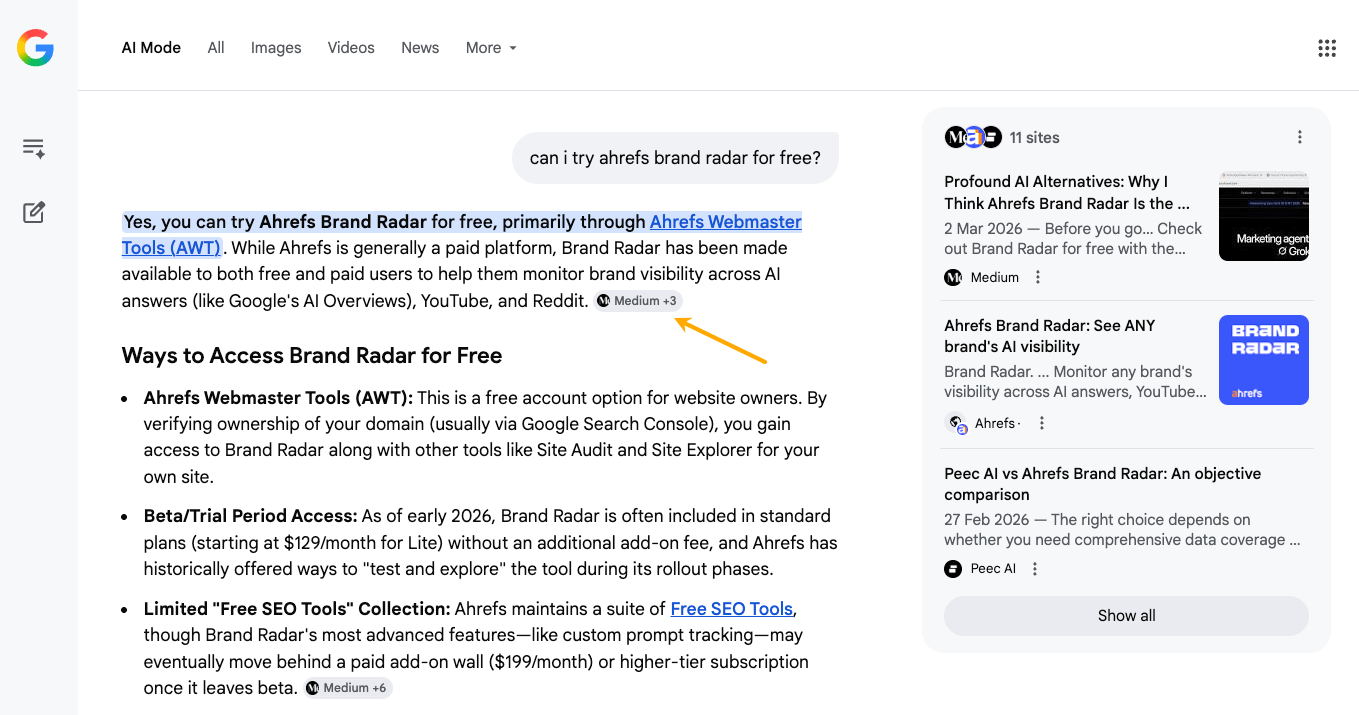

Searchable Content: This category includes product documentation, help articles, and comparison pages. These are crucial for grounding AI responses and preventing hallucinations. The author’s product documentation, for example, was directly cited by an AI assistant when queried about the capabilities of Ahrefs Brand Radar. Conversely, gaps in official documentation can lead to AI relying on less reliable sources or fabricating information.

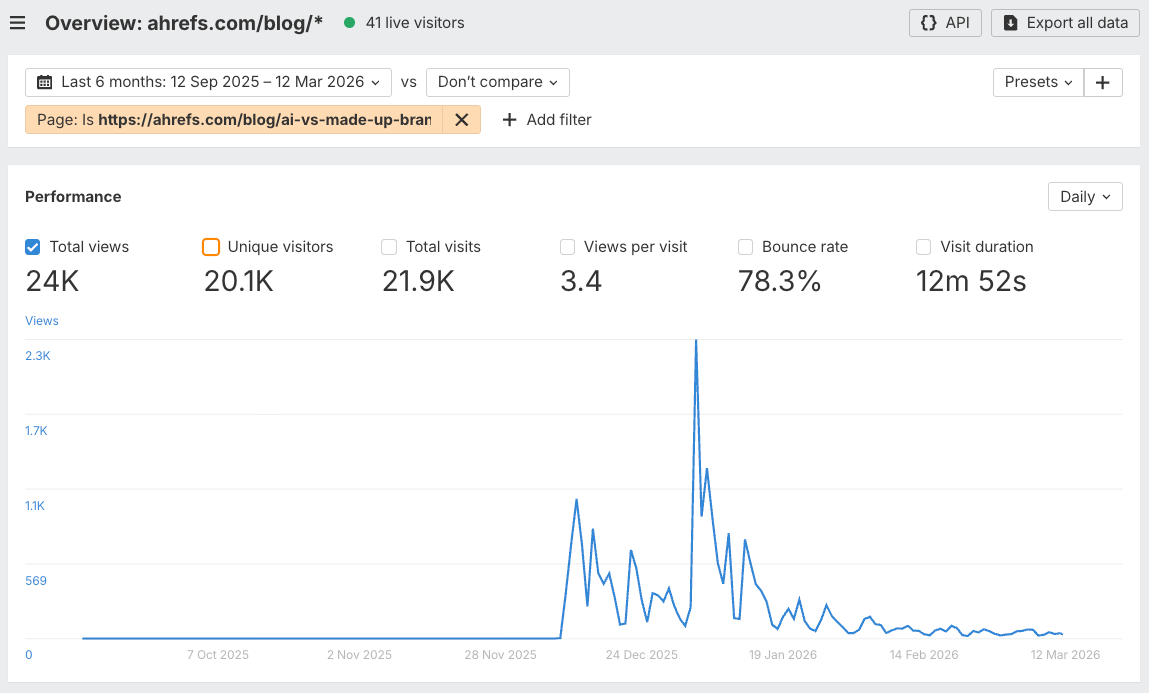

Shareable Content: This refers to human-first content stemming from personal experience and unique insights, which cannot be easily templated. The author’s "AI misinformation experiment," while not ranking for search, drove significant traffic and social engagement.

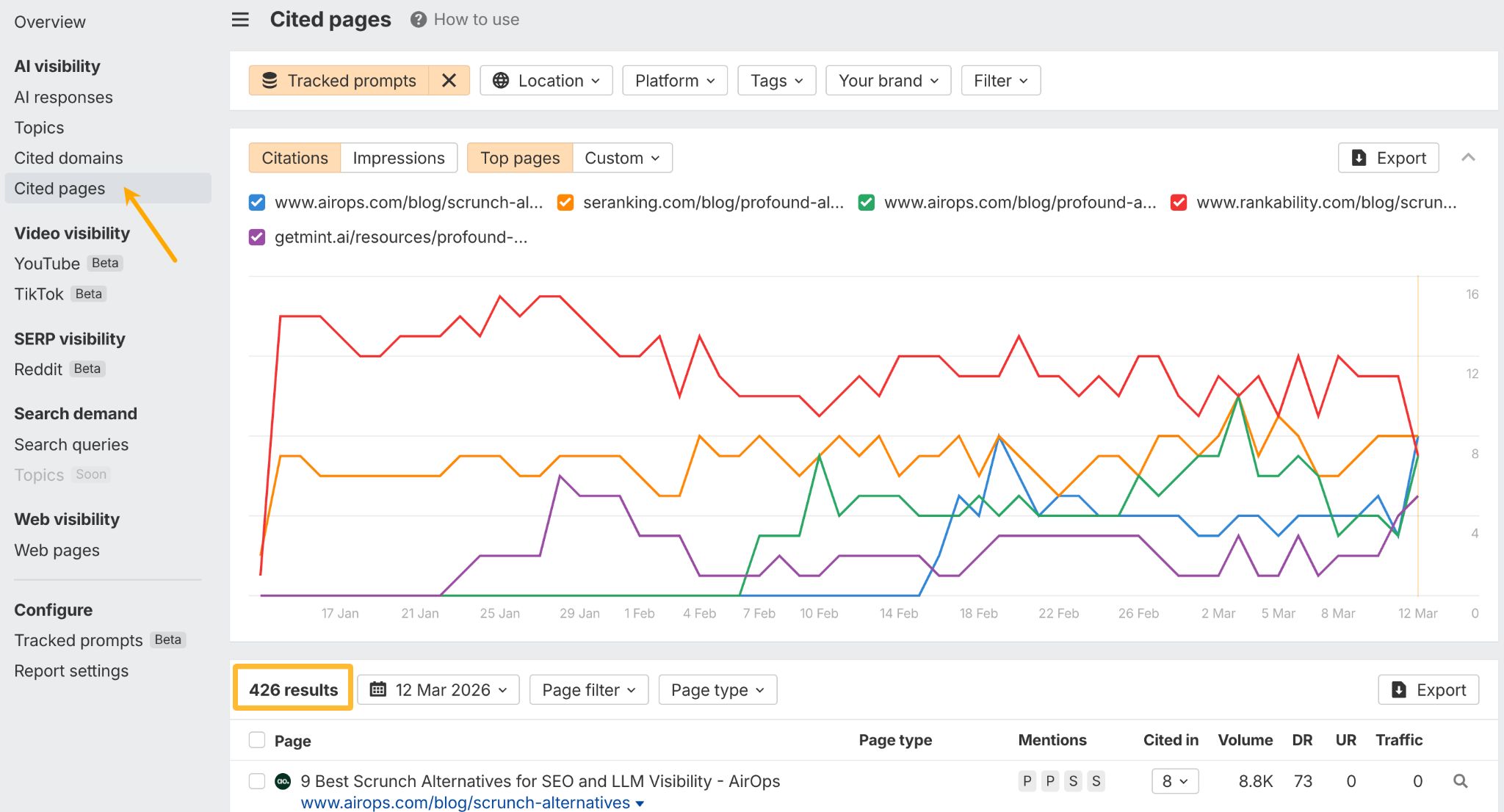

Solution: The author stresses the need for flexibility, arguing that AI chatbots are the most adaptable tools for both content types. For searchable content, a thorough audit of product documentation is essential to ensure it can serve as a reliable source for AI. Tools like Ahrefs Brand Radar can help identify content gaps at scale. For shareable content, developing an idea pipeline is key. This involves collecting ideas, facts, quotes, and other materials in a centralized system like Notion or a custom-built tool. Features like "example finders" and "idea generators" can significantly enhance this process. Furthermore, setting up AI agents to monitor web trends and identify content ideas automatically can help maintain a constant pulse on emerging topics. Tools like Firehose can stream real-time web data and integrate with AI agents via API for advanced content discovery.
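For the idea pipeline, a custom-built collection tool could start as something as small as the sketch below: a list of tagged notes plus a naive "example finder" that matches on text or tags. The `Note` structure, the `find_examples` function, and the sample notes are hypothetical and only illustrate the shape of such a system. The note text paraphrases points from this article.

```python
from dataclasses import dataclass, field


@dataclass
class Note:
    """One collected item: an idea, fact, quote, or other raw material."""
    kind: str
    text: str
    tags: set[str] = field(default_factory=set)


def find_examples(notes: list[Note], topic: str) -> list[Note]:
    """Naive example finder: match the topic against note text or tags."""
    needle = topic.lower()
    return [
        note for note in notes
        if needle in note.text.lower() or needle in {tag.lower() for tag in note.tags}
    ]


notes = [
    Note("idea", "Test how AI assistants handle misinformation about a brand", {"experiment"}),
    Note("fact", "Achieving the right voice took five to six revision rounds", {"editing"}),
    Note("quote", "Laundering errors through consensus", {"research", "experiment"}),
]

for note in find_examples(notes, "experiment"):
    print(note.kind, "-", note.text)
```

A keyword match like this is the simplest possible retrieval. A real system might hand the collected notes to an AI assistant and ask it to surface relevant examples instead.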

In conclusion, the most successful content creators in the AI era will likely be those who prioritize the quality of information and human judgment over the convenience of automated generation tools. The focus will shift towards becoming knowledge curators, leveraging AI as a powerful assistant rather than a sole creator. The author plans to release a detailed breakdown of their 40-article experiment in a subsequent piece.