The Atlassian Confluence engineering team has successfully completed a multi-year performance initiative that has fundamentally transformed the user experience of its collaborative platform. Over the past 24 months, the team has dedicated significant resources to optimizing page load speeds, ultimately achieving a 50% reduction in 90th percentile latency. A cornerstone of this improvement over the last year has been the implementation of React 18’s streaming capabilities for server-side rendered (SSR) applications. By shifting from traditional rendering methods to a streaming-based architecture, Confluence has realized a 40% improvement in First Contentful Paint (FCP), drastically reducing the time users wait to see content on their screens.

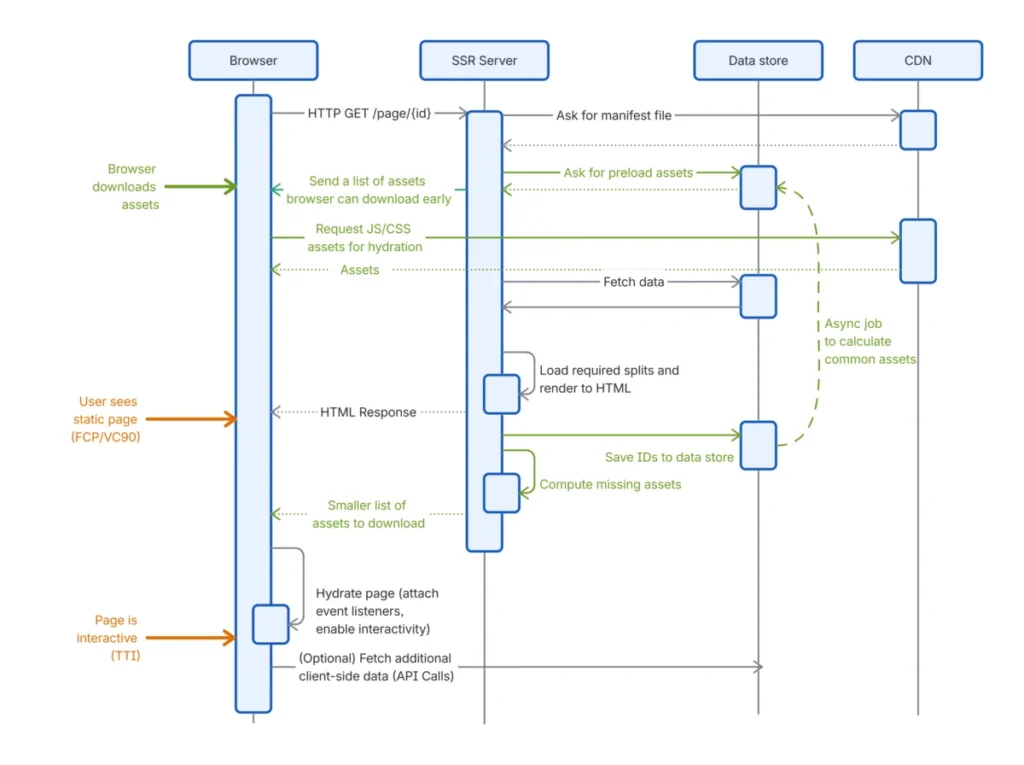

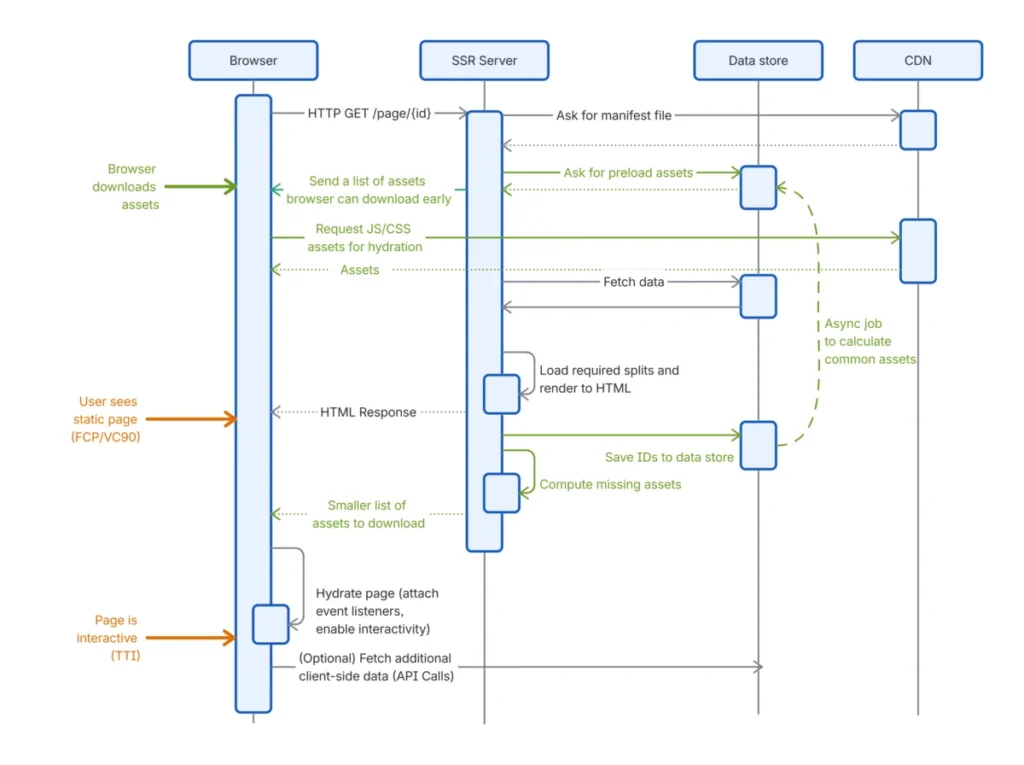

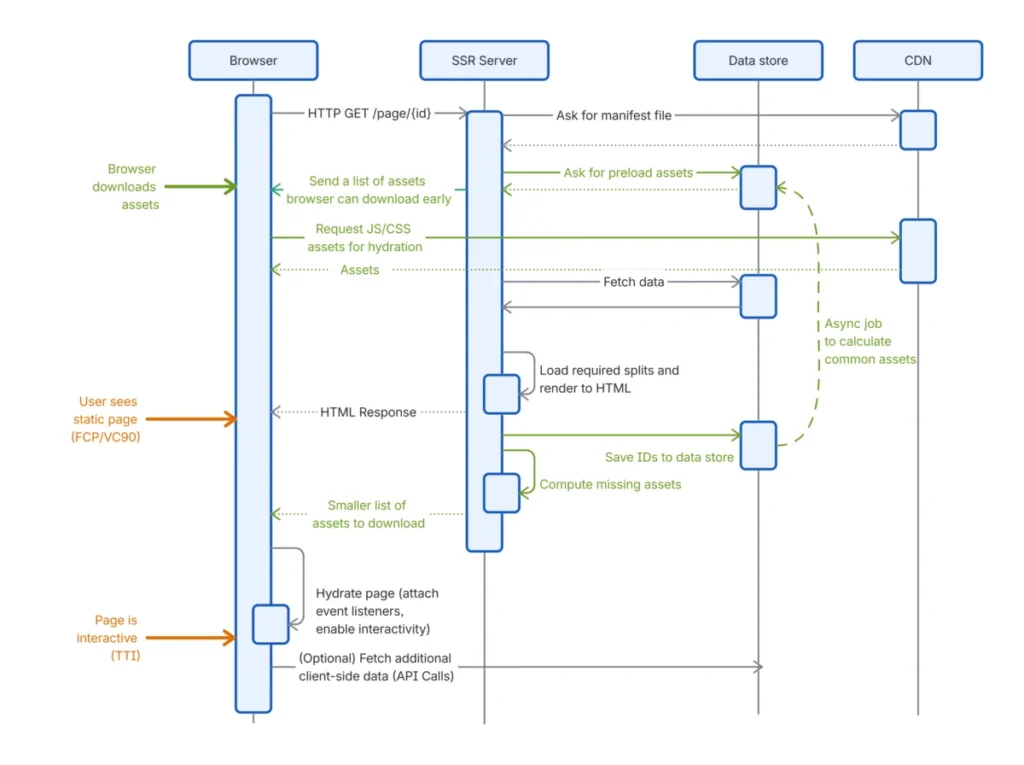

To quantify these improvements, Atlassian tracks a set of performance metrics that follow the user journey. Key among these are First Contentful Paint (FCP), which measures when the first content is rendered; Time to Visually Complete (TTVC), which tracks when the page appears fully loaded to the user; and Time to Interactive (TTI), which identifies the point at which the page becomes fully responsive to user input. By focusing on these indicators, the engineering team was able to pinpoint bottlenecks in the traditional single-page application (SPA) and SSR models.

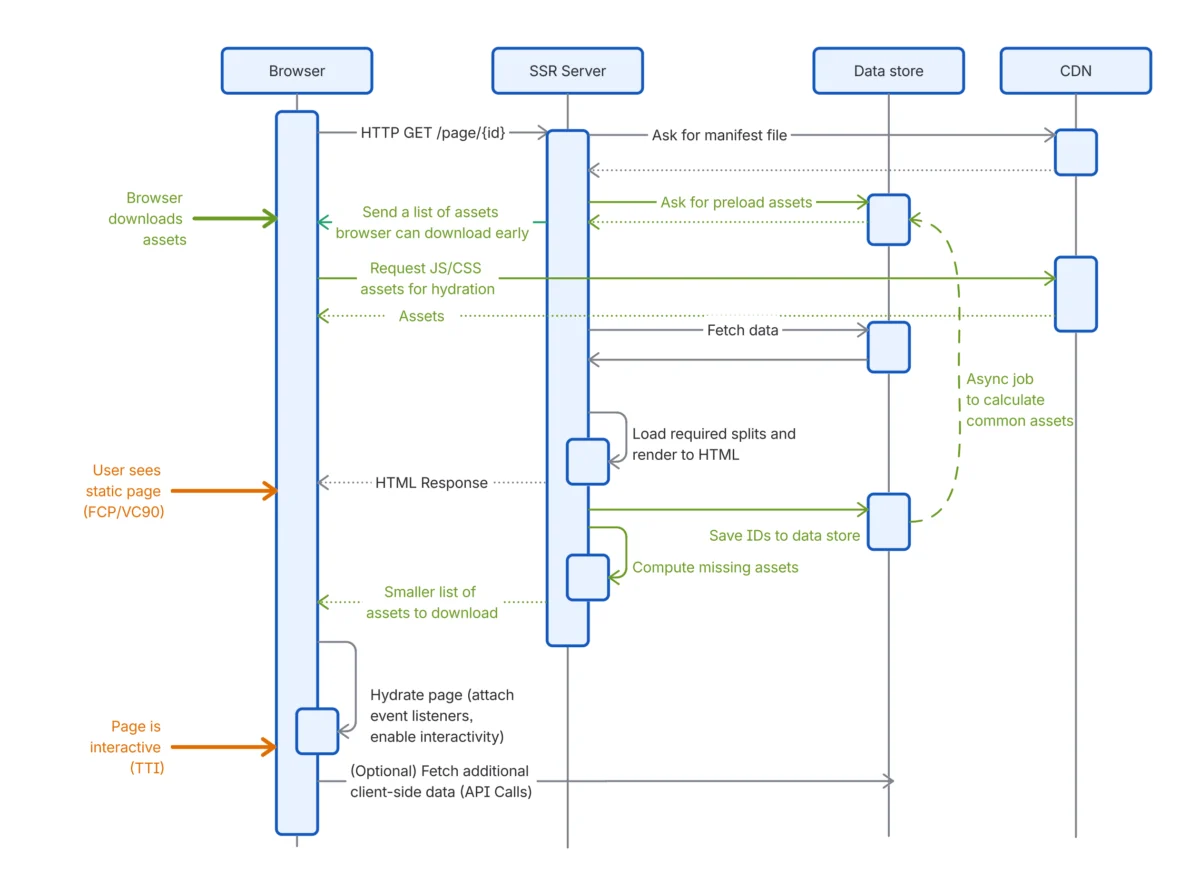

In a standard React-based SPA, the browser must first fetch and load static assets, such as JavaScript and CSS, and then request data from the server before any content becomes visible. This sequential process often results in a sluggish initial experience, particularly for users on high-latency networks or those using devices with limited CPU power. While Confluence had previously adopted SSR to mitigate these issues by executing client code on a server near the backend, the traditional SSR model still required the server to load all necessary data and render the full markup before sending any response to the client. This "all-or-nothing" approach created a delay in the initial response time.

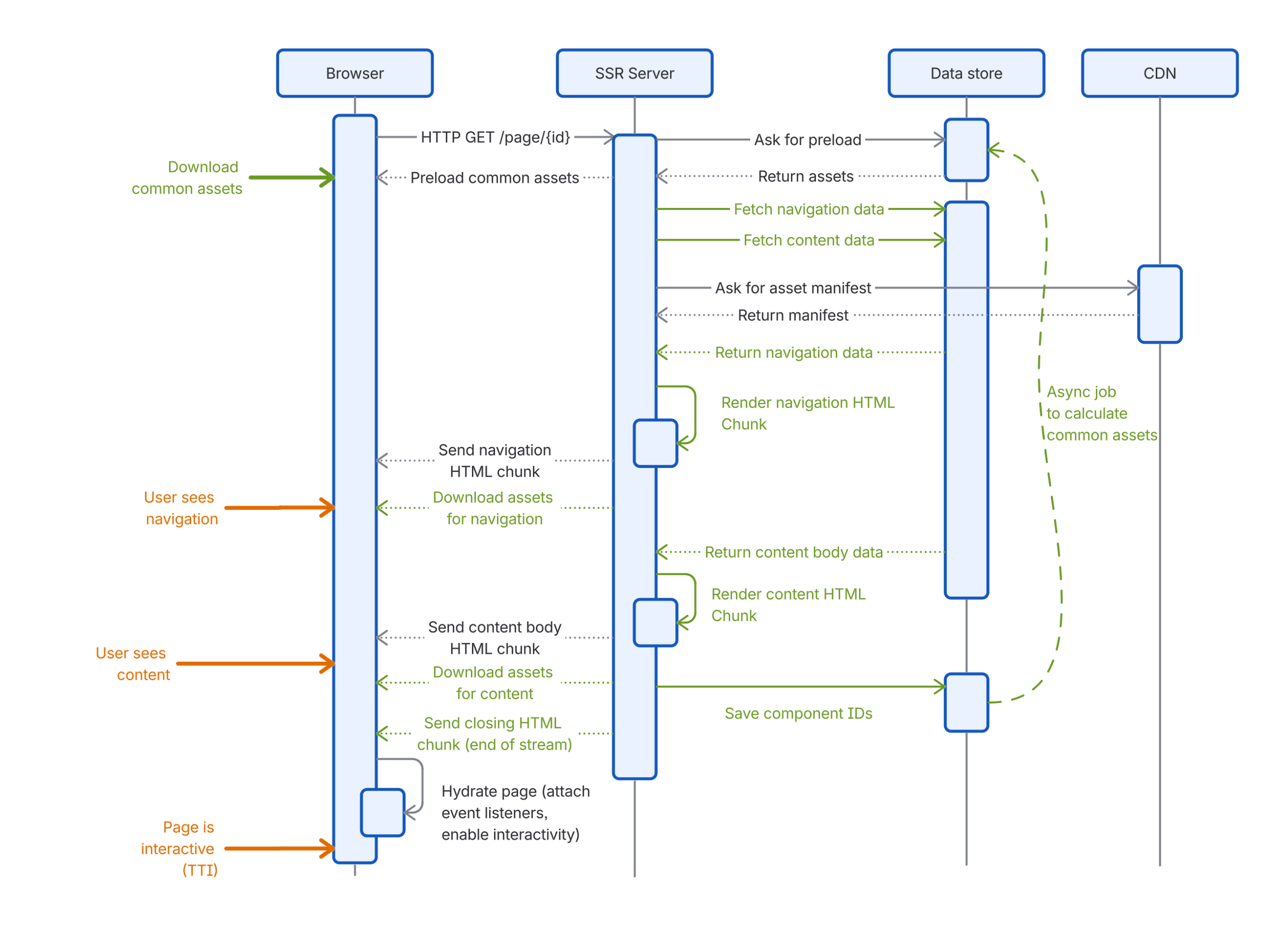

The release of React 18 introduced a paradigm shift with the ability to emit parts of the page markup as they are generated. This feature, known as streaming SSR, allows the browser to begin rendering fragments of the page as soon as they arrive, rather than waiting for the entire document. This enables the browser to start fetching static assets earlier in the lifecycle and allows the page to become interactive in stages.

A critical component of this transition was the adoption of React Hydration. In early iterations of SSR, client-side applications would often discard the server-rendered markup and replace it with a fresh frontend-rendered version. This process was not only inefficient but also caused visual "jank" or flickering as DOM nodes were destroyed and recreated. Modern React hydration allows the frontend to reuse the existing server-rendered HTML. During hydration, React traverses the component tree, ensuring the existing markup matches the intended state, and then attaches event handlers and side effects. This reuse of nodes significantly accelerates both TTVC and TTI, provided the markup remains identical between the server and the client.

To facilitate this, the Confluence team utilized Suspense boundaries. By wrapping component trees in `<Suspense>` elements, developers can signal to React that certain parts of the page have pending dependencies. When the server encounters a suspense boundary, it can opt to send a loading state to the client immediately while continuing to process the heavy data requirements of the actual component in the background. React 18’s `renderToPipeableStream` and `renderToReadableStream` functions enable the server to stream these completed chunks to the client as they become ready.

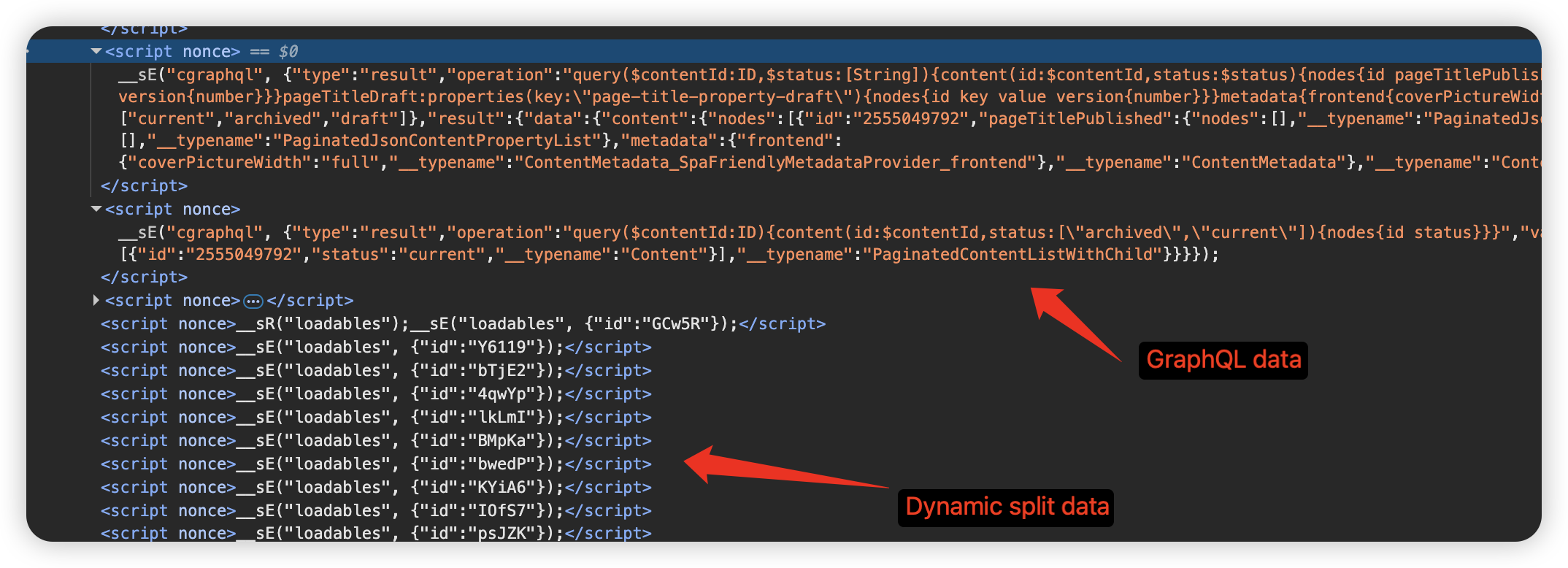

The technical implementation involves the use of special marker comments, such as `<!--$?-->` and `<!--/$-->`, which denote pending boundaries. Once a boundary is resolved on the server, the markup is sent to the client along with a small snippet of inline JavaScript that replaces the placeholder with the final content. This ensures a seamless visual transition for the user.
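The placeholder swap described above can be modeled at the string level. This is a simplified sketch, not React's actual mechanism: the real inline script operates on DOM nodes in the browser, and the boundary id `B:0`, the `<template>` placeholder, the `<!--$-->` "completed" marker, and both function names are assumptions made for illustration. It also assumes the fallback markup contains no nested boundaries.

```typescript
// Simplified string-level model of completing a pending Suspense boundary.
// Assumption: ids, the <template> placeholder, and helper names are illustrative.
const PENDING_OPEN = "<!--$?-->";
const COMPLETED_OPEN = "<!--$-->";
const CLOSE = "<!--/$-->";

/** Markup the server can send right away for a boundary whose data is pending. */
function renderPendingBoundary(id: string, fallbackHtml: string): string {
  return `${PENDING_OPEN}<template id="${id}"></template>${fallbackHtml}${CLOSE}`;
}

/** Replace one boundary's fallback with its final content once it resolves. */
function completeBoundary(html: string, id: string, finalHtml: string): string {
  const placeholder = `${PENDING_OPEN}<template id="${id}"></template>`;
  const start = html.indexOf(placeholder);
  if (start === -1) return html; // unknown id or boundary already completed
  const end = html.indexOf(CLOSE, start + placeholder.length);
  if (end === -1) return html; // malformed markup: leave untouched
  // Keep everything before the boundary, swap in the final content,
  // and keep the closing marker plus everything after it.
  return html.slice(0, start) + COMPLETED_OPEN + finalHtml + html.slice(end);
}
```

In this model the shell, including the fallback, can be flushed immediately, and `completeBoundary` stands in for the work the inline script does once the late chunk arrives.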

Beyond markup, the engineering team had to solve the challenge of streaming data. To hydrate successfully, the client-side application requires access to the exact state used during the server-side render. Confluence developed custom data streaming abstractions using Node.js transform streams. These transforms allow the server to inject arbitrary data into the HTML stream before the associated markup is emitted. This sequencing, with data placed before the markup, is vital. If the data is not available when hydration is triggered, a state mismatch occurs, leading to hydration errors and forced client-side re-renders that negate the performance gains.
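The ordering rule (data first, then the markup that depends on it) can be sketched as a pure function. This is an illustration of the sequencing only, not Confluence's abstraction: the `RenderedChunk` shape, the `window.__SSR_DATA__` global, and the function names are hypothetical, and a real implementation would apply the same logic inside a Node.js transform stream rather than over an array.

```typescript
// Hypothetical unit of server output: markup plus the state it was rendered from.
interface RenderedChunk {
  markup: string;
  data?: { [key: string]: unknown }; // state the client needs to hydrate this markup
}

/** Escape "<" so serialized JSON cannot terminate the surrounding <script> tag. */
function serializeForScript(value: unknown): string {
  return JSON.stringify(value).replace(/</g, "\\u003c");
}

/** Emit each chunk's data script immediately before the markup that depends on it. */
function withDataBeforeMarkup(chunks: RenderedChunk[]): string[] {
  const out: string[] = [];
  for (const chunk of chunks) {
    if (chunk.data !== undefined) {
      // window.__SSR_DATA__ is an assumed global name, not a React or Confluence API.
      out.push(
        "<script>window.__SSR_DATA__=Object.assign(window.__SSR_DATA__||{}," +
          serializeForScript(chunk.data) +
          ")</script>"
      );
    }
    out.push(chunk.markup);
  }
  return out;
}
```

Because the script element precedes the markup in the stream, the browser has already executed it by the time hydration reaches the corresponding nodes.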

The team also addressed the inherent delays in asset loading. In a typical SSR environment, the browser does not know which JavaScript bundles are required for a specific page until the server has finished rendering. This often results in a late start for asset downloads. To optimize this, Atlassian implemented a predictive feedback loop. By analyzing previous render results, the system can predict which JavaScript assets will likely be needed for a given page and instruct the browser to preload them. While hydration still waits for these assets, preloading ensures they are already in the browser cache, cutting the time to interaction nearly in half.
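One way to picture the feedback loop is a frequency count over past renders. The source does not describe how the prediction is made, so the following is an assumed heuristic: the `RenderRecord` shape, the `minShare` threshold, and both function names are hypothetical, and each record is assumed to list an asset at most once.

```typescript
// Hypothetical record of which script bundles one past render of a page type used.
interface RenderRecord {
  pageType: string;
  assets: string[];
}

/** Return assets that appeared in at least `minShare` of past renders of this page type. */
function predictAssets(history: RenderRecord[], pageType: string, minShare = 0.8): string[] {
  // Object.create(null) avoids collisions with inherited keys such as "constructor".
  const counts: { [asset: string]: number } = Object.create(null);
  let renders = 0;
  for (const record of history) {
    if (record.pageType !== pageType) continue;
    renders += 1;
    for (const asset of record.assets) {
      counts[asset] = (counts[asset] || 0) + 1;
    }
  }
  if (renders === 0) return []; // no history yet: predict nothing
  return Object.keys(counts)
    .filter((asset) => counts[asset] / renders >= minShare)
    .sort();
}

/** Build preload hints that can be sent at the very start of the HTML stream. */
function preloadTags(assets: string[]): string {
  return assets.map((href) => `<link rel="preload" as="script" href="${href}">`).join("");
}
```

A higher threshold preloads fewer bundles that turn out to be unused, while a lower one covers more pages at the cost of some wasted downloads.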

Furthermore, as the server renders the page, it continuously sends metadata embedded in the HTML stream. This metadata allows the browser to initialize data structures and download feature-specific assets, such as those required for charts or tables of contents, long before the entire page has finished rendering on the server.

However, the shift to streaming was not without significant technical hurdles. One major challenge involved the performance overhead of existing page transformations. Confluence historically used various transformations to mark content boundaries and denote script preloads, often relying on regular expressions (regex) run over the completed page body. In a streaming context, these transformations must be executed repeatedly over small chunks of data. The team found that traditional regex operations were too slow for this purpose. To resolve this, they replaced regex-based logic with a high-performance streaming string scanner and minimized the number of passes required over the data chunks.
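A chunk-aware scanner of the kind described might look like the sketch below. It is not Confluence's scanner: the class name and the choice to report absolute offsets are assumptions, and it handles only a single fixed marker. The key idea is to carry over just enough trailing text between chunks to catch a marker that straddles a chunk boundary, so each character is examined in a single pass.

```typescript
/** Locates a fixed marker in chunked input, including markers split across chunks. */
class StreamingScanner {
  private readonly marker: string;
  private carry = "";   // tail of earlier input that could still begin a marker
  private consumed = 0; // characters of the overall stream that precede `carry`

  constructor(marker: string) {
    if (marker.length === 0) throw new Error("marker must be non-empty");
    this.marker = marker;
  }

  /** Feed one chunk; returns absolute stream offsets of markers completed by it. */
  push(chunk: string): number[] {
    const text = this.carry + chunk;
    const found: number[] = [];
    let from = 0;
    for (;;) {
      const at = text.indexOf(this.marker, from);
      if (at === -1) break;
      found.push(this.consumed + at);
      from = at + this.marker.length;
    }
    // Keep at most marker.length - 1 trailing characters: the longest prefix
    // of a marker that could be completed by the next chunk.
    const keepFrom = Math.max(from, text.length - (this.marker.length - 1));
    this.carry = text.slice(keepFrom);
    this.consumed += keepFrom;
    return found;
  }
}
```

Plain `indexOf` over a bounded window avoids re-running a regular expression over the accumulated body for every chunk.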

Another complex issue emerged regarding React 18’s hydration behavior. The team discovered that if a change to the React context occurred during the hydration of a suspense boundary, React would discard the work and re-render the entire component and its children. As more suspense boundaries were added to a page, this led to an exponential increase in rendering cycles, causing high CPU usage and delaying interactivity. This was further complicated by a state management library that failed to properly clean up event listeners, leading to a memory leak. While this behavior is slated for a fix in React 19, the Confluence team mitigated it in the interim by using the `unstable_scheduleHydration` feature to manually prioritize the hydration of suspense boundaries.

Network-level buffering also presented an obstacle. Many intermediate servers and proxies, including Nginx, are configured to buffer responses to improve compression efficiency. For a streaming application, this buffering can be detrimental, as it holds back small chunks of HTML until a certain size threshold is met, effectively killing the "stream." The team addressed this by implementing the `X-Accel-Buffering: no` header to disable proxy buffering and modifying the server logic to manually flush the compression middleware as soon as React finishes a chunk.
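The two measures can be sketched against a minimal response interface. The `StreamingResponse` type and both function names are hypothetical; the optional `flush` method reflects the assumption that the compression layer exposes one on the response, as some Node.js compression middleware does. Only the `X-Accel-Buffering: no` header comes from the text above.

```typescript
// Minimal structural type for the response object (an assumption for this sketch).
// `flush` is optional because it is typically added by a compression layer.
interface StreamingResponse {
  setHeader(name: string, value: string): void;
  write(chunk: string): boolean;
  flush?: () => void;
}

/** Set headers once, before the first chunk is written. */
function prepareStreamingResponse(res: StreamingResponse): void {
  res.setHeader("Content-Type", "text/html; charset=utf-8");
  // Ask Nginx not to buffer this response so chunks pass through as written.
  res.setHeader("X-Accel-Buffering", "no");
}

/** Write one finished chunk and push it past the compressor's internal buffer. */
function writeAndFlush(res: StreamingResponse, html: string): void {
  res.write(html);
  if (typeof res.flush === "function") {
    res.flush();
  }
}
```

Calling `writeAndFlush` once per completed chunk trades a little compression efficiency for earlier delivery, which is the trade-off the paragraph above describes.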

To ensure these changes delivered tangible benefits without introducing regressions, Atlassian employed a rigorous rollout strategy. This included extensive A/B testing and the monitoring of performance across p50, p90, and p99 percentiles. By using a conservative, multi-week rollout, the team was able to validate improvements in FCP, TTVC, and TTI while ensuring hydration success rates remained high.
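For reference, the percentile summaries mentioned above can be computed from raw timing samples as in the sketch below. It uses the nearest-rank definition, which is one of several common conventions; the source does not say which one Atlassian uses, and the function names are illustrative.

```typescript
/** Nearest-rank percentile: the smallest sample with at least p% of samples at or below it. */
function percentile(samples: number[], p: number): number {
  if (samples.length === 0) throw new Error("no samples");
  if (p <= 0 || p > 100) throw new Error("p must be in (0, 100]");
  const sorted = samples.slice().sort((a, b) => a - b);
  // Multiply before dividing so whole-number inputs stay exact.
  const rank = Math.ceil((p * sorted.length) / 100);
  return sorted[rank - 1];
}

/** Summarize one metric (for example FCP in milliseconds) for one experiment arm. */
function summarize(samples: number[]): { p50: number; p90: number; p99: number } {
  return {
    p50: percentile(samples, 50),
    p90: percentile(samples, 90),
    p99: percentile(samples, 99),
  };
}
```

Comparing `summarize` output for the control and streaming arms at each percentile shows whether a change helps typical users, slow-tail users, or both.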

The integration of React 18 streaming SSR marks a significant milestone in Confluence’s performance journey. By breaking down the monolithic page load process into smaller, manageable streams of data and markup, Atlassian has created a faster, more responsive environment for its global user base. The engineering team plans to continue building on these foundations, exploring further optimizations in page interactivity and load efficiency to maintain Confluence’s position as a high-performance collaboration tool.