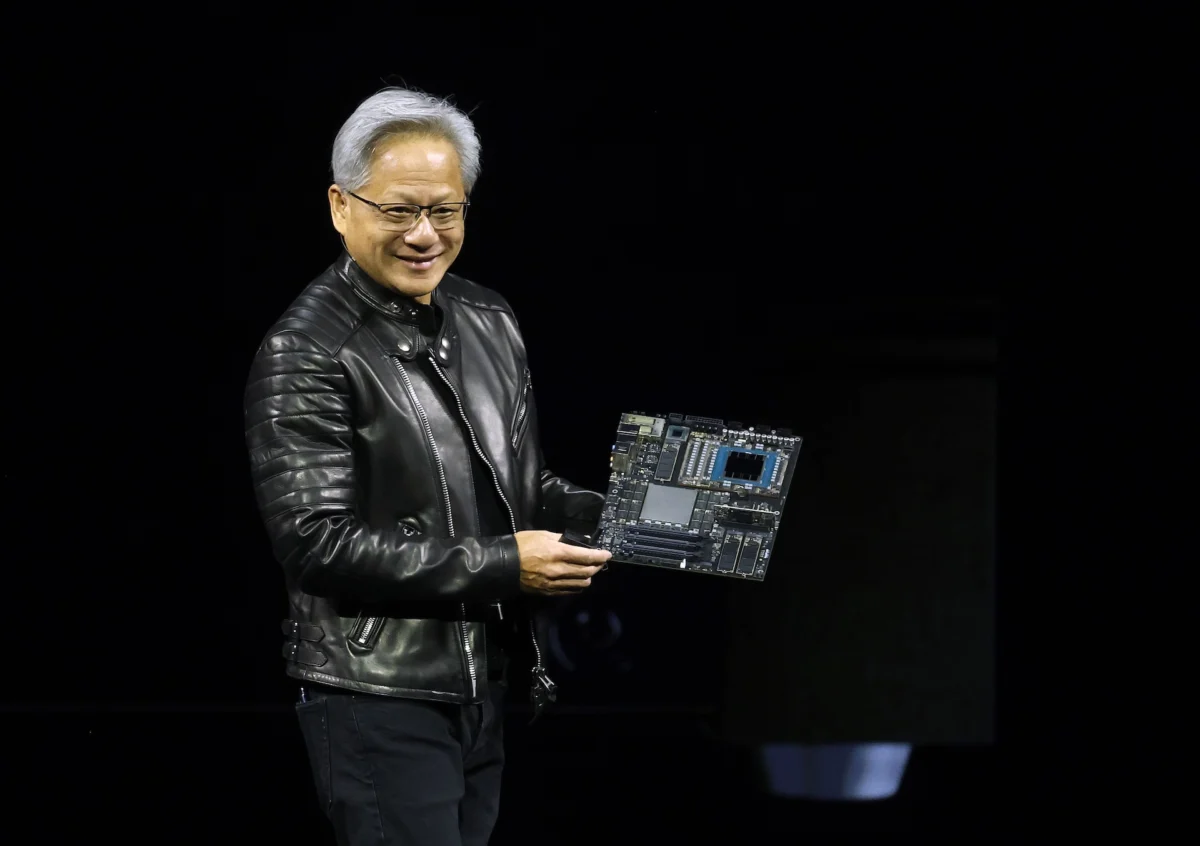

Nvidia opened its annual GTC developer conference in San Jose, California, on Monday, March 18, 2024. The event began with a keynote address from CEO Jensen Huang, scheduled for 11 a.m. PT / 2 p.m. ET. The keynote is widely considered the highlight of the conference, setting the tone for Nvidia’s strategic direction and previewing the technology announcements to come.

GTC, which stands for GPU Technology Conference, is Nvidia’s flagship annual event, drawing developers, researchers, and industry leaders from around the globe. This year’s conference runs from March 18 to March 21, with sessions on accelerated computing, artificial intelligence, and related technologies. Nvidia has historically used GTC to unveil new products, announce strategic partnerships, and lay out its vision for the future of computing. Huang’s keynote is expected to focus on Nvidia’s expanding role in shaping the future of computing and artificial intelligence. The two-hour address can be attended in person at the SAP Center in San Jose or watched via livestream on the event’s official website.

The broader multi-day agenda for GTC 2024 explores the next frontiers of AI across a range of industries. These include healthcare, where AI is being applied to drug discovery and diagnostics; robotics, with advances in automation and intelligent machines; and autonomous vehicles, where AI underpins self-driving technology and intelligent transportation systems. The program includes sessions, workshops, and demonstrations covering both the practical applications of AI and its theoretical advances.

The most eagerly awaited announcements span both software and hardware. On the software side, speculation is rife among industry observers that Nvidia will release an open-source platform for enterprise AI agents. The rumored platform, reportedly dubbed "NemoClaw" and first reported by Wired, would give businesses a structured, scalable way to build and deploy AI agents, software that can autonomously carry out multistep tasks across enterprise functions. Such a release would position Nvidia to compete with, or at least mirror, similar offerings from other prominent technology companies, notably OpenAI, which has also been expanding its enterprise-focused AI agent products. An open-source framework could also draw in a wider developer community, potentially speeding adoption within the enterprise AI ecosystem and reinforcing Nvidia’s position as a foundational provider in the AI stack.

On the hardware side, expectations are high for the unveiling of a new chip engineered to accelerate AI inference. As reported by The Wall Street Journal, the chip could shake up the computing market by addressing one of the critical bottlenecks in scaling AI applications.

The significance lies in the difference between AI training and AI inference. Training is the initial, compute-intensive phase in which a model learns from vast datasets and refines its parameters. It demands immense computational power, an area where Nvidia’s GPUs have long been dominant, with an estimated 80% market share. Inference is the subsequent process in which a trained model applies what it has learned to generate responses, make predictions, or render decisions in real time. Although inference is less computationally demanding than training, making it faster and cheaper is widely seen as one of the last significant bottlenecks to scaling AI applications broadly across industries and use cases.

The rumored chip represents Nvidia’s latest and perhaps most aggressive bid not only to maintain its stronghold in the training market but also to assert dominance in the rapidly growing inference market. That segment is increasingly competitive: Google and Amazon are investing heavily in their own custom chips, Tensor Processing Units (TPUs) and AWS Inferentia, respectively, alongside startups and established semiconductor firms. A dedicated inference chip would underscore Nvidia’s ambition to provide end-to-end solutions for the entire AI lifecycle, from research and development to deployment and real-world application.

Beyond these product and platform rumors, attendees and virtual participants can expect a range of partnership announcements illustrating how Nvidia’s AI capabilities are being integrated into products across industries. There will also be numerous live demonstrations of Nvidia’s AI technologies in action.

Kevin Cook, a senior equity strategist at Zacks Investment Research, told TechCrunch that attendees should also watch for any updates on Nvidia’s relationship with Groq, an AI chip challenger known for an architecture designed for extremely fast inference. Interest in the tie-up stems from a report late last year that Nvidia had paid $20 billion to license Groq’s technology. The exact nature and terms of the rumored deal remain a subject of speculation, but the report also suggested that key figures from Groq, including founder Jonathan Ross and president Sunny Madra, along with other members of the Groq team, agreed to join Nvidia. Their presumed role would be to advance and scale the licensed technology within Nvidia’s ecosystem.

The potential collaboration is seen as highly strategic: it would give Nvidia access to Groq’s inference acceleration technology and its team, which could bolster Nvidia’s efforts in the inference market and provide an edge against other custom chip developers. The industry is keen to learn how the integration will take shape and what it means for Nvidia’s product roadmap, particularly its strategy for maintaining leadership in AI hardware. GTC is thus poised to shape expectations for Nvidia and the broader artificial intelligence industry in the coming years.