In a significant shift toward automated software quality assurance, engineering teams at Atlassian have developed and deployed a Mutation Coverage AI Assistant designed to overcome the traditional bottlenecks of mutation testing. The initiative began as manual experimentation in early 2025 and evolved, during a team innovation week in September 2025, into a tool integrated with the Rovo Dev CLI. The assistant now enables multiple development teams to reliably push project mutation coverage to and beyond the 80 percent threshold, a level often considered difficult to reach through manual testing alone.

Mutation testing is a technique in which small changes, or "mutants," are introduced into the source code to check whether the existing test suite detects and "kills" them. Mutation coverage is the share of mutants that the tests kill. While critical for ensuring test quality, improving mutation coverage at scale has historically been a painful and time-consuming process for developers. Analyzing mutation reports by hand to find surviving mutants, and then writing boilerplate tests for each gap, often takes hours of tedious work. Recognizing this as a repetitive, pattern-based task, Atlassian engineers identified mutation coverage as a "sweet spot" for Large Language Models (LLMs).
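
To make the idea of a surviving mutant concrete, here is a minimal sketch. It is written in Python for brevity, although the projects described use Java test frameworks, and the function names (`is_adult`, `weak_test`, `boundary_test`) are hypothetical and not taken from Atlassian's code. It shows a conditional-boundary mutant that one test fails to catch and another kills.

```python
def is_adult(age: int) -> bool:
    """Original code under test (hypothetical example)."""
    return age >= 18


def is_adult_mutant(age: int) -> bool:
    """Conditional-boundary mutant: '>=' has been changed to '>'."""
    return age > 18


def weak_test(fn) -> bool:
    """Checks only values far from the boundary."""
    return fn(30) is True and fn(5) is False


def boundary_test(fn) -> bool:
    """Adds a check at the boundary value itself."""
    return weak_test(fn) and fn(18) is True


def kills(test, original, mutant) -> bool:
    """A test kills a mutant if it passes on the original and fails on the mutant."""
    return test(original) and not test(mutant)


print(kills(weak_test, is_adult, is_adult_mutant))      # False: the mutant survives
print(kills(boundary_test, is_adult, is_adult_mutant))  # True: the mutant is killed
```

The surviving mutant in the first case is exactly the kind of gap a mutation report surfaces: the weak test passes, but it never exercises the boundary.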

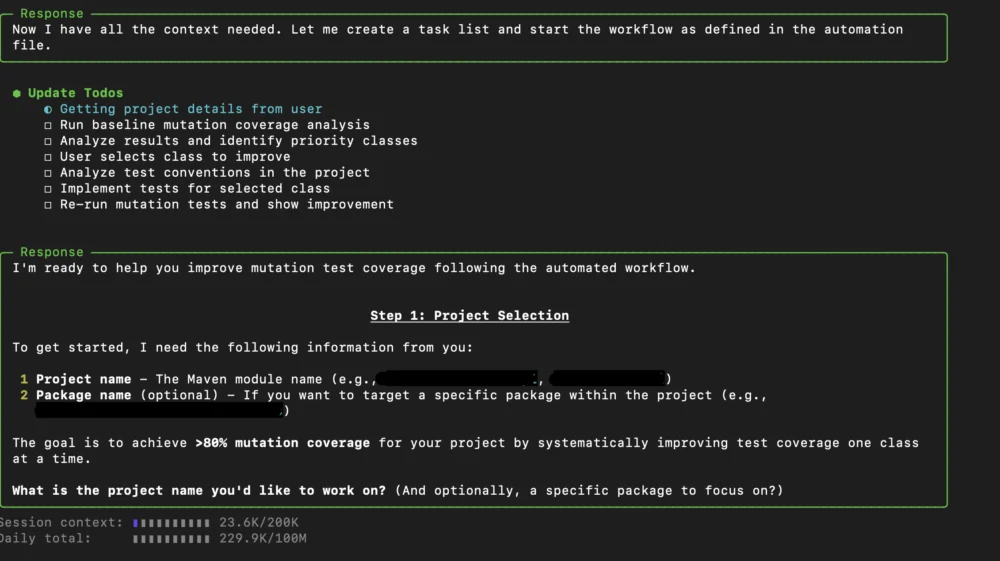

The Mutation Coverage AI Assistant functions by guiding an LLM through a complete end-to-end workflow rather than relying on isolated, manual prompts. Launched via the Rovo Dev CLI, the assistant operates as a "Dev-in-the-loop" copilot. Its primary functions include generating comprehensive mutation reports, recommending specific classes or packages that require coverage improvements, and identifying existing test patterns within a project. Once a target is identified, the AI writes the necessary test code to address the gaps and then auto-validates the results to ensure the new tests actually improve the coverage metrics.
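
The workflow described above can be sketched roughly as follows. This is an illustration under stated assumptions, not the assistant's actual implementation: the `ClassCoverage` type, the class names, and the `approve`, `write_tests`, and `rerun_report` callbacks are all hypothetical stand-ins for the developer's decision, the LLM's test generation, and the mutation report run.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class ClassCoverage:
    """Mutation results for one class (hypothetical report entry)."""
    name: str
    killed: int
    total: int

    @property
    def coverage(self) -> float:
        # Mutation coverage: killed mutants as a percentage of all mutants.
        return 100.0 * self.killed / self.total if self.total else 100.0


def recommend_targets(report: List[ClassCoverage], threshold: float = 80.0) -> List[ClassCoverage]:
    """Return the classes below the threshold, weakest first."""
    below = [c for c in report if c.coverage < threshold]
    return sorted(below, key=lambda c: c.coverage)


def guided_step(
    report: List[ClassCoverage],
    approve: Callable[[ClassCoverage], bool],
    write_tests: Callable[[ClassCoverage], None],
    rerun_report: Callable[[str], ClassCoverage],
    threshold: float = 80.0,
) -> Optional[ClassCoverage]:
    """One pass: recommend a target, ask the developer, write tests, validate."""
    targets = recommend_targets(report, threshold)
    if not targets:
        return None
    target = targets[0]
    if not approve(target):        # dev-in-the-loop: the developer can decline
        return None
    write_tests(target)            # placeholder for LLM test generation
    after = rerun_report(target.name)
    # Auto-validation: accept the result only if more mutants were killed.
    return after if after.killed > target.killed else None


report = [
    ClassCoverage("IssueService", 28, 50),
    ClassCoverage("UserMapper", 45, 50),
    ClassCoverage("SlaCalculator", 35, 50),
]
result = guided_step(
    report,
    approve=lambda c: True,
    write_tests=lambda c: None,
    rerun_report=lambda name: ClassCoverage(name, 42, 50),
)
print([c.name for c in recommend_targets(report)])  # ['IssueService', 'SlaCalculator']
print(result.coverage)                              # 84.0
```

Keeping validation as an explicit step means a batch of generated tests counts only if a rerun of the report shows more mutants killed.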

One of the defining characteristics of this AI assistant is its project-awareness. Unlike generic AI coding tools that might suggest irrelevant libraries, the Rovo-based assistant adapts to the specific environment of each project. It identifies the testing frameworks in use—such as JUnit 4, JUnit 5, Mockito, or AssertJ—and recognizes the project’s specific architectural patterns. This allows the AI to generate code that is consistent with the existing codebase, reducing the friction usually associated with AI-generated boilerplate.
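
One simple way such framework detection could work is to scan the import statements of existing test files. The sketch below is an assumption about a possible approach, not a description of how the Rovo-based assistant does it. The package prefixes are the standard ones for JUnit 4, JUnit 5, Mockito, and AssertJ; the function name `detect_frameworks` and the sample source are hypothetical.

```python
import re
from typing import Iterable, Set

# Checked in order, so the more specific JUnit 5 prefixes come before "org.junit.".
FRAMEWORK_PREFIXES = [
    ("org.junit.jupiter.", "JUnit 5"),
    ("org.junit.platform.", "JUnit 5"),
    ("org.junit.", "JUnit 4"),
    ("org.mockito.", "Mockito"),
    ("org.assertj.", "AssertJ"),
]

# Matches both "import x.y.Z;" and "import static x.y.Z.member;".
IMPORT_RE = re.compile(r"^\s*import\s+(?:static\s+)?([\w.]+)", re.MULTILINE)


def detect_frameworks(sources: Iterable[str]) -> Set[str]:
    """Return the test frameworks referenced by the given Java test sources."""
    found: Set[str] = set()
    for source in sources:
        for imported in IMPORT_RE.findall(source):
            for prefix, name in FRAMEWORK_PREFIXES:
                if imported.startswith(prefix):
                    found.add(name)
                    break  # first matching prefix wins
    return found


sample_test = """
import org.junit.jupiter.api.Test;
import static org.assertj.core.api.Assertions.assertThat;
import static org.mockito.Mockito.when;
"""
print(sorted(detect_frameworks([sample_test])))  # ['AssertJ', 'JUnit 5', 'Mockito']
```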

The effectiveness of this guided automation approach was demonstrated through a series of internal pilot programs across various Jira-related projects. In a comparative analysis, the team found that while manual prompts to LLMs were easy to start, they lacked a guided workflow, leading to unpredictable behavior and high time-to-completion. Similarly, standard AI plugin commands like "/tests" often generated code without awareness of the mutation reports, resulting in tests that failed to meaningfully improve coverage. The Mutation Coverage AI Assistant bridged this gap by maintaining a constant feedback loop between the mutation report and the test generation phase.

Data collected from the early adoption phase showed marked improvements in coverage across five key projects. Among them, "jira-project-a" rose from 56 percent to 80 percent mutation coverage, while "jira-project-b" jumped from 70 percent to 88 percent. The highest final figure was observed in "jira-project-c," which reached 96 percent coverage from an initial 83 percent. The assistant also proved its scalability during a major Jira migration project, where it was used across hundreds of classes to kill approximately 1,500 mutants in significantly less time than traditional manual methods.

Throughout the development and expansion of the assistant, the engineering teams identified several critical lessons regarding the integration of LLMs into the development lifecycle. A primary challenge involved the time required to generate mutation reports for large-scale projects. On projects with extensive class and package structures, generating a full report could take upwards of ten minutes, leading to developer frustration and reduced tool adoption. To mitigate this, the workflow was enhanced to support package-level mutation analysis. By narrowing the focus to specific segments of the code, the team reduced report generation time and allowed for more targeted, incremental improvements.
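
The package-level narrowing can be illustrated with a small sketch. It assumes mutant results from an earlier report are available as (class name, killed) pairs, which the article does not confirm; the package and class names are hypothetical. The code picks the weakest package and lists only the classes that a scoped run would need to analyze.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Mutant = Tuple[str, bool]  # (fully qualified class name, was the mutant killed?)


def package_of(class_name: str) -> str:
    """Return the package part of a fully qualified class name."""
    return class_name.rpartition(".")[0]


def coverage_by_package(mutants: List[Mutant]) -> Dict[str, float]:
    """Compute mutation coverage (percent of mutants killed) per package."""
    killed: Dict[str, int] = defaultdict(int)
    total: Dict[str, int] = defaultdict(int)
    for class_name, was_killed in mutants:
        pkg = package_of(class_name)
        total[pkg] += 1
        killed[pkg] += int(was_killed)
    return {pkg: 100.0 * killed[pkg] / total[pkg] for pkg in total}


def classes_in_package(mutants: List[Mutant], package: str) -> List[str]:
    """List the classes a package-scoped analysis would cover."""
    return sorted({name for name, _ in mutants if package_of(name) == package})


mutants = [
    ("com.example.sla.SlaCalculator", True),
    ("com.example.sla.SlaCalculator", False),
    ("com.example.sla.SlaPolicy", False),
    ("com.example.queue.QueueService", True),
    ("com.example.queue.QueueService", True),
    ("com.example.queue.QueueFilter", False),
]
by_pkg = coverage_by_package(mutants)
weakest = min(by_pkg, key=by_pkg.get)
print(weakest)                               # com.example.sla
print(classes_in_package(mutants, weakest))  # ['com.example.sla.SlaCalculator', 'com.example.sla.SlaPolicy']
```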

The team also addressed limitations inherent in LLM-generated code. Early versions of the assistant occasionally produced problematic output, such as incorrect imports, logic that ignored existing test utilities, or "hallucinated" methods that did not exist in the source code. To solve this, the engineering team tightened the workflow instructions, giving the AI explicit guidelines and constraints. The result was more reliable test generation and fewer manual corrections by the developers overseeing the process.

Another hurdle was the tendency of the AI to generate redundant or excessive tests when given generic instructions to "kill mutants." This "bloated test" problem not only increased the size of the codebase but also led to longer build times and higher maintenance costs. The solution involved implementing detailed, mutant-specific test-writing guidelines. By instructing the AI to focus on specific mutation types—such as conditional boundaries or mathematical operator changes—the assistant began producing more concise and effective test suites.
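
The team's fix was prompt guidelines, not a post-processing step. Still, the notion of a redundant test can be made precise with a short sketch: a test is redundant if every mutant it kills is already killed by tests that were kept. The greedy selection below is a swapped-in illustration, not Atlassian's method, and the test and mutant names are hypothetical.

```python
from typing import Dict, List, Set


def select_non_redundant(kills: Dict[str, Set[str]]) -> List[str]:
    """Greedily keep tests that kill at least one mutant not yet killed."""
    remaining: Set[str] = set().union(*kills.values())
    selected: List[str] = []
    while remaining:
        # Pick the test that kills the most still-surviving mutants.
        best = max(kills, key=lambda t: len(kills[t] & remaining))
        selected.append(best)
        remaining -= kills[best]
    return selected


kills = {
    "test_boundary_at_18": {"m1_conditional_boundary"},
    "test_far_from_boundary": set(),               # kills nothing
    "test_sum_with_nonzero": {"m2_math", "m3_math"},
    "test_sum_duplicate": {"m2_math"},             # adds nothing new
}
print(select_non_redundant(kills))
# ['test_sum_with_nonzero', 'test_boundary_at_18']
```

Two of the four tests are dropped without losing any killed mutant, which is the outcome the mutant-specific guidelines aim for at generation time.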

A key philosophical takeaway from the project was the necessity of the "Dev-in-the-loop" model. The Atlassian team deliberately chose not to pursue full autonomy for the AI assistant. They concluded that human judgment remains essential for high-level decisions, such as determining which classes are too critical to be left to AI or identifying when a class has reached its pragmatic coverage limit. For example, the team discovered that AI often struggled with "bloated classes" that possessed too many responsibilities. In such cases, the AI’s failure to improve coverage served as a signal to developers that the class required architectural refactoring rather than more tests.

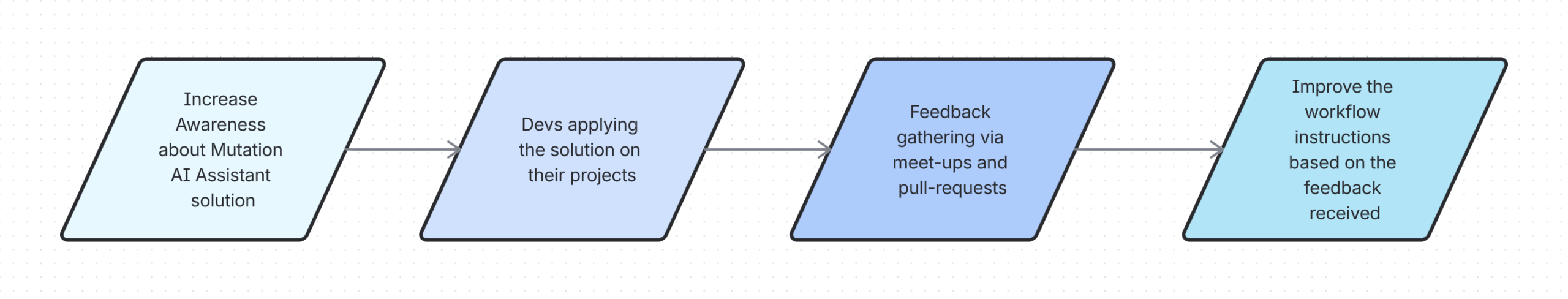

The development of the assistant was also a testament to cross-team collaboration. The project involved input from the Jira Service Management (JSM) Foundations team, the Jira Cloud migrations team, and the Rovo Dev team. This collaborative model allowed the prompt instructions to evolve as "living artifacts," constantly updated based on real-world edge cases encountered by different departments. As developers found new ways the AI could fail or succeed, they refined the central workflow, creating a system that improved with every use.

Despite the successes, challenges remain. The engineering team is still refining the assistant's ability to avoid redundant tests and is investigating how different LLMs affect the quality of the generated code. Developers are also advised to use the tool pragmatically: if an AI session becomes unproductive or gets stuck in a loop, restarting the session to reset the LLM's context is the recommended fix.

Looking forward, Atlassian intends to continue maturing the prompt instructions as adoption grows. While the current focus remains on guided automation with human oversight, the long-term goal is to explore more autonomous AI agent use cases. For now, the Mutation Coverage AI Assistant stands as a robust proof of concept for how AI can handle the "heavy lifting" of software quality, allowing developers to focus on complex architectural challenges while maintaining high standards for code reliability and test integrity.

The success of this initiative is credited to the collaborative efforts of several key engineers and teams, including the JSM Foundations and Jira Cloud migrations teams, who transformed a small innovation week experiment into a standard-setting tool for the organization's development ecosystem.