Atlassian has released new data from a year-long study of user behavior and performance metrics, detailing the measurable impact of its Rovo AI capabilities within the Jira platform. After a twelve-month observation period, the company’s internal analytics team reported that users who integrated Rovo’s artificial intelligence into their daily workflows began tasks 30% faster and gained the equivalent of an additional day of productivity every month. The findings arrive as enterprise organizations shift their focus from the theoretical potential of generative AI to measurable returns on investment in workplace efficiency.

Rovo, Atlassian’s specialized AI application, currently serves a global user base of over five million people. While it operates across the entire Atlassian suite—including Confluence and Trello—its application within Jira is specifically designed to streamline the complexities of project management and software development. In the Jira environment, Rovo functions as a multi-faceted agent capable of creating work items from disparate data sources, building complex automations through natural language processing, providing instant context to existing tickets, and decomposing large, monolithic projects into manageable sub-tasks.

The integration aims to solve a perennial problem in knowledge work: the "cold start" problem. By leveraging AI to synthesize information and suggest initial structures for work, Rovo assists users in overcoming the inertia often associated with high-complexity tasks. According to Atlassian’s report, the primary goal of the study was to determine if these capabilities translated into a statistically significant improvement in the speed and volume of work delivered by teams.

Atlassian’s researchers acknowledged the inherent difficulty in defining and measuring "productivity" within a tool as versatile as Jira. Jira is utilized to track the entire Software Development Life Cycle (SDLC), from initial ideation and planning to deployment and long-term maintenance. Because the platform supports a vast array of workflows, a simple count of "tickets closed" is often insufficient to capture the nuances of professional output.

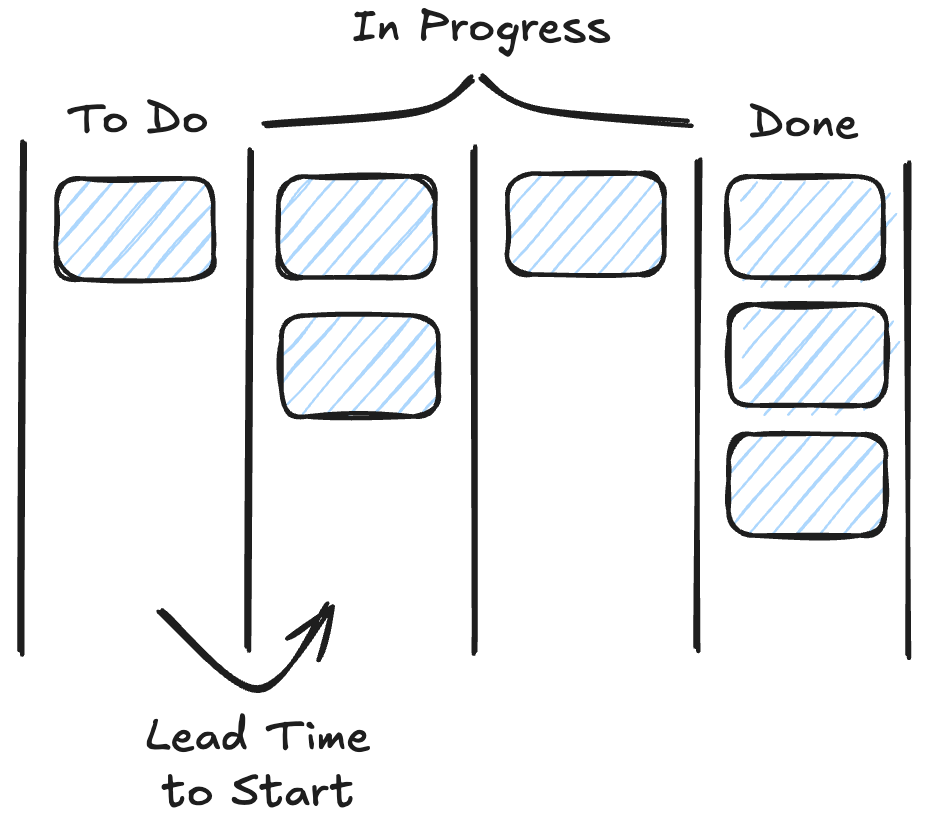

To achieve a rigorous analysis, the researchers sought to isolate metrics that were least susceptible to "noise"—external factors such as changing team sizes, seasonal fluctuations, or varying project difficulties. They ultimately identified a critical transition point in the lifecycle of a work item: the move from a "To Do" status to an "In Progress" status.

In the Jira architecture, boards are organized into status categories. While a team might have dozens of custom statuses, they are all mapped to three primary categories: "To Do," "In Progress," and "Done." By focusing on the "Lead Time to Start"—defined as the duration between the creation of a work item and its first transition into the "In Progress" category—the team could measure how effectively AI helps users move from planning to execution. To ensure data integrity, the study only measured the very first time an item entered the "In Progress" column, ignoring any subsequent moves back to the backlog to avoid skewing the results with recycled tasks.
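The "Lead Time to Start" definition above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `STATUS_CATEGORY` mapping, the custom status names, and the `lead_time_to_start` helper are hypothetical and do not come from Atlassian's report or the Jira API.

```python
from datetime import datetime
from typing import List, Optional, Tuple

# Hypothetical mapping from a team's custom statuses to the three status categories.
STATUS_CATEGORY = {
    "Backlog": "To Do",
    "Selected for Development": "To Do",
    "Coding": "In Progress",
    "In Review": "In Progress",
    "Released": "Done",
}


def lead_time_to_start(
    created: datetime, transitions: List[Tuple[datetime, str]]
) -> Optional[float]:
    """Days between creation and the FIRST move into an "In Progress" status.

    Later moves back to the backlog (and any re-starts) are ignored.
    Returns None if the item never entered "In Progress".
    """
    for moved_at, status in sorted(transitions):
        if STATUS_CATEGORY.get(status) == "In Progress":
            return (moved_at - created).total_seconds() / 86400
    return None


created = datetime(2024, 1, 1)
history = [
    (datetime(2024, 1, 2), "Backlog"),
    (datetime(2024, 1, 4), "Coding"),     # first start: counted
    (datetime(2024, 1, 5), "Backlog"),    # moved back: ignored
    (datetime(2024, 1, 9), "In Review"),  # re-start: ignored
]
print(lead_time_to_start(created, history))
```

Only the first qualifying transition is counted, which mirrors the study's choice to ignore recycled tasks.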

The study utilized a quasi-experimental design to compare two distinct cohorts: a "test" group consisting of users who adopted Rovo’s AI capabilities in Jira, and a "control" group of users who did not. Unlike a traditional randomized controlled trial, a quasi-experiment requires careful filtering to ensure a fair comparison between groups that were not randomly assigned at the outset.

To maintain a high level of statistical rigor, Atlassian applied several filters to both cohorts. Participants were required to be "heavy users" of Jira, defined as having performed at least 200 actions within the platform over a 90-day period. Furthermore, the analysis was limited to users who had created at least 10 Jira work items within that same window. This ensured that the data reflected the habits of professional users rather than casual or intermittent ones.
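As a rough illustration, the two eligibility filters could be expressed as a simple predicate applied to both cohorts. The `UserActivity` record and its field names are assumptions made for this sketch; only the thresholds (200 actions and 10 created work items in a 90-day period) come from the study description.

```python
from dataclasses import dataclass
from typing import List

MIN_ACTIONS_90D = 200       # "heavy user" threshold from the study
MIN_ITEMS_CREATED_90D = 10  # minimum work items created in the same window


@dataclass
class UserActivity:
    """Hypothetical per-user activity summary over a 90-day period."""
    user_id: str
    actions_90d: int
    items_created_90d: int


def is_eligible(user: UserActivity) -> bool:
    """True if the user passes both filters."""
    return (
        user.actions_90d >= MIN_ACTIONS_90D
        and user.items_created_90d >= MIN_ITEMS_CREATED_90D
    )


def filter_cohort(users: List[UserActivity]) -> List[UserActivity]:
    """Apply the same filters to a test or control cohort."""
    return [u for u in users if is_eligible(u)]


sample = [
    UserActivity("a", 350, 25),  # passes both filters
    UserActivity("b", 500, 3),   # active, but creates too few items
    UserActivity("c", 120, 40),  # creates items, but too few actions
]
print([u.user_id for u in filter_cohort(sample)])
```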

A key challenge in the study was the creation of a "Day 0"—the baseline date for comparison. For the test group, Day 0 was defined as the first day a user engaged with Rovo’s AI features in Jira. To create a comparable timeline for the control group, researchers took the distribution of "Day 0" dates from the test group, shuffled them, and assigned them to users in the control group. This allowed for a normalized 45-day observation window for both sets of users, ensuring that the comparison was grounded in the same chronological context.
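One way to picture the Day 0 assignment is the sketch below. It makes several assumptions the report does not spell out: that dates are reused cyclically when the control group is larger than the test group, that a fixed random seed is used for reproducibility, and that the 45-day window begins at Day 0. The function names are hypothetical.

```python
import random
from datetime import date, timedelta
from itertools import cycle
from typing import Dict, List, Tuple

OBSERVATION_DAYS = 45


def assign_pseudo_day0(
    test_day0: List[date], control_users: List[str], seed: int = 0
) -> Dict[str, date]:
    """Give each control user a Day 0 drawn from the test group's dates.

    The test group's dates are shuffled, then handed out in order.
    Assumption: dates are recycled if there are more control users than dates.
    """
    if not test_day0:
        raise ValueError("test_day0 must contain at least one date")
    shuffled = list(test_day0)
    random.Random(seed).shuffle(shuffled)
    return dict(zip(control_users, cycle(shuffled)))


def observation_window(day0: date) -> Tuple[date, date]:
    """Assumed window: from Day 0 to 45 days later."""
    return day0, day0 + timedelta(days=OBSERVATION_DAYS)


test_dates = [date(2024, 1, 1), date(2024, 2, 10), date(2024, 3, 5)]
control = ["u1", "u2", "u3"]
assigned = assign_pseudo_day0(test_dates, control)
print(assigned)
print(observation_window(date(2024, 1, 1)))
```

Because the control group's dates come from the same distribution as the test group's, both cohorts are observed over comparable calendar periods.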

The final data set was analyzed using a two-sided t-test with a significance level of 0.05 and a statistical power of 0.8. The results were statistically significant, with a p-value of less than 0.01, meaning that differences this large would be very unlikely to appear if AI adoption had no effect.
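To make the test parameters concrete, here is a minimal standard-library sketch. It is not Atlassian's analysis code: the report does not say which t-test variant was used, so the Welch (unequal-variance) statistic here is an assumption, and the p-value uses a normal approximation that is only reasonable for large samples. The sample-size helper shows how a significance level of 0.05 and a power of 0.8 relate to the number of users needed per group for a given standardized effect size.

```python
import math
from statistics import NormalDist, mean, variance
from typing import Sequence, Tuple

ALPHA = 0.05  # two-sided significance level from the study
POWER = 0.8   # statistical power from the study


def required_n_per_group(
    effect_size: float, alpha: float = ALPHA, power: float = POWER
) -> int:
    """Approximate users per group for a two-sided, two-sample comparison.

    Uses the normal-approximation formula n = 2 * ((z_(1-alpha/2) + z_power) / d)^2,
    where d is the standardized effect size (Cohen's d).
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_power = z.inv_cdf(power)
    return math.ceil(2 * ((z_alpha + z_power) / effect_size) ** 2)


def welch_t(a: Sequence[float], b: Sequence[float]) -> Tuple[float, float]:
    """Return (t statistic, approximate two-sided p-value).

    The p-value comes from the standard normal distribution,
    which approximates the t distribution only for large samples.
    """
    se = math.sqrt(variance(a) / len(a) + variance(b) / len(b))
    t = (mean(a) - mean(b)) / se
    p_approx = 2 * (1 - NormalDist().cdf(abs(t)))
    return t, p_approx


print(required_n_per_group(0.5))
print(welch_t([1.0, 2.0, 3.0], [4.0, 5.0, 6.0]))
```

With real data, a dedicated statistics library would give an exact t-distribution p-value. The approximation here only keeps the example dependency-free.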

The results of the analysis provided two major insights into how AI alters the tempo of professional work.

First, the study found a 30% increase in the volume of work reaching the "In Progress" stage among Rovo AI users. After the 45-day observation period, those who adopted the AI tools were consistently moving more items out of their backlogs and into active development compared to the control group. In contrast, the control group showed no significant change in the volume of work initiated during the same period.

Second, and perhaps more significantly, Rovo users experienced a 35% reduction in "Lead Time to Start." While both the control and test groups saw some natural fluctuation in their start times, the reduction in lead time among AI adopters was 1.4 times greater than that of their non-AI counterparts. For the average user, this 35% reduction translates to a saving of approximately one full day of work in every 28-day cycle.

Atlassian emphasizes that the time reclaimed through AI efficiency is not merely about increasing the raw volume of output, but about improving the quality of the work environment. The report suggests that the "extra day" gained each month can be redirected toward high-value activities that are often sidelined due to time constraints.

Within the framework of the Atlassian Team Playbook, these activities include "retrospectives," where teams reflect on their performance to improve future cycles, and "team health checks," which are designed to identify bottlenecks and morale issues before they lead to burnout. By automating the administrative and cognitive overhead of starting new tasks, AI allows teams to focus on the human-centric elements of collaboration and strategic planning.

The findings from this year-long study represent what Atlassian describes as the "first chapter" in its investigation into the real-world impact of AI. While the current data indicates that AI helps users start work faster, the company intends to expand its research into the qualitative aspects of AI-assisted project management.

Future studies will investigate whether work items drafted with the assistance of AI are clearer or better written than those produced manually. Additionally, researchers plan to examine if AI leads to smoother sprint cycles and more manageable project boards. The ultimate goal is to move the conversation surrounding AI beyond "hype" and toward a model of careful experimentation that prioritizes tangible value for end-users.

By pairing deep data analysis with actual customer usage patterns, Atlassian aims to ensure that every new AI capability added to Jira serves a specific, measurable purpose in enhancing team productivity. For now, the data suggests that for the more than five million people currently using Rovo, the promise of AI is already showing up as faster starts on new work and a meaningful reduction in the time work items spend in the "To Do" phase.