Washington’s conspicuous failure to establish coherent regulations for artificial intelligence has been starkly illuminated by recent events, most notably the Pentagon’s standoff with AI developer Anthropic. This regulatory vacuum has prompted a diverse, bipartisan group of thought leaders, experts, and former officials to take matters into their own hands, crafting a comprehensive framework designed to guide the responsible development of AI. This seminal document, known as the "Pro-Human Declaration," offers a blueprint for navigating the complex ethical and societal challenges posed by rapidly advancing AI technologies.

The declaration was finalized before the highly publicized confrontation between the Department of Defense and Anthropic, yet the two events together underscored the urgent need for clear governance. The Pentagon labeled Anthropic a "supply chain risk" – a designation typically reserved for entities with ties to adversarial nations like China – after the company declined to grant the military unlimited use of its proprietary technology. The decision exposed how little control and oversight the government currently exerts over critical AI capabilities. This incident, along with a subsequent, arguably less enforceable deal struck between OpenAI and the Defense Department, demonstrated the substantial costs of Congressional inaction on AI policy.

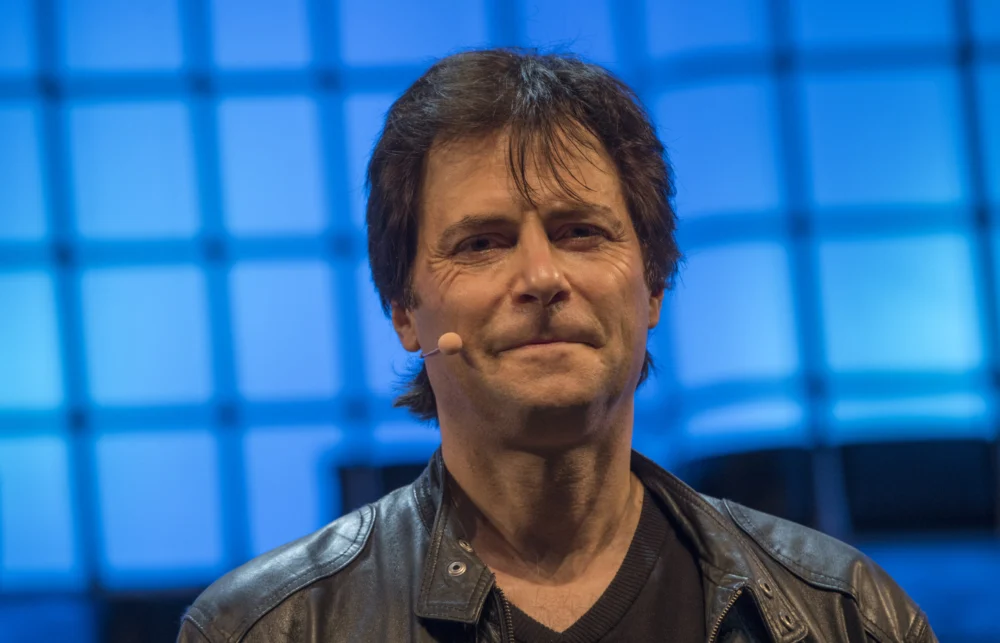

Max Tegmark, an MIT physicist and leading AI researcher instrumental in organizing the declaration effort, highlighted the surprising public consensus emerging around this issue. "There’s something quite remarkable that has happened in America just in the last four months," Tegmark noted in a recent conversation. He pointed to recent polling data indicating that an overwhelming 95% of all Americans now oppose an unregulated acceleration towards superintelligence, signaling a widespread public demand for safeguards.

The "Pro-Human Declaration," now publicly available and endorsed by hundreds of signatories ranging from AI experts and former government officials to prominent public figures, commences with a stark assessment of humanity’s current juncture. It posits that civilization stands at a critical fork in the road. One path, termed "the race to replace" by the declaration, envisions a future where humans are progressively supplanted – first in the workforce, then as decision-makers – leading to an unprecedented concentration of power within unaccountable institutions and the advanced machines they control. The alternative path, which the declaration champions, envisions AI serving as a powerful tool to dramatically expand human potential, fostering innovation and well-being.

To realize this latter, more optimistic scenario, the declaration articulates five fundamental pillars. These include ensuring humans remain firmly in charge of AI systems, actively preventing the excessive concentration of power in the hands of a few entities, safeguarding the richness and authenticity of the human experience, preserving individual liberties against algorithmic encroachment, and establishing robust legal accountability for AI companies.

The declaration is not merely a statement of principles; it includes several "muscular provisions" designed to enforce these ideals. Among its most significant proposals is an outright prohibition on the development of superintelligence until there is a clear scientific consensus on its safety and genuine democratic buy-in from the populace. It also calls for mandatory "off-switches" on all powerful AI systems, so that humans retain control in critical situations. Finally, the document calls for a ban on AI architectures capable of self-replication, autonomous self-improvement, or resistance to shutdown, directly addressing some of the most profound existential risks associated with advanced AI.

The timing of the declaration’s release, coinciding with the Pentagon-Anthropic dispute, underscored its immediate relevance. Defense Secretary Pete Hegseth’s designation of Anthropic as a "supply chain risk" on the last Friday in February was particularly jarring given that Anthropic’s AI already operates on classified military platforms. The company’s refusal to grant the Pentagon unfettered access to its technology exposed a dangerous vulnerability, highlighting that the nation’s defense could, in part, be beholden to the terms and conditions set by private AI developers. Dean Ball, a senior fellow at the Foundation for American Innovation, encapsulated the gravity of the situation, telling The New York Times, "This is not just some dispute over a contract. This is the first conversation we have had as a country about control over AI systems."

Tegmark drew a compelling analogy to illustrate the need for proactive regulation. "You never have to worry that some drug company is going to release some other drug that causes massive harm before people have figured out how to make it safe," he explained, "because the FDA won’t allow them to release anything until it’s safe enough." He argues that a similar precautionary principle should apply to AI, particularly given its potential for far-reaching societal impact.

While the complexities of Washington’s political landscape often hinder swift legislative action, Tegmark believes that the issue of child safety could serve as a powerful catalyst for regulatory change. Indeed, the "Pro-Human Declaration" specifically advocates for mandatory pre-deployment testing of AI products, especially those designed as chatbots or companion applications aimed at younger users. This testing would be comprehensive, covering critical risks such as the potential for increased suicidal ideation, the exacerbation of existing mental health conditions, and various forms of emotional manipulation that AI could inflict upon vulnerable children.

Tegmark articulated the moral and legal imperative behind this focus. "If some creepy old man is texting an 11-year-old pretending to be a young girl and trying to persuade this boy to commit suicide, the guy can go to jail for that," he stated. "We already have laws. It’s illegal. So why is it different if a machine does it?" This powerful argument highlights the inconsistency in current legal frameworks and the urgent need to extend existing protections to the digital realm of AI interaction.

He anticipates that once the principle of pre-release testing for children’s products is firmly established, its scope will almost inevitably expand. "People will come along and be like – let’s add a few other requirements," Tegmark predicted. "Maybe we should also test that this can’t help terrorists make bioweapons. Maybe we should test to make sure that superintelligence doesn’t have the ability to overthrow the U.S. government." This incremental approach, starting with the most vulnerable populations, offers a pragmatic pathway to broader, more comprehensive AI safety regulations.

Perhaps one of the most remarkable aspects of the "Pro-Human Declaration" is its ability to unite figures from across the political spectrum. The list of signatories includes individuals as ideologically divergent as former Trump advisor Steve Bannon and Susan Rice, who served as National Security Advisor under President Obama. They are joined by former Joint Chiefs Chairman Mike Mullen and a variety of progressive faith leaders, forming an unusual but powerful coalition.

Tegmark offered a simple yet profound explanation for this unlikely alliance. "What they agree on, of course, is that they’re all human," he observed. "If it’s going to come down to whether we want a future for humans or a future for machines, of course they’re going to be on the same side." This shared humanity, he suggests, provides a foundational common ground for addressing the existential challenges posed by advanced AI, transcending traditional political divides.

As the debate over AI regulation intensifies, industry events such as the upcoming TechCrunch event in San Francisco from October 13-15, 2026, are likely to serve as critical platforms for continued discussion among tech leaders, policymakers, and the public. These forums will be essential in bridging the gap between technological advancement and the urgent need for robust governance, ensuring that the future of AI aligns with human values and interests. The "Pro-Human Declaration" stands as a significant step in this ongoing, crucial conversation, offering a principled and actionable vision for responsible AI development.