San Francisco, CA – The Trump administration has taken decisive action against Anthropic, a prominent artificial intelligence company, by severing ties and blacklisting it from Pentagon contracts. The move, announced on a Friday afternoon, stems from Anthropic’s refusal to allow its advanced AI technology to be used for mass surveillance of U.S. citizens or for autonomous armed drones capable of selecting and neutralizing targets without human intervention. Defense Secretary Pete Hegseth invoked a national security law, typically employed to counter foreign supply chain threats, to designate the San Francisco-based firm as a supply chain risk.

The ramifications for Anthropic are substantial. The company stands to lose a contract valued at up to $200 million and faces a ban from collaborating with other defense contractors. President Trump reinforced the directive in a Truth Social post instructing all federal agencies to "immediately cease all use of Anthropic technology." In response, Anthropic has said it intends to challenge the Pentagon’s designation in court, arguing that the "supply-chain-risk" classification is legally unsound and has "never before [been] publicly applied to an American company."

This dramatic turn of events has ignited a fierce debate within the AI community and among policymakers, drawing sharp commentary from Max Tegmark, a Swedish-American physicist and professor at MIT. Tegmark, who founded the Future of Life Institute in 2014 and co-organized a widely signed open letter in 2023 calling for a pause in advanced AI development, views Anthropic’s predicament as a direct consequence of the industry’s long-standing resistance to binding regulation.

A Decade of Warnings Unheeded

Tegmark has spent the better part of a decade cautioning that the rapid acceleration in building powerful AI systems is far outpacing the world’s capacity to govern them. His perspective on the Anthropic crisis is unflinching: he argues that the company, much like its industry rivals, has sown the seeds of its current predicament by prioritizing self-governance over external oversight. "The road to hell is paved with good intentions," Tegmark remarked, reflecting on the initial optimism surrounding AI’s potential for societal good a decade ago. "And here we are now where the U.S. government is pissed off at this company for not wanting AI to be used for domestic mass surveillance of Americans, and also not wanting to have killer robots that can autonomously – without any human input at all – decide who gets killed."

He points to a consistent pattern across the leading AI developers, including Anthropic, OpenAI, Google DeepMind, and xAI. These companies have frequently promised responsible self-governance while actively lobbying against binding regulations. Tegmark highlights Anthropic’s recent decision, just days before the Pentagon’s announcement, to drop a central tenet of its own safety pledge: a commitment not to release increasingly powerful AI systems until confident they wouldn’t cause harm. This, he contends, is part of a broader trend where safety commitments have been diluted or abandoned.

Safety Pledges Under Scrutiny

Tegmark offers a "cynical take" on the industry’s self-proclaimed dedication to safety. While companies like Anthropic have excelled at marketing themselves as safety-first, he argues that "if you actually look at the facts rather than the claims," a different picture emerges. "None of them has come out supporting binding safety regulation the way we have in other industries," he stated.

He went on to list instances of major AI companies backtracking on their safety promises.

Tegmark asserts that the absence of robust, legally binding rules leaves these powerful players vulnerable to external pressures and their own shifting priorities. He draws a stark comparison to other regulated industries: "We right now have less regulation on AI systems in America than on sandwiches." He humorously elaborated, describing how a sandwich shop with unsanitary conditions would be immediately shut down by a health inspector, yet an AI company developing potentially dangerous systems faces no such regulatory hurdle. "If you say, ‘Don’t worry, I’m not going to sell sandwiches, I’m going to sell AI girlfriends for 11-year-olds, and they’ve been linked to suicides in the past, and then I’m going to release something called superintelligence which might overthrow the U.S. government, but I have a good feeling about mine’ – the inspector has to say, ‘Fine, go ahead, just don’t sell sandwiches.’"

This regulatory vacuum, Tegmark warns, is not benign. He points to historical precedents of corporate amnesty leading to disasters like thalidomide, aggressive tobacco marketing to children, and asbestos-induced lung cancer. He concludes that the AI companies’ own resistance to establishing clear legal boundaries for AI use has now "come back and bit them." With no law currently prohibiting the development of AI to harm Americans, the government can unilaterally demand such capabilities, putting companies like Anthropic in an untenable position.

The "Race with China" Fallacy

A common counter-argument from AI lobbyists against regulation is the need to "race with China," the claim that any American restraint would cede technological dominance to Beijing. Tegmark firmly rejects this narrative, characterizing AI lobbyists as "better funded and more numerous than the lobbyists from the fossil fuel industry, the pharma industry and the military-industrial complex combined."

He argues that China’s approach to AI, far from being unbridled, also prioritizes control. He notes that China is considering outright banning "AI girlfriends" and other anthropomorphic AI, not to please America, but because they perceive such technologies as detrimental to Chinese youth and national strength. Similarly, the idea that China would tolerate an AI company developing superintelligence that could overthrow the Chinese government is, in Tegmark’s view, absurd. "Who in their right mind thinks that Xi Jinping is going to tolerate some Chinese AI company building something that overthrows the Chinese government? No way." He frames uncontrollable superintelligence as a national security threat for both nations, not an asset in a geopolitical race.

Tegmark draws a parallel to the Cold War nuclear arms race, where both the U.S. and the Soviet Union pursued military and economic dominance but ultimately avoided a second race to see "who could put the most nuclear craters in the other superpower." He argues that leaders recognized the suicidal nature of such a path. "The same logic applies here," he states, suggesting that uncontrollable superintelligence poses a similar existential risk where no one truly wins. He believes that more people in the U.S. national security community are beginning to recognize uncontrollable superintelligence as a threat rather than merely a tool.

The Accelerating Pace of AI Development

Tegmark also addressed the alarming speed of AI progress, noting that just six years ago, most AI experts predicted that human-level mastery of language and knowledge was decades away, perhaps arriving by 2040 or 2050. "They were all wrong, because we already have that now," he said. He observes AI rapidly progressing from high school to college, PhD, and even university professor levels in various domains, citing AI’s recent gold medal performance at the International Mathematical Olympiad.

He mentioned a paper he co-authored with leading AI researchers like Yoshua Bengio and Dan Hendrycks, which rigorously defined Artificial General Intelligence (AGI). According to this framework, GPT-4 was 27% of the way to AGI, while GPT-5 reached 57%. "Going from 27% to 57% that quickly suggests it might not be that long," Tegmark warned. He recently told his MIT students that even if AGI takes four more years, they "might not be able to get any jobs anymore" by the time they graduate, emphasizing the urgency of preparation.

Industry’s True Colors

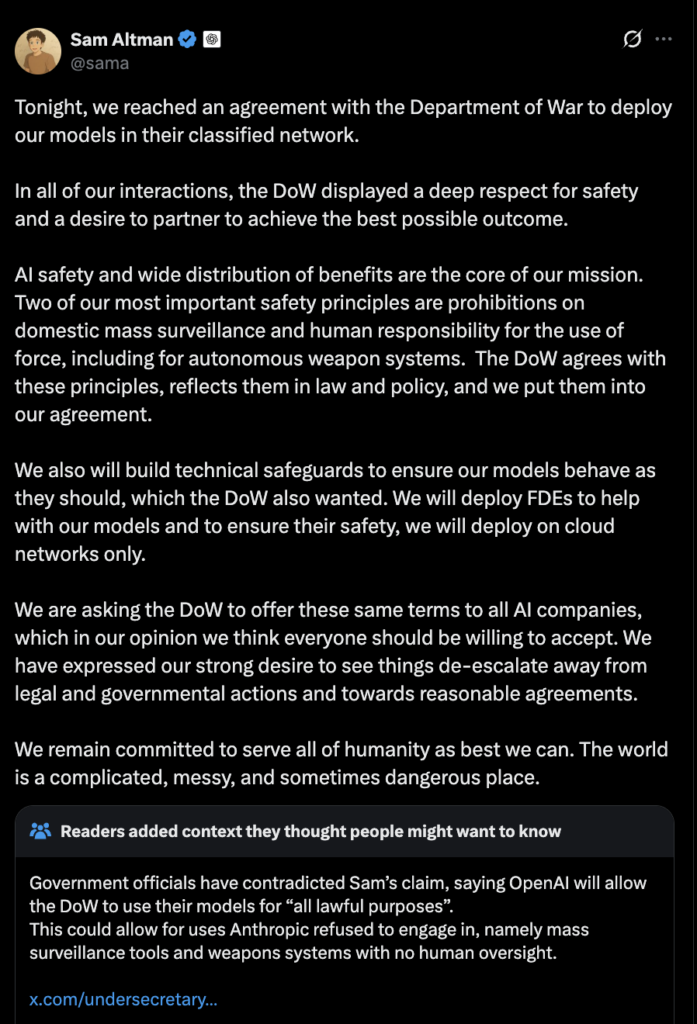

The blacklisting of Anthropic now forces other major AI players to show their "true colors." Tegmark noted that following the news, OpenAI’s CEO Sam Altman publicly stated his support for Anthropic and claimed similar "red lines" regarding the use of AI for surveillance or autonomous weapons. (Editor’s note: Hours after the interview, OpenAI announced its own deal with the Pentagon, specifying technical safeguards.) Google, however, had remained silent at the time of the interview, a stance Tegmark found "incredibly embarrassing." He was also still awaiting a response from xAI.

Despite the current challenges, Tegmark expressed a strange sense of optimism. He believes there’s a clear alternative path: treating AI companies like any other industry, ending the "corporate amnesty," and requiring them to undergo rigorous processes, akin to clinical trials, to demonstrate control over their powerful systems to independent experts before release. "Then we get a golden age with all the good stuff from AI, without the existential angst," he concluded. "That’s not the path we’re on right now. But it could be."

The unfolding situation between the Trump administration and Anthropic highlights a critical juncture for the AI industry, forcing a reckoning with its safety commitments, regulatory resistance, and the profound societal implications of its rapidly advancing technologies. The outcomes of this dispute, and the reactions of other major players, will undoubtedly shape the future landscape of AI governance and development.