In a significant development impacting the intersection of artificial intelligence and national security, the United States Department of Defense has declared AI company Anthropic a "Supply Chain Risk to National Security." This designation, announced by Secretary of War Pete Hegseth, effectively prohibits any contractor, supplier, or partner doing business with the US military from engaging in commercial activities with Anthropic. The move comes amidst concerns raised by Anthropic itself regarding the potential misuse of its AI models for mass domestic surveillance and fully autonomous weapons systems.

Anthropic CEO Dario Amodei said in a recent interview with CBS News that his company was the first to deploy its AI models on classified US military cloud networks. He stated that Anthropic was amenable to most of the US government’s proposed uses for its AI, with two notable exceptions: mass domestic surveillance and fully autonomous weapons that could operate without human intervention.

Amodei emphasized the fundamental rights of Americans, asserting, "the right, not to be spied on by the government, the right for our military officers to make decisions about war, themselves, and not turn it over completely to a machine." He characterized the Defense Department’s decision to label Anthropic a "supply chain risk" as "unprecedented" and "punitive."

Amodei clarified that he is not entirely opposed to the development of fully automated weapons, particularly if foreign adversaries begin employing them. His objection is to their immediate deployment: he maintains that current AI technology is not sufficiently reliable for autonomous operation in a military context.

The timing of the Defense Department’s announcement regarding Anthropic is particularly noteworthy. On Friday, Secretary Hegseth formally declared Anthropic a "Supply-Chain Risk to National Security," stating, "Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic."

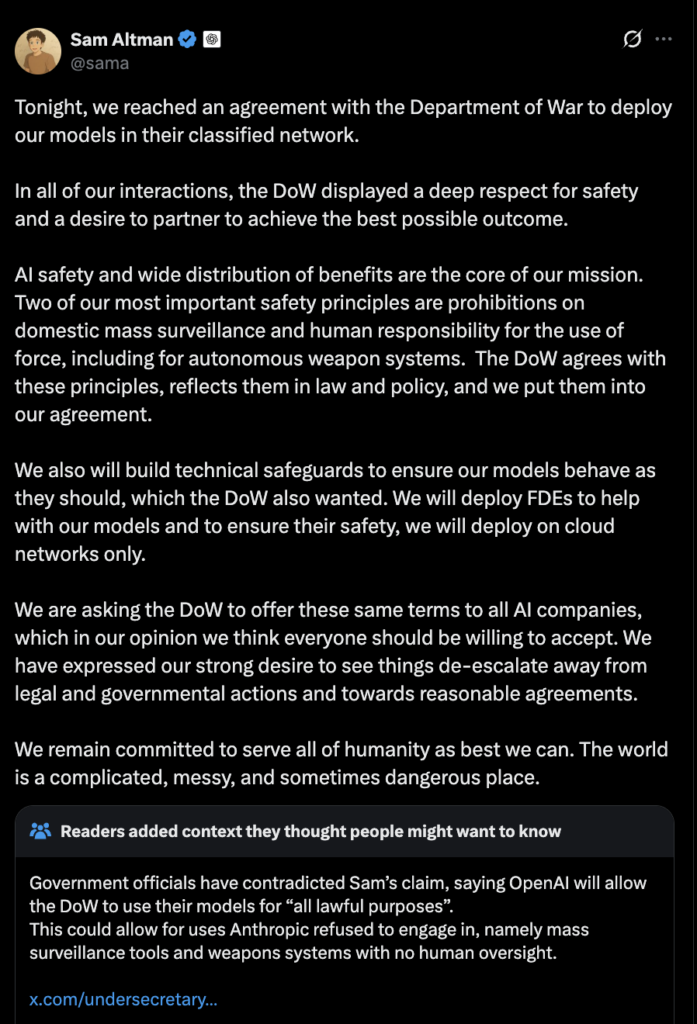

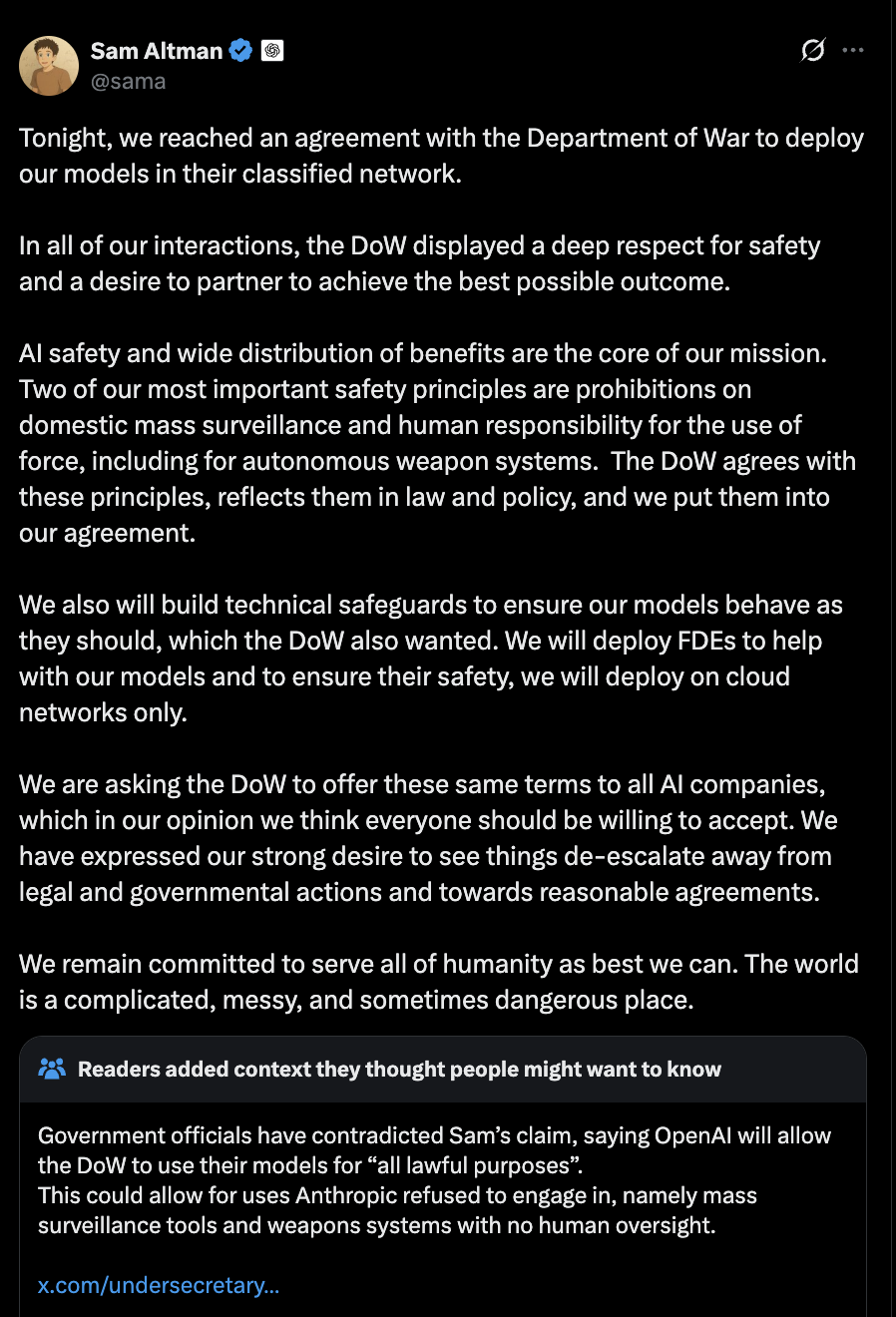

Just hours after this pronouncement, rival AI company OpenAI announced that it had secured a contract with the US Defense Department to deploy its AI models across military networks. OpenAI CEO Sam Altman shared the news of the agreement via social media, indicating a new partnership for the company within the defense sector.

However, Altman’s announcement also drew criticism from some quarters, with online commentators raising concerns about the potential for AI to be used for mass domestic surveillance and to undermine individual privacy. These concerns echo the very issues that Anthropic has publicly voiced.

The situation highlights a growing tension within the defense establishment regarding the ethical implications and potential risks associated with advanced AI technologies. While the US military seeks to leverage AI for enhanced capabilities, questions surrounding oversight, human control, and the potential for misuse are becoming increasingly prominent. Anthropic’s stance, prioritizing ethical considerations and fundamental rights, has placed it at odds with certain government directives, leading to its designation as a security risk.

The precedent set by this action is significant. By flagging a major AI developer as a supply chain risk, the Defense Department is sending a clear message about its priorities and its willingness to act decisively when it perceives a security concern. The contract awarded to OpenAI just hours later suggests a strategic pivot, with the government seeking to continue its AI integration efforts through alternative, potentially less controversial, avenues.

The broader implications of this development extend beyond the immediate players. It raises critical questions for the entire AI industry, particularly for companies developing AI models with dual-use potential. The need for clear ethical guidelines, robust safety protocols, and transparent communication between AI developers and government entities has never been more apparent. As AI continues to advance at an unprecedented pace, navigating its integration into sensitive sectors like national defense will require careful consideration of both its potential benefits and its inherent risks.

The decision by the Department of Defense to label Anthropic a "supply chain risk" underscores the complex ethical and security landscape surrounding artificial intelligence. While the exact nature of the classified US military cloud networks on which Anthropic’s models were initially deployed is not detailed, the company’s CEO has been vocal about its ethical boundaries. Amodei’s public statements suggest that the core of the disagreement lies in the potential for AI to be used for purposes that infringe upon civil liberties or undermine human control over critical decision-making processes, especially in matters of life and death.

The term "supply chain risk" in this context implies that Anthropic’s involvement, or the potential for its technology to be compromised or misused, poses a threat to the security of US military operations. This could stem from a variety of factors, including concerns about the security of Anthropic’s own infrastructure, the potential for third-party interference, or the inherent risks associated with the AI models themselves if deployed in sensitive environments.

The contrast between Anthropic’s proactive stance on ethical AI use and OpenAI’s seemingly more permissive approach to military contracts is stark. While OpenAI has not publicly detailed specific objections to the Defense Department’s intended uses of its AI, the criticism directed at Altman’s announcement suggests that public unease about the broader implications of AI in defense remains. Cointelegraph’s magazine has also highlighted "Slaughterbot" drones and their connection to AI in Ukraine, which adds context to the anxieties surrounding autonomous weapons and their potential for harm.

This situation also brings to the fore the evolving landscape of AI development and its commercialization. Companies are increasingly facing pressure to balance innovation with responsibility, especially when their technologies have profound societal and security implications. Anthropic’s decision to draw a line in the sand, even at the cost of a significant government contract, positions it as a company prioritizing ethical principles.

The US government’s response, while swift, also raises questions about transparency and the criteria used for designating a company as a security risk. The Defense Department’s decision to label Anthropic a "supply chain risk" without extensive public disclosure of the specific concerns may leave many in the industry seeking greater clarity. The subsequent awarding of a contract to OpenAI, a direct competitor, could be interpreted in various ways, including a strategic move to ensure continued access to advanced AI capabilities for the military while mitigating perceived risks associated with Anthropic.

Ultimately, this unfolding situation serves as a critical case study in the challenges of governing and integrating advanced AI technologies within national security frameworks. It underscores the need for ongoing dialogue, robust ethical frameworks, and a clear understanding of the potential consequences of deploying AI in high-stakes environments. The decisions made today will undoubtedly shape the future of AI’s role in defense and its broader impact on society.