The advent of artificial intelligence in software development promises a revolution, offering tools that can write, debug, and deploy code with unprecedented autonomy. However, this transformative power has largely come with a significant price tag, sparking discontent among the very developers these tools aim to empower. Anthropic’s Claude Code, a terminal-based AI agent renowned for its capabilities, exemplifies this dilemma with monthly pricing ranging from $20 to $200, depending on the subscription tier. This financial barrier has ignited a growing movement among programmers seeking more accessible solutions.

Emerging as a formidable free alternative is Goose, an open-source AI agent developed by Block, the financial technology company formerly known as Square. Goose offers nearly identical functionality to Claude Code but distinguishes itself by running entirely on a user’s local machine. This crucial difference eliminates subscription fees, cloud dependencies, and restrictive rate limits that reset every few hours. "Your data stays with you, period," emphasized Parth Sareen, a software engineer who demonstrated Goose during a recent livestream. This statement encapsulates the core appeal of Goose: it grants developers complete control over their AI-powered workflow, including the invaluable ability to work offline, even during air travel.

The project has witnessed an explosive surge in popularity since its launch. Goose now boasts over 26,100 stars on GitHub, the premier code-sharing platform, attracting 362 contributors and releasing 102 versions. Its latest iteration, 1.20.1, shipped on January 19, 2026, reflecting a rapid development pace that rivals many commercial products. For developers increasingly frustrated by Claude Code’s pricing structure and stringent usage caps, Goose represents a rare and increasingly vital offering in the AI industry: a genuinely free, no-strings-attached option designed for serious, professional work.

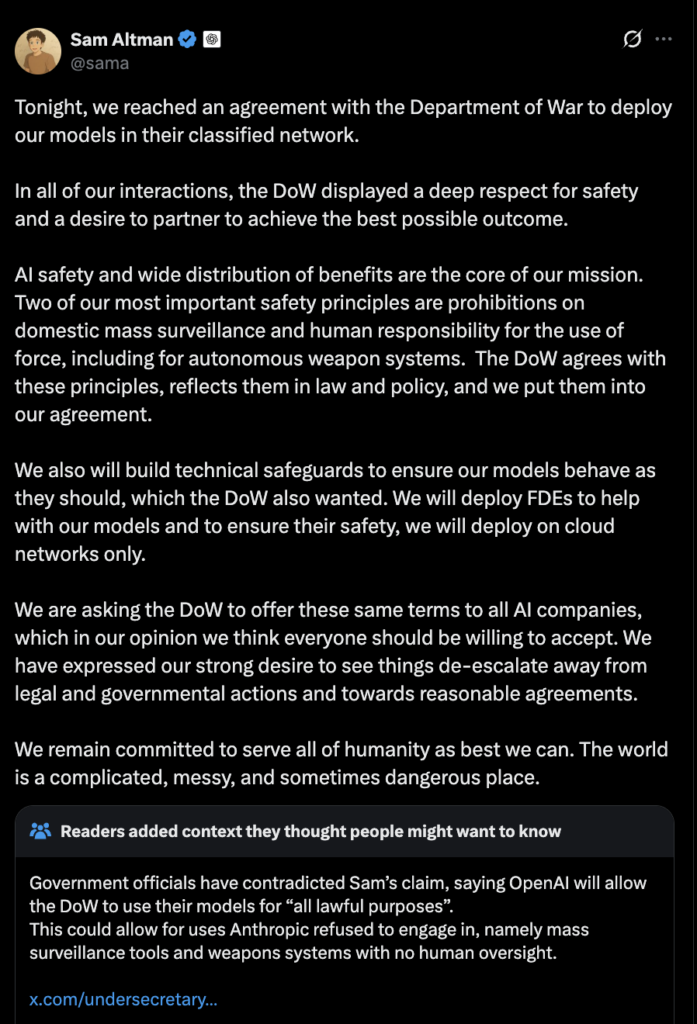

To fully grasp the significance of Goose, one must understand the controversy surrounding Claude Code’s pricing. Anthropic, the San Francisco-based artificial intelligence company founded by former OpenAI executives, integrates Claude Code into its various subscription tiers. The free plan offers no access to Claude Code whatsoever. The Pro plan, priced at $17 per month with annual billing or $20 monthly, limits users to just 10 to 40 prompts every five hours, a constraint that many professional developers can exhaust within minutes of intensive coding. The Max plans, at $100 and $200 per month, provide more generous headroom: 50 to 200 prompts and 200 to 800 prompts respectively, along with access to Anthropic’s most powerful model, Claude Opus 4.5. However, even these premium tiers come with restrictions that have ignited widespread frustration within the developer community.

The catalyst for much of this discontent came in late July 2025, when Anthropic announced new weekly rate limits for Claude Code. Under this revised system, Pro users receive 40 to 80 "hours" of Sonnet 4 usage per week. Max users on the $200 tier are allocated 240 to 480 "hours" of Sonnet 4, plus an additional 24 to 40 "hours" of Opus 4. Roughly six months after these changes, developer frustration shows no signs of subsiding. The fundamental problem lies in the interpretation of these "hours," which are not literal units of time but token-based limits that fluctuate dramatically depending on the size of the codebase, the length of the conversation, and the complexity of the code being processed. Independent analyses suggest that the actual per-session limits translate to roughly 44,000 tokens for Pro users and approximately 220,000 tokens for the $200 Max plan.
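To make those token figures concrete, here is a rough back-of-the-envelope sketch. It divides the per-session budgets cited above by an assumed average cost per prompt. The 2,000-token average is a hypothetical value chosen purely for illustration; it does not come from Anthropic or from the analyses mentioned.

```python
# Per-session budgets as reported by the independent analyses cited above.
SESSION_BUDGET_TOKENS = {"Pro": 44_000, "Max ($200)": 220_000}


def prompts_per_session(budget_tokens: int, avg_tokens_per_prompt: int) -> int:
    """Return how many whole prompts fit into a session's token budget."""
    if avg_tokens_per_prompt <= 0:
        raise ValueError("avg_tokens_per_prompt must be positive")
    return budget_tokens // avg_tokens_per_prompt


# Hypothetical average: prompt text, attached code, and the model's reply.
ASSUMED_AVG_TOKENS = 2_000

for plan, budget in SESSION_BUDGET_TOKENS.items():
    print(plan, prompts_per_session(budget, ASSUMED_AVG_TOKENS))
```

Because the average cost per prompt grows with codebase size and conversation length, the same budget can cover far fewer prompts in a large project, which is one way to read the complaints about limits running out quickly.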

"It’s confusing and vague," one developer articulated in a widely shared analysis. "When they say ‘24-40 hours of Opus 4,’ that doesn’t really tell you anything useful about what you’re actually getting." The backlash has been fierce across platforms like Reddit and various developer forums. Numerous users have reported hitting their usage limits within as little as 30 minutes of intensive coding, prompting some to cancel their subscriptions entirely and to label the new restrictions "a joke" and "unusable for real work." Anthropic has defended these changes, asserting that the limits affect fewer than five percent of users and primarily target individuals running Claude Code "continuously in the background, 24/7." However, the company has not clarified whether this five percent figure refers to Max subscribers specifically or to all users, a distinction that carries significant weight for understanding the true impact.

Goose, in contrast, adopts a fundamentally different approach to addressing the challenges of AI-assisted coding. Built by Block, the payments company founded by Jack Dorsey, Goose is what engineers term an "on-machine AI agent." Unlike cloud-based solutions such as Claude Code, which necessitate sending your code and queries to Anthropic’s remote servers for processing, Goose is designed to run entirely on your local computer. This is achieved by leveraging open-source language models that users can download and manage themselves. The project’s documentation highlights its ambition to go "beyond code suggestions" by enabling the agent to "install, execute, edit, and test with any LLM." That final phrase—"any LLM"—is a critical differentiator, underscoring Goose’s model-agnostic design.

Developers using Goose have the flexibility to connect it to various language models. They can use Anthropic’s Claude models if they possess API access, or integrate with OpenAI’s GPT-5 or Google’s Gemini through their respective APIs. Furthermore, Goose can route requests through services like Groq or OpenRouter. Crucially, and where its core innovation lies, Goose can operate entirely locally using tools such as Ollama, which facilitate the download and execution of open-source models directly on a user’s hardware. The practical implications of a local setup are profound: it eliminates subscription fees, usage caps, and rate limits, while also alleviating any concerns about sensitive code being transmitted to external servers. All interactions with the AI remain securely on the user’s machine. "I use Ollama all the time on planes—it’s a lot of fun!" Sareen noted during his demonstration, emphasizing how local models liberate developers from the constraints of internet connectivity.

Goose operates as either a command-line tool or a desktop application, capable of autonomously performing complex development tasks. Its capabilities extend far beyond simple code completion. It can build entire projects from inception, write and execute code, debug failures, orchestrate intricate workflows across multiple files, and interact with external APIs—all with minimal human intervention. This advanced functionality relies heavily on what the AI industry refers to as "tool calling" or "function calling," which is the ability of a language model to request and trigger specific actions from external systems. When a developer instructs Goose to create a new file, run a test suite, or check the status of a GitHub pull request, the agent doesn’t merely generate text describing the action; it actually executes these operations within the user’s environment.
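The mechanics of tool calling can be illustrated with a minimal sketch. This is not Goose’s implementation: the tool names, the JSON request shape, and the in-memory workspace are all assumptions made for illustration. The idea is simply that the model emits a structured request, and the agent looks up the named tool and runs it.

```python
import json
from typing import Any, Callable, Dict

# In-memory stand-in for a project directory, so the sketch has no side effects.
workspace: Dict[str, str] = {}


def write_file(path: str, content: str) -> str:
    """Hypothetical tool: store content under a path."""
    workspace[path] = content
    return f"wrote {len(content)} characters to {path}"


def read_file(path: str) -> str:
    """Hypothetical tool: return stored content, or an error string."""
    return workspace.get(path, f"error: {path} not found")


# Registry mapping tool names (as the model would emit them) to functions.
TOOLS: Dict[str, Callable[..., str]] = {
    "write_file": write_file,
    "read_file": read_file,
}


def dispatch(model_output: str) -> str:
    """Parse a JSON tool request emitted by a model and execute it."""
    request: Dict[str, Any] = json.loads(model_output)
    name = request["name"]
    if name not in TOOLS:
        return f"error: unknown tool {name}"
    return TOOLS[name](**request.get("arguments", {}))


# Example: the model asks for a file to be created instead of describing it.
result = dispatch(
    '{"name": "write_file", "arguments": {"path": "hello.py", "content": "print(1)"}}'
)
print(result)
```

In a real agent, the string returned by the tool would be fed back to the model so it can decide on the next step, such as running tests against the file it just wrote.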

The effectiveness of this capability is deeply tied to the underlying language model. According to the Berkeley Function-Calling Leaderboard, which benchmarks models on their proficiency in translating natural language requests into executable code and system commands, Claude 4 models from Anthropic currently exhibit superior performance in tool calling. However, newer open-source models are rapidly closing this gap. Goose’s documentation specifically highlights several open-source options with robust tool-calling support, including Meta’s Llama series, Alibaba’s Qwen models, Google’s Gemma variants, and DeepSeek’s reasoning-focused architectures. The tool also integrates with the Model Context Protocol (MCP), an emerging standard designed to connect AI agents to various external services. Through MCP, Goose can access databases, search engines, file systems, and third-party APIs, significantly extending its operational capabilities beyond what the base language model alone provides.

For developers seeking a completely free and privacy-preserving AI coding setup, the process involves three primary components: Goose itself, Ollama (a tool for running open-source models locally), and a compatible open-source language model.

Step 1: Install Ollama

Ollama is an open-source project that streamlines the complex process of running large language models on personal hardware. It efficiently handles the downloading, optimization, and serving of these models through a straightforward interface. To begin, download and install Ollama from ollama.com. Once installed, users can easily pull models with a single command. For coding tasks, Qwen 2.5 is often recommended for its strong tool-calling support. Simply execute `ollama run qwen2.5` in your terminal. The model will download automatically and then begin running on your local machine.

Step 2: Install Goose

Goose is available as both a desktop application and a command-line interface, catering to different developer preferences. The desktop version offers a more visual and intuitive experience, while the CLI is favored by developers who prefer working exclusively within the terminal. Installation instructions vary depending on the operating system but typically involve downloading pre-built binaries from Goose’s GitHub releases page or utilizing a package manager. Block provides readily available binaries for macOS (supporting both Intel and Apple Silicon), Windows, and Linux.

Step 3: Configure the Connection

Once both Ollama and Goose are installed, the final step is to establish the connection between them. In Goose Desktop, navigate to the Settings menu, then select "Configure Provider," and choose "Ollama." Confirm that the API Host is set to `http://localhost:11434`, the address and default port on which Ollama listens, and then click "Submit." For users of the command-line version, execute `goose configure`, select "Configure Providers," choose "Ollama," and then enter the name of the desired model when prompted. With these steps completed, Goose is connected to a language model running entirely on your hardware, ready to execute complex coding tasks without any subscription fees or external dependencies.
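If Goose reports that it cannot reach the provider, it can help to confirm that Ollama is actually serving the model. The sketch below queries Ollama’s model-listing endpoint (`/api/tags`) at the default host mentioned above. The response shape it parses, a `models` list whose entries carry a `name` field, is an assumption based on Ollama’s REST API documentation, and the helper function names are invented for this example.

```python
import json
import urllib.request
from typing import List

OLLAMA_HOST = "http://localhost:11434"  # default API host named in the setup steps


def parse_model_names(tags_payload: str) -> List[str]:
    """Extract model names from the JSON body of an /api/tags response."""
    data = json.loads(tags_payload)
    return [model["name"] for model in data.get("models", [])]


def fetch_local_models(host: str = OLLAMA_HOST, timeout: float = 5.0) -> List[str]:
    """Ask a running Ollama server which models it has available locally."""
    with urllib.request.urlopen(f"{host}/api/tags", timeout=timeout) as resp:
        return parse_model_names(resp.read().decode("utf-8"))


def has_model(names: List[str], wanted: str) -> bool:
    """True if any local model matches the name, ignoring the ':tag' suffix."""
    return any(name.split(":", 1)[0] == wanted for name in names)
```

With Ollama running, `has_model(fetch_local_models(), "qwen2.5")` should return `True` once the model has been pulled; a connection error from `fetch_local_models()` suggests the server is not running or is listening on a different port.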

An inevitable question arises: what kind of computer is required to run these powerful models locally? Running large language models on personal hardware demands significantly more computational resources than typical software applications. The primary constraint is memory—specifically, RAM on most systems, or VRAM if a dedicated graphics card is utilized for acceleration. Block’s documentation suggests that 32 gigabytes of RAM provides "a solid baseline for larger models and outputs." For Mac users, the computer’s unified memory serves as the main bottleneck. Conversely, for Windows and Linux users equipped with discrete NVIDIA graphics cards, GPU memory (VRAM) becomes more critical for accelerating model inference.

However, it is not always necessary to possess expensive, high-end hardware to get started. Smaller models with fewer parameters can operate effectively on much more modest systems. Qwen 2.5, for instance, is available in multiple sizes, and its smaller variants can perform well on machines with as little as 16 gigabytes of RAM. "You don’t need to run the largest models to get excellent results," Sareen emphasized during his demonstration. The practical recommendation for developers is to begin with a smaller model to test their workflow and then scale up to larger models as their specific needs and hardware capabilities allow. For context, an entry-level Apple MacBook Air with 8 gigabytes of RAM would likely struggle with most capable coding models, whereas a MacBook Pro with 32 gigabytes—an increasingly common configuration among professional developers—can handle them comfortably.
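A rough rule of thumb helps explain why model size and memory are so tightly linked: the weights alone occupy roughly the parameter count multiplied by the bytes used per weight. The sketch below applies that arithmetic. The parameter counts, the 4-bit quantization level, and the assumption that only half of system memory is available for weights (leaving room for the operating system, other applications, and the model’s working state) are illustrative choices, not figures from Block or Ollama.

```python
def estimate_weights_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory for the model weights alone, in gigabytes (10^9 bytes)."""
    return params_billions * bits_per_weight / 8


def fits_in_memory(
    params_billions: float,
    bits_per_weight: int,
    ram_gb: float,
    usable_fraction: float = 0.5,  # hypothetical share of RAM left for weights
) -> bool:
    """Check whether the weights fit in the assumed usable share of memory."""
    return estimate_weights_gb(params_billions, bits_per_weight) <= ram_gb * usable_fraction


# Illustrative model sizes at 4-bit quantization on 16 GB and 32 GB machines.
for params in (7, 14, 32):
    weights = estimate_weights_gb(params, 4)
    print(f"{params}B: ~{weights} GB weights,",
          "16 GB ok" if fits_in_memory(params, 4, 16) else "16 GB tight,",
          "32 GB ok" if fits_in_memory(params, 4, 32) else "32 GB tight")
```

The estimate ignores context-length overhead, which grows with longer conversations, so real-world requirements will be higher than the weights-only figure.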

While Goose with a local LLM presents a compelling alternative, it is important to acknowledge that it is not a perfect, drop-in substitute for Claude Code. The comparison involves genuine trade-offs that developers must understand.

Model Quality: Anthropic’s flagship model, Claude Opus 4.5, is arguably the most capable AI for complex software engineering tasks. It excels at comprehending intricate codebases, adhering to nuanced instructions, and generating high-quality code on the first attempt. While open-source models have made dramatic advancements, a discernible gap persists, particularly for the most challenging and abstract coding problems. One developer who transitioned to the $200 Claude Code plan described the difference succinctly: "When I say ‘make this look modern,’ Opus knows what I mean. Other models give me Bootstrap circa 2015."

Context Window: Claude Sonnet 4.5, accessible via the API, offers an immense one-million-token context window. This capacity is sufficient to load entire large codebases without the need for complex chunking or context management strategies. In contrast, most local models are typically limited to 4,096 or 8,192 tokens by default, though many can be configured for longer contexts at the expense of increased memory usage and potentially slower processing speeds.
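The practical effect of a small context window is that an agent must choose what to show the model. The sketch below illustrates one simple strategy under stated assumptions: it estimates token counts with a four-characters-per-token heuristic (real tokenizers vary by model and language) and greedily selects files until the budget is spent. The function names and the 1,024 tokens reserved for the reply are hypothetical, and this is not how Goose or Ollama manage context.

```python
import math
from typing import Dict, List


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough token estimate from character count; a heuristic, not a tokenizer."""
    return math.ceil(len(text) / chars_per_token)


def select_files_for_context(
    files: Dict[str, str],
    context_window: int,
    reserved_for_reply: int = 1024,  # hypothetical space kept for the answer
) -> List[str]:
    """Greedily pick files, in the given order, that fit the prompt budget."""
    budget = context_window - reserved_for_reply
    chosen: List[str] = []
    used = 0
    for path, text in files.items():
        cost = estimate_tokens(text)
        if used + cost > budget:
            continue  # skip this file; a smaller one later may still fit
        chosen.append(path)
        used += cost
    return chosen


# Three stand-in files of roughly 2,000, 1,000, and 100 estimated tokens.
project = {"a.py": "x" * 8000, "b.py": "y" * 4000, "c.py": "z" * 400}
print(select_files_for_context(project, 4096))
print(select_files_for_context(project, 8192))
```

With a 4,096-token window the third file no longer fits, while an 8,192-token window accommodates all three, which shows why raising the configured context length matters for multi-file work.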

Speed: Cloud-based services like Claude Code operate on dedicated server hardware meticulously optimized for AI inference, resulting in very fast response times. Local models, running on consumer laptops or desktops, generally process requests more slowly. This difference in speed can be significant for iterative development workflows where rapid changes and immediate AI feedback are crucial.

Tooling Maturity: Claude Code benefits from Anthropic’s substantial engineering resources, leading to highly polished and well-documented features. Examples include prompt caching, which can reduce costs by up to 90 percent for repeated contexts, and robust structured outputs. Goose, while actively developed with 102 releases to date and a vibrant community, relies on community contributions and may, in specific areas, lack the same level of refinement found in commercial offerings.

Goose enters a crowded and rapidly evolving market of AI coding tools, yet it carves out a distinctive niche. Competitors like Cursor, a popular AI-enhanced code editor, also employ a subscription model, charging $20 per month for its Pro tier and $200 for Ultra, mirroring the price points of Claude Code’s Pro and top Max plans. However, Cursor’s Ultra level provides approximately 4,500 Sonnet 4 requests per month, a substantially different allocation model from Claude Code’s five-hour reset windows. Other open-source projects such as Cline and Roo Code offer AI coding assistance, but often with varying levels of autonomy and tool integration, frequently focusing more on intelligent code completion than on the agentic task execution that defines both Goose and Claude Code. Enterprise solutions like Amazon’s CodeWhisperer and GitHub Copilot, along with other offerings from major cloud providers, primarily target large organizations with complex procurement processes and dedicated budgets, making them less relevant to individual developers and small teams seeking lightweight, flexible tools.

Goose’s unique value proposition lies in its combination of genuine autonomy, model agnosticism, local operation, and zero cost. The tool is not attempting to outcompete commercial offerings solely on polish or raw model quality. Instead, its competitive edge is rooted in freedom—both financial and architectural.

The AI coding tools market is experiencing rapid evolution, driven by the accelerating improvements in open-source models. These models are narrowing the gap with proprietary alternatives at an unprecedented pace. Notably, Moonshot AI’s Kimi K2 and z.ai’s GLM 4.5 now benchmark near Claude Sonnet 4 levels, yet they are freely available. If this trajectory continues, the quality advantage that currently justifies Claude Code’s premium pricing may erode significantly. This would inevitably place pressure on Anthropic to compete more intensely on features, user experience, and seamless integration rather than relying solely on superior model capability.

For the time being, developers face a clear choice. Those who prioritize the absolute best model quality, can afford premium pricing, and are willing to accept usage restrictions may continue to prefer Claude Code. However, those who place a higher value on cost-effectiveness, privacy, offline access, and architectural flexibility now have a compelling and genuine alternative in Goose. The mere existence of a zero-dollar, open-source competitor offering comparable core functionality to a $200-per-month commercial product is itself remarkable. It underscores both the maturation of open-source AI infrastructure and the profound appetite among developers for tools that respect their autonomy and empower them with greater control.

Goose is not without its limitations. It typically requires a more technical setup process than most commercial alternatives. It also depends on substantial hardware resources that not every developer possesses. Furthermore, while its model options are improving rapidly, they may still trail the very best proprietary offerings when tackling the most complex and nuanced tasks. Yet, for a growing community of developers, these limitations are considered acceptable trade-offs for something increasingly rare and valuable in the AI landscape: a tool that truly belongs to them.

Goose is available for download at github.com/block/goose. Ollama is available at ollama.com. Both projects are free and open source.